Hello everyone,

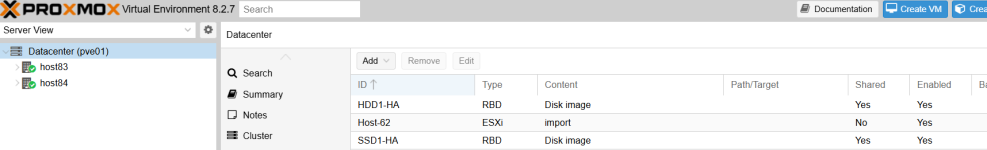

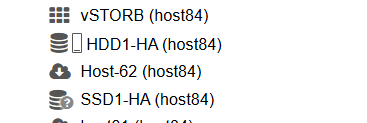

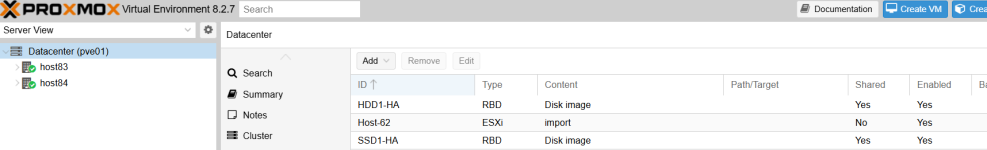

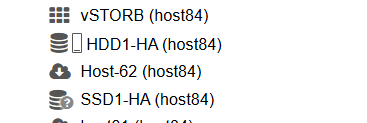

We are in the process of migrating all of our VMware infrastructure hosts to Proxmox. Our current production VMware infrastructure is backed by two existing Ceph clusters: one for the SSD performance tier and a second one for the cold storage tier with mechanical disks.

Through the UI, we were able to add the capacity tier Ceph cluster as RBD storage, but we are unable to do the same with the SSD one.

Adding the SSD1-HA cluster doesn't show any errors, but the storage stays inactive afterwards.

root@host84:~# pvesm status

got timeout

Name Type Status Total Used Available %

HDD1-HA rbd active 75592859012 1262337412 74330521600 1.67%

Host-62 esxi active 0 0 0 0.00%

SSD1-HA rbd inactive 0 0 0 0.00%

host61 esxi active 0 0 0 0.00%

host63 esxi active 0 0 0 0.00%

host64 esxi active 0 0 0 0.00%

host66 esxi active 0 0 0 0.00%

local dir active 98497780 4609804 88838428 4.68%

local-lvm lvmthin active 335101952 0 335101952 0.00%

pbs1 pbs active 107372087296 857020304 106515066992 0.80%

Also, pvestatd logs a lot of "got timeout" messages, but only when SSD1-HA is configured.

Nov 05 23:35:26 host84 pvestatd[3475507]: status update time (5.760 seconds)

Nov 05 23:35:35 host84 pvestatd[3475507]: got timeout

Nov 05 23:35:35 host84 pvestatd[3475507]: status update time (5.772 seconds)

Nov 05 23:35:46 host84 pvestatd[3475507]: got timeout

Nov 05 23:35:46 host84 pvestatd[3475507]: status update time (5.699 seconds)

Nov 05 23:35:55 host84 pvestatd[3475507]: got timeout

Nov 05 23:35:56 host84 pvestatd[3475507]: status update time (5.780 seconds)

Nov 05 23:36:05 host84 pvestatd[3475507]: got timeout

Nov 05 23:36:06 host84 pvestatd[3475507]: status update time (5.745 seconds)

Communication between the Proxmox nodes and the Ceph monitors seems to be OK; all monitors are pingable.

Also, on the Ceph cluster I can see log entries for this communication:
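Since ping only proves ICMP reachability, a TCP connect test against the monitor ports would be a more meaningful check. Below is a minimal sketch, assuming the monitors listen on the default Ceph monitor ports (3300 for msgr2, 6789 for legacy msgr1). The helper names `tcp_port_open` and `check_monitors` are made up for illustration and are not part of any Proxmox or Ceph tooling.

```python
import socket

# Default Ceph monitor ports: 3300 (msgr2) and 6789 (legacy msgr1).
# Assumption: the SSD cluster has not been configured with custom ports.
MON_PORTS = (3300, 6789)


def tcp_port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable hosts and timeouts.
        return False


def check_monitors(mon_hosts, ports=MON_PORTS, timeout=3.0):
    """Map each (host, port) pair to whether a TCP connect succeeded."""
    return {
        (host, port): tcp_port_open(host, port, timeout)
        for host in mon_hosts
        for port in ports
    }
```

It could be run on a Proxmox node as `check_monitors([...])`, passing the monitor addresses configured for SSD1-HA. A `False` entry for a pingable host would suggest a firewall or port issue rather than a routing problem.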

ceph-mon.CEPH-21.log:2024-11-05T23:37:20.212+0100 7fc624c0d700 0 log_channel(audit) log [DBG] : from='client.? 10.74.2.84:0/2606079522' entity='client.PROXMOX_SSD' cmd=[{"format":"json","prefix":"df"}]: dispatch

ceph-mon.CEPH-21.log:2024-11-05T23:37:40.836+0100 7fc624c0d700 0 log_channel(audit) log [DBG] : from='client.? 10.74.2.84:0/3642774830' entity='client.PROXMOX_SSD' cmd=[{"prefix":"df","format":"json"}]: dispatch

ceph-mon.CEPH-21.log:2024-11-05T23:37:59.248+0100 7fc624c0d700 0 log_channel(audit) log [DBG] : from='client.? 10.74.2.83:0/4275618953' entity='client.PROXMOX_SSD' cmd=[{"prefix":"df","format":"json"}]: dispatch

ceph-mon.CEPH-21.log:2024-11-05T23:38:08.720+0100 7fc624c0d700 0 log_channel(audit) log [DBG] : from='client.? 10.74.2.83:0/2685920571' entity='client.PROXMOX_SSD' cmd=[{"prefix":"df","format":"json"}]: dispatch
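The monitor log shows the `df` command being dispatched, so the request does reach the cluster. One way to narrow this down would be to run the same `df` by hand from a Proxmox node and time it. The sketch below is only an illustration under assumptions: the monitor address is a placeholder, the keyring path follows the usual Proxmox location for external RBD storages and has not been verified for this setup, and the `ceph` CLI must be installed on the node. `build_df_command` and `timed_run` are hypothetical helper names.

```python
import subprocess
import time


def build_df_command(monitor, client_id, keyring, connect_timeout=10):
    """Build a `ceph df` call using an explicit monitor, client id and keyring."""
    return [
        "ceph",
        "-m", monitor,
        "--id", client_id,
        "--keyring", keyring,
        "--connect-timeout", str(connect_timeout),
        "df", "--format", "json",
    ]


def timed_run(cmd, timeout=30.0):
    """Run cmd and return (returncode, elapsed_seconds, stderr_text).

    returncode is None if the command did not finish within timeout.
    """
    start = time.monotonic()
    try:
        proc = subprocess.run(cmd, capture_output=True, text=True, timeout=timeout)
        return proc.returncode, time.monotonic() - start, proc.stderr.strip()
    except subprocess.TimeoutExpired:
        return None, time.monotonic() - start, "timed out"


# Example values (assumptions, adjust to the real environment):
#   monitor   = "192.0.2.21"                           # placeholder monitor IP
#   client_id = "PROXMOX_SSD"                          # entity seen in the mon log
#   keyring   = "/etc/pve/priv/ceph/SSD1-HA.keyring"   # assumed keyring path
# rc, elapsed, err = timed_run(build_df_command(monitor, client_id, keyring))
```

If the manual call also takes several seconds or never returns, the delay is probably on the Ceph side or the network path rather than in pvestatd itself.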

I have also double-checked the Ceph client keyrings and they look OK to me.

The only difference between the clusters is the Ceph version. The one that works is running Ceph Quincy; the one that doesn't is running Ceph Octopus.

UPDATE: I have upgraded the SSD cluster to Quincy, but that did not resolve the issue.

Any ideas? I am having a hard time finding related logs on the PVE side.
