Hello,

Three months ago I've moved everything to a new PBS server and set an S3 provider as datastore. I see no errors being generated, everything seems to be fine, so I'm thinking to decommission the old server. On the new server, the

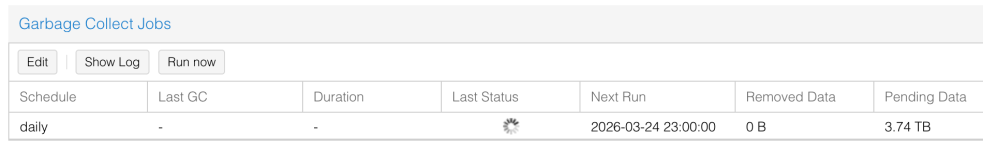

.chunks directory size is 1TB. Both PBS and the S3 provider report 1TB of usage.What worries me is that the old server has no less than 6TB in the

.chunks directory. No backups were executed in the last 3 months. I've executed garbage collection and pruning tasks, but the size of the .chunks directory remains the same.Retention policies are identical for both PBS instances: 7x Daily, 4x Weekly. There were no huge changes made inside the VMs, there are practically the same VMs running for 2 years.

Could you please let me know what could be the root cause of this inconsistency?

Thank you!
