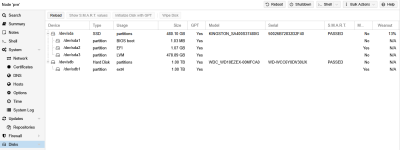

How do I check if Proxmox can even see the HDD?

node > Disks or `lsblk -o+FSTYPE,LABEL,MODEL`.

How do I format it (and which filesystem should I use for a single HDD like this)?

node > Disks > .... What to use depends a bit on the use case and model number of the disk. Please share yours.I like ZFS but it tends to perform badly with VMs on HDDs. without disabling

sync or having a separate SLOG. CTs are fine.LVM-Thin would by my secondary choice but you can't directly store files there like you can with ZFS. I don't recommend

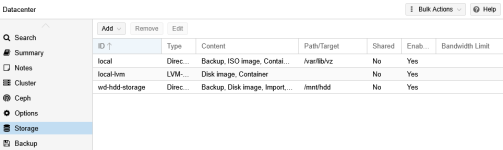

Directory for storing guests.Above way does that automatically if selected. You should edit the Thin provision option for ZFS though. You can create/edit viaHow do I add it as a storage target in Proxmox so I can actually assign VMs or backups to it?

Datacenter > Storage.This is too vague and depends a lot on storage, guest type, etc. Something I recommend most often isAny mount options I should use to keep things running smoothly?

discard. Not as mount option though.I'd suggest

relatime instead of noatime which, AFAIK, is also the default for ext4 nowadays.
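For anyone who prefers the CLI, here is a rough sketch of how the settings mentioned above could be checked or applied. Everything named here is an assumption for illustration: the pool and storage ID `tank`, the guest IDs `100` and `101`, and the disk slot `scsi0` with volume `vm-100-disk-0`. It also assumes that "not as mount option" means enabling `discard` on the virtual disk and trimming from inside the guest. The `run` helper is hypothetical and only prints each command unless `RUN=1` is set, so nothing changes by accident.

```shell
#!/bin/sh
# Hypothetical names, adjust to your own setup.
POOL="tank"      # ZFS pool name (assumption)
STORAGE="tank"   # Proxmox storage ID (assumption)
VMID=100         # example VM ID (assumption)
CTID=101         # example container ID (assumption)

# Print each command instead of running it, unless RUN=1 is set.
run() {
    if [ "${RUN:-0}" = "1" ]; then
        "$@"
    else
        echo "+ $*"
    fi
}

# Show the current sync setting of the pool (read-only check).
run zfs get sync "$POOL"

# Enable thin provisioning on the ZFS storage (the "Thin provision" checkbox).
run pvesm set "$STORAGE" --sparse 1

# Enable discard on a VM disk; slot and volume name are assumptions.
run qm set "$VMID" --scsi0 "${STORAGE}:vm-${VMID}-disk-0,discard=on"

# Trim a container's filesystem from the host.
run pct fstrim "$CTID"

# Show the mount options of the root filesystem (look for relatime/noatime).
run findmnt -no OPTIONS /
```

Running it as-is only lists the commands; `RUN=1 sh script.sh` would execute them on the node. Note that re-specifying a disk with `qm set` replaces its option string, so include any options the disk already has.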
