Hi All,

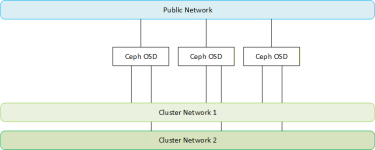

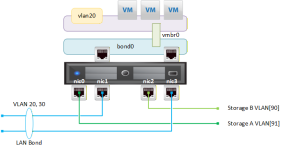

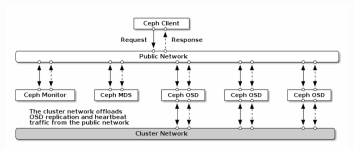

I was googling around and understood that it is best to have two fault domains when using Ceph, so I set up two separate networks. The Proxmox GUI only asks for a single back-end cluster network, which is fine. I then edited /etc/ceph/ceph.conf and added the second network to cluster_network:

Code:

[global]

...

...

cluster_network = 10.10.10.0/24, 10.10.20.0/24

...

Then I saved the file and restarted the Ceph services:

Code:

systemctl restart ceph-*

When I try to add an OSD, I get an error:

Code:

command '/sbin/ip address show to '10.10.10.0/26, 10.10.20.0/26' up' failed: exit code 1 (500)

Does this mean I can only configure it via the .conf file?
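As an illustration only: the error message shows the whole comma-separated value being handed to `ip address show to` as one prefix. The sketch below uses Python's standard `ipaddress` module (chosen just for illustration, not something Proxmox is known to use here) to show that the combined string is not a single valid prefix, while each part is valid on its own. The helper name `split_networks` is hypothetical.

```python
import ipaddress


def split_networks(value):
    """Split a comma-separated network list into individual network objects.

    Hypothetical helper for illustration; not part of Ceph or Proxmox.
    """
    return [
        ipaddress.ip_network(part.strip())
        for part in value.split(",")
        if part.strip()
    ]


# The value exactly as it appears in the error message.
raw = "10.10.10.0/26, 10.10.20.0/26"

# Treating the whole string as one prefix fails to parse.
try:
    ipaddress.ip_network(raw)
    whole_is_valid = True
except ValueError:
    whole_is_valid = False

# Splitting on commas first gives two valid networks.
networks = split_networks(raw)

print("whole string is a valid prefix:", whole_is_valid)
print("individual networks:", [str(n) for n in networks])
```

If that reading is right, the failure would come from the tool that consumes the value rather than from the networks themselves, but I have not confirmed this.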