Hello everyone,

I have spent a lot of time trying to figure out what is causing this IO wait. On this cluster, VMs that do a high amount of IO show a lot of IO wait (e.g. ~30k read I/O with 50% IO wait).
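For reference, the IO wait percentage that htop/top report comes from the kernel's CPU time counters in `/proc/stat`: it is the share of CPU time spent idle while IO was outstanding, so it points at storage latency rather than CPU load. A minimal sketch of that computation, assuming two samples of the aggregate `cpu` line taken some interval apart (the function names are illustrative, not from any tool):

```python
import time

def iowait_percent(before: str, after: str) -> float:
    """IO wait share (%) between two samples of the aggregate 'cpu' line of /proc/stat.

    Field order after the 'cpu' label: user nice system idle iowait irq softirq
    steal guest guest_nice. guest/guest_nice are already counted in user/nice,
    so only the first eight fields are summed to avoid double counting.
    """
    b = [int(x) for x in before.split()[1:9]]
    a = [int(x) for x in after.split()[1:9]]
    delta = [new - old for old, new in zip(b, a)]
    total = sum(delta)
    return 100.0 * delta[4] / total if total else 0.0  # index 4 = iowait

def sample_iowait(interval_s: float = 1.0, path: str = "/proc/stat") -> float:
    """Read /proc/stat twice (Linux only) and return IO wait % over the interval."""
    def cpu_line() -> str:
        with open(path) as f:
            return f.readline()  # the first line is the aggregate "cpu" line
    first = cpu_line()
    time.sleep(interval_s)
    return iowait_percent(first, cpu_line())
```

Calling `sample_iowait()` inside the guest gives the same kind of number htop shows for the VM.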

Summary of my setup:

PVE

```
proxmox-ve: 9.0.0 (running kernel: 6.17.2-2-pve)
pve-manager: 9.0.18 (running version: 9.0.18/5cacb35d7ee87217)
proxmox-kernel-helper: 9.0.4
proxmox-kernel-6.17.2-2-pve-signed: 6.17.2-2
proxmox-kernel-6.17: 6.17.2-2
proxmox-kernel-6.8: 6.8.12-17
proxmox-kernel-6.8.12-17-pve-signed: 6.8.12-17
amd64-microcode: 3.20250311.1
ceph: 19.2.3-pve4
ceph-fuse: 19.2.3-pve4
corosync: 3.1.9-pve2
criu: 4.1.1-1
frr-pythontools: 10.3.1-1+pve4
ifupdown2: 3.3.0-1+pmx11
intel-microcode: 3.20251111.1~deb13u1
libjs-extjs: 7.0.0-5
libproxmox-acme-perl: 1.7.0
libproxmox-backup-qemu0: 2.0.1
libproxmox-rs-perl: 0.4.1
libpve-access-control: 9.0.4
libpve-apiclient-perl: 3.4.2
libpve-cluster-api-perl: 9.0.7
libpve-cluster-perl: 9.0.7
libpve-common-perl: 9.0.15
libpve-guest-common-perl: 6.0.2
libpve-http-server-perl: 6.0.5
libpve-network-perl: 1.2.3
libpve-rs-perl: 0.11.3
libpve-storage-perl: 9.0.18
libspice-server1: 0.15.2-1+b1
lvm2: 2.03.31-2+pmx1
lxc-pve: 6.0.5-3
lxcfs: 6.0.4-pve1
novnc-pve: 1.6.0-3
openvswitch-switch: 3.5.0-1+b1
proxmox-backup-client: 4.1.0-1
proxmox-backup-file-restore: 4.1.0-1
proxmox-backup-restore-image: 1.0.0
proxmox-firewall: 1.2.1
proxmox-kernel-helper: 9.0.4
proxmox-mail-forward: 1.0.2
proxmox-mini-journalreader: 1.6
proxmox-offline-mirror-helper: 0.7.3
proxmox-widget-toolkit: 5.1.2
pve-cluster: 9.0.7
pve-container: 6.0.18
pve-docs: 9.0.9
pve-edk2-firmware: not correctly installed
pve-esxi-import-tools: 1.0.1
pve-firewall: 6.0.4
pve-firmware: 3.17-2
pve-ha-manager: 5.0.8
pve-i18n: 3.6.4
pve-qemu-kvm: 10.1.2-4
pve-xtermjs: 5.5.0-3
qemu-server: 9.0.30
smartmontools: 7.4-pve1
spiceterm: 3.4.1
swtpm: 0.8.0+pve3
vncterm: 1.9.1
zfsutils-linux: 2.3.4-pve1
```

Hardware

I have 6 servers in a 3-AZ (OVH) setup (2 servers per AZ), each with the following hardware:

- AMD EPYC Genoa 9354 (32C/64T)
- 512 GB DDR5
- 6 × 7 TB enterprise-grade NVMe drives for OSDs
- 4 × 25 Gb (Mellanox) network interfaces, bonded together

Network

I have no choice but to bond the four 25G ports; for that, I am using Open vSwitch in balance-tcp mode.

View attachment 96612

I have a dedicated interface/VLAN for Ceph and Corosync (on top of the bond).
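With balance-tcp, each TCP flow is hashed onto one bond member, so the bond offers about 100 Gb/s in aggregate while a single connection still cannot use more than one 25 Gb/s link at a time. The toy model below only illustrates that idea: the hash function is NOT the one Open vSwitch uses, and the IP addresses and ports are hypothetical.

```python
import hashlib
from collections import Counter

def pick_member(src_ip: str, dst_ip: str, src_port: int, dst_port: int,
                n_links: int = 4) -> int:
    """Toy L4 hash: map one TCP flow (its address/port tuple) to one bond member.

    Not Open vSwitch's real hash; it only mimics the property that every packet
    of a given flow leaves through the same member.
    """
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}".encode()
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:4], "big") % n_links

def spread(flows, n_links: int = 4) -> Counter:
    """Count how many flows land on each bond member."""
    return Counter(pick_member(*flow, n_links=n_links) for flow in flows)

# Hypothetical flows: many connections between two hosts on varying ports.
FLOWS = [("10.0.0.1", "10.0.0.2", 40000 + i, 6800 + i % 8) for i in range(1000)]
print(spread(FLOWS))
```

Many parallel connections spread across the members; any one of them stays pinned to a single 25 Gb/s link.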

CEPH

- 1 MGR per node
- 1 MON per node
- 6 OSDs per node
- 1 replicated pool (size 3, min_size 2)

Simplified CRUSH map:

```
root ceph-prod-3az
    zone 1
        host a
            osd.0
            osd.1
            osd.2
            osd.3
            osd.4
            osd.5
        host b
            osd.6
            osd.7
            osd.8
            osd.9
            osd.10
            osd.11
    zone 2
        host c
            osd.0
            osd.1
            osd.2
            osd.3
            osd.4
            osd.5
        host d
            osd.6
            osd.7
            osd.8
            osd.9
            osd.10
            osd.11
    zone 3
        host e
            osd.0
            osd.1
            osd.2
            osd.3
            osd.4
            osd.5
        host f
            osd.6
            osd.7
            osd.8
            osd.9
            osd.10
            osd.11
```

CRUSH rule:

```
rule 3az_rule {
    id 1
    type replicated
    step take ceph-prod-3az class nvme
    step choose firstn 3 type zone
    step chooseleaf firstn 2 type host
    step emit
}
```
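The two `firstn` steps multiply: the rule can emit 3 zones × 2 hosts = 6 OSDs per placement group, but CRUSH only returns as many OSDs as the pool size. The simplified model below (plain Python, not the real CRUSH algorithm: no hashing, no weights, buckets taken in order) illustrates the consequence, assuming size 3 as stated for the pool: the replicas land in only two zones, two of them in the same zone. The real mappings can be checked with `crushtool -i <compiled map> --test --rule 1 --num-rep 3 --show-mappings`.

```python
# Hypothetical topology mirroring the simplified CRUSH map above
# (OSDs omitted: one leaf per host is enough to show the placement pattern).
TOPOLOGY = {
    "zone 1": ["host a", "host b"],
    "zone 2": ["host c", "host d"],
    "zone 3": ["host e", "host f"],
}

def toy_rule(topology: dict, zones: int = 3, hosts_per_zone: int = 2,
             pool_size: int = 3) -> list:
    """Deterministic stand-in for:
        step choose firstn 3 type zone
        step chooseleaf firstn 2 type host
        step emit
    Real CRUSH picks buckets pseudo-randomly per PG (hash + weights); this model
    takes them in order, which is enough to show how the step counts compose.
    """
    emitted = []
    for zone in list(topology)[:zones]:
        for host in topology[zone][:hosts_per_zone]:
            emitted.append((zone, host))
    # Only the first pool_size results are used for the PG.
    return emitted[:pool_size]

for size in (3, 6):
    placement = toy_rule(TOPOLOGY, pool_size=size)
    zones_used = sorted({zone for zone, _ in placement})
    print(f"size={size}: {placement} -> zones used: {zones_used}")
```

In real CRUSH the zone order differs per PG, so which zone gets two copies (and which gets none) varies across PGs.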

Problems

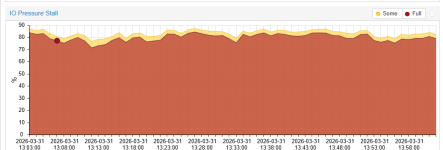

I mainly run PostgreSQL database VMs. Below is htop on a VM with high IO wait:

View attachment 96607

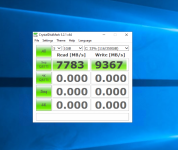

IO view of this VM's data disk:

View attachment 96608

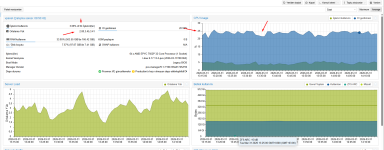

A Node Exporter view of this VM:

View attachment 96609

VM drive config:

View attachment 96613
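To connect the IOPS in these graphs with latency: by Little's law, the number of IOs in flight equals throughput times average latency. For a process that issues one synchronous IO at a time, the per-IO round trip to the OSDs therefore caps its IOPS, even when the OSDs and the network look idle. A minimal sketch; the queue depths and latencies below are hypothetical, not measured on this cluster:

```python
def iops_from_latency(queue_depth: float, latency_ms: float) -> float:
    """Little's law (L = lambda * W) rearranged: IOPS = outstanding IOs / latency."""
    return queue_depth / (latency_ms / 1000.0)

def latency_from_iops(iops: float, queue_depth: float) -> float:
    """Same law, solved for the average per-IO latency in milliseconds."""
    return 1000.0 * queue_depth / iops

# Hypothetical numbers: one synchronous reader at 0.5 ms per read
# tops out around 2000 IOPS...
print(iops_from_latency(queue_depth=1, latency_ms=0.5))
# ...and 30k IOPS with 30 IOs in flight means roughly 1 ms per IO on average.
print(latency_from_iops(iops=30_000, queue_depth=30))
```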

What I have verified

- There is no OSD latency problem
- The network is not saturated
- The hosts are not overloaded (~20% CPU usage, 50% RAM usage per node)
- Tuned the softnet settings a bit to eliminate packet drops
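The per-CPU counters behind the softnet settings live in `/proc/net/softnet_stat`, one line per CPU with hexadecimal columns. A small parser sketch (function names are illustrative) to check whether drops or time squeezes still increase after the tuning; the counters are cumulative since boot, so compare two readings:

```python
def parse_softnet_stat(text: str) -> list:
    """Parse /proc/net/softnet_stat into (processed, dropped, time_squeeze) per CPU.

    Column 0: packets processed
    Column 1: packets dropped because the per-CPU backlog was full
              (related to net.core.netdev_max_backlog)
    Column 2: "time squeeze" events, where the RX softirq ran out of budget
              (related to net.core.netdev_budget / netdev_budget_usecs)
    """
    rows = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 3:
            continue  # skip blank or malformed lines
        processed, dropped, squeezed = (int(v, 16) for v in fields[:3])
        rows.append((processed, dropped, squeezed))
    return rows

def read_softnet_stat(path: str = "/proc/net/softnet_stat") -> list:
    """Linux only: read and parse the current counters."""
    with open(path) as f:
        return parse_softnet_stat(f.read())
```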

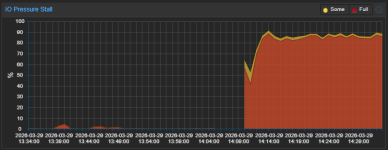

I have noticed some network errors, but they seem OK. Is that right?

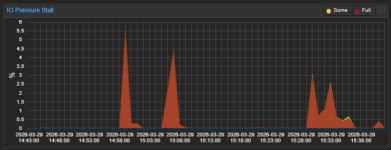

Graph of one node

View attachment 96610

Has anyone experienced this, or does anyone have an idea?