Hello, we need to migrate all cloud environments to Proxmox. At present, I am evaluating and testing Proxmox+Ceph+OpenStack.But now we are facing the following difficulties:

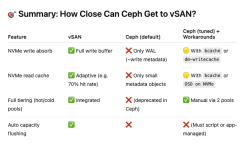

- When VMware vSAN was migrated to ceph, I found that hdd+ssd performed very poorly in ceph, and the write performance was very poor. Performance is far less than vSAN

- The sequential writing performance of ceph in the full flash memory structure is not as good as that of a single hard disk, or even a single mechanical hard disk

- In using the hdd+ssd structure in bcache, the sequential write performance of ceph is far lower than that of a single hard disk

Test server parameters (this is not important)

CPU:Dual Intel® Xeon® E5-2698Bv3Memory: 8 x 16G DDR3

Dual 1 Gbit NIC:Realtek Semiconductor Co., Ltd. RTL8111/8168/8411

Disk:

1 x 500G NVME SAMSUNG MZALQ512HALU-000L1 (It is also the ssd-data Thinpool in PVE)

1 x 500G SATA WDC_WD5000AZLX-60K2TA0 (Physical machine system disk)

2 x 500G SATA WDC_WD5000AZLX-60K2TA0

1 x 1T SATA ST1000LM035-1RK172

PVE:pve-manager/7.3-4/d69b70d4 (running kernel: 5.15.74-1-pve)

Network Configure:

enp4s0 (OVS Port) -> vmbr0 (OVS Bridge) -> br0mgmt (192.168.1.3/24,192.168.1.1)

enp5s0 (OVS Port,MTU=9000) -> vmbr1 (OVS Bridge,MTU=9000)

vmbr2 (OVS Bridge,MTU=9000)

Test virtual machine parameters x 3 (three virtual machines are the same parameters)

CPU:32 (1 sockets, 32 cores) [host]Memory:32G

Disk:

1 x local-lvm:vm-101-disk-0,iothread=1,size=32G

2 x ssd-data:vm-101-disk-0,iothread=1,size=120G

Network Device:

net0: bridge=vmbr0,firewall=1

net1: bridge=vmbr2,firewall=1,mtu=1 (Ceph Cluster/Public Network)

net2: bridge=vmbr0,firewall=1

net3: bridge=vmbr0,firewall=1

Network Configure:

ens18 (net0,OVS Port) -> vmbr0 (OVS Bridge) -> br0mgmt (10.10.1.11/24,10.10.1.1)

ens19 (net1,OVS Port,MTU=9000) -> vmbr1 (OVS Bridge,MTU=9000) -> br1ceph (192.168.10.1/24,MTU=9000)

ens20 (net2,Network Device,Active=No)

ens21 (net3,Network Device,Active=No)

Benchmarking tools

- fio

- fio-cdm (https://github.com/xlucn/fio-cdm)

Use 'python fio-cdm - f -' to get

Code:

[global]

ioengine=libaio

filename=.fio_testmark

directory=/root

size=1073741824.0

direct=1

runtime=5

refill_buffers

norandommap

randrepeat=0

allrandrepeat=0

group_reporting

[seq-read-1m-q8-t1]

rw=read

bs=1m

rwmixread=0

iodepth=8

numjobs=1

loops=5

stonewall

[seq-write-1m-q8-t1]

rw=write

bs=1m

rwmixread=0

iodepth=8

numjobs=1

loops=5

stonewall

[seq-read-1m-q1-t1]

rw=read

bs=1m

rwmixread=0

iodepth=1

numjobs=1

loops=5

stonewall

[seq-write-1m-q1-t1]

rw=write

bs=1m

rwmixread=0

iodepth=1

numjobs=1

loops=5

stonewall

[rnd-read-4k-q32-t16]

rw=randread

bs=4k

rwmixread=0

iodepth=32

numjobs=16

loops=5

stonewall

[rnd-write-4k-q32-t16]

rw=randwrite

bs=4k

rwmixread=0

iodepth=32

numjobs=16

loops=5

stonewall

[rnd-read-4k-q1-t1]

rw=randread

bs=4k

rwmixread=0

iodepth=1

numjobs=1

loops=5

stonewall

[rnd-write-4k-q1-t1]

rw=randwrite

bs=4k

rwmixread=0

iodepth=1

numjobs=1

loops=5

stonewallEnvironment construction steps

Code:

# prepare tools

root@pve01:~# apt update -y && apt upgrade -y

root@pve01:~# apt install fio git -y

root@pve01:~# git clone https://github.com/xlucn/fio-cdm.git

# create test block

root@pve01:~# rbd create test -s 20G

root@pve01:~# rbd map test

root@pve01:~# mkfs.xfs /dev/rbd0

root@pve01:~# mkdir /mnt/test

root@pve01:/mnt# mount /dev/rbd0 /mnt/test

# start test

root@pve01:/mnt/test# python3 ~/fio-cdm/fio-cdmEnvironmental test

Code:

root@pve01:~# apt install iperf3 -y

root@pve01:~# iperf3 -s

-----------------------------------------------------------

Server listening on 5201

-----------------------------------------------------------

Accepted connection from 10.10.1.12, port 52968

[ 5] local 10.10.1.11 port 5201 connected to 10.10.1.12 port 52972

[ ID] Interval Transfer Bitrate

[ 5] 0.00-1.00 sec 1.87 GBytes 16.0 Gbits/sec

[ 5] 1.00-2.00 sec 1.92 GBytes 16.5 Gbits/sec

[ 5] 2.00-3.00 sec 1.90 GBytes 16.4 Gbits/sec

[ 5] 3.00-4.00 sec 1.90 GBytes 16.3 Gbits/sec

[ 5] 4.00-5.00 sec 1.85 GBytes 15.9 Gbits/sec

[ 5] 5.00-6.00 sec 1.85 GBytes 15.9 Gbits/sec

[ 5] 6.00-7.00 sec 1.70 GBytes 14.6 Gbits/sec

[ 5] 7.00-8.00 sec 1.75 GBytes 15.0 Gbits/sec

[ 5] 8.00-9.00 sec 1.89 GBytes 16.2 Gbits/sec

[ 5] 9.00-10.00 sec 1.87 GBytes 16.0 Gbits/sec

[ 5] 10.00-10.04 sec 79.9 MBytes 15.9 Gbits/sec

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate

[ 5] 0.00-10.04 sec 18.6 GBytes 15.9 Gbits/sec receiver

Code:

root@pve01:~# ping -M do -s 8000 192.168.10.2

PING 192.168.10.2 (192.168.10.2) 8000(8028) bytes of data.

8008 bytes from 192.168.10.2: icmp_seq=1 ttl=64 time=1.51 ms

8008 bytes from 192.168.10.2: icmp_seq=2 ttl=64 time=0.500 ms

^C

--- 192.168.10.2 ping statistics ---

2 packets transmitted, 2 received, 0% packet loss, time 1002ms

rtt min/avg/max/mdev = 0.500/1.007/1.514/0.507 ms

root@pve01:~#Benchmark category

- Physical Disk Benchmark

- Single osd, single server benchmark

- Multiple OSDs, single server benchmarks

- Multiple OSDs, multiple server benchmarks

Benchmark results (Ceph and the system have not been tuned, and bcache acceleration has not been used)

1. Physical Disk Benchmark (Test sequence is 4)

step.

Code:

root@pve1:~# lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

sda 8:0 0 465.8G 0 disk

├─sda1 8:1 0 1007K 0 part

├─sda2 8:2 0 512M 0 part /boot/efi

└─sda3 8:3 0 465.3G 0 part

├─pve-root 253:0 0 96G 0 lvm /

├─pve-data_tmeta 253:1 0 3.5G 0 lvm

│ └─pve-data-tpool 253:3 0 346.2G 0 lvm

│ ├─pve-data 253:4 0 346.2G 1 lvm

│ └─pve-vm--100--disk--0 253:5 0 16G 0 lvm

└─pve-data_tdata 253:2 0 346.2G 0 lvm

└─pve-data-tpool 253:3 0 346.2G 0 lvm

├─pve-data 253:4 0 346.2G 1 lvm

└─pve-vm--100--disk--0 253:5 0 16G 0 lvm

sdb 8:16 0 931.5G 0 disk

sdc 8:32 0 465.8G 0 disk

sdd 8:48 0 465.8G 0 disk

nvme0n1 259:0 0 476.9G 0 disk

root@pve1:~# mkfs.xfs /dev/nvme0n1 -f

root@pve1:~# mkdir /mnt/nvme

root@pve1:~# mount /dev/nvme0n1 /mnt/nvme

root@pve1:~# cd /mnt/nvme/result.

Code:

root@pve1:/mnt/nvme# python3 ~/fio-cdm/fio-cdm

tests: 5, size: 1.0GiB, target: /mnt/nvme 3.4GiB/476.7GiB

|Name | Read(MB/s)| Write(MB/s)|

|------------|------------|------------|

|SEQ1M Q8 T1 | 2361.95| 1435.48|

|SEQ1M Q1 T1 | 1629.84| 1262.63|

|RND4K Q32T16| 954.86| 1078.88|

|. IOPS | 233119.53| 263398.08|

|. latency us| 2194.84| 1941.78|

|RND4K Q1 T1 | 55.56| 225.06|

|. IOPS | 13565.49| 54946.21|

|. latency us| 72.76| 16.97|2. Single osd, single server benchmark (Test sequence is 3)

Modify ceph.conf set osd_pool_default_min_size and osd_pool_default_size with 1, then systemctl restart ceph.target and fix all errorsstep.

Code:

root@pve01:/mnt/test# ceph osd pool get rbd size

size: 2

root@pve01:/mnt/test# ceph config set global mon_allow_pool_size_one true

root@pve01:/mnt/test# ceph osd pool set rbd min_size 1

set pool 2 min_size to 1

root@pve01:/mnt/test# ceph osd pool set rbd size 1 --yes-i-really-mean-it

set pool 2 size to 1result

Code:

root@pve01:/mnt/test# ceph -s

cluster:

id: 1f3eacc8-2488-4e1a-94bf-7181ee7db522

health: HEALTH_WARN

2 pool(s) have no replicas configured

services:

mon: 3 daemons, quorum pve01,pve02,pve03 (age 17m)

mgr: pve01(active, since 17m), standbys: pve02, pve03

osd: 6 osds: 1 up (since 19s), 1 in (since 96s)

data:

pools: 2 pools, 33 pgs

objects: 281 objects, 1.0 GiB

usage: 1.1 GiB used, 119 GiB / 120 GiB avail

pgs: 33 active+clean

root@pve01:/mnt/test# ceph osd tree

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF

-1 0.70312 root default

-3 0.23438 host pve01

0 ssd 0.11719 osd.0 up 1.00000 1.00000

1 ssd 0.11719 osd.1 down 0 1.00000

-5 0.23438 host pve02

2 ssd 0.11719 osd.2 down 0 1.00000

3 ssd 0.11719 osd.3 down 0 1.00000

-7 0.23438 host pve03

4 ssd 0.11719 osd.4 down 0 1.00000

5 ssd 0.11719 osd.5 down 0 1.00000

root@pve01:/mnt/test# python3 ~/fio-cdm/fio-cdm

tests: 5, size: 1.0GiB, target: /mnt/test 175.8MiB/20.0GiB

|Name | Read(MB/s)| Write(MB/s)|

|------------|------------|------------|

|SEQ1M Q8 T1 | 1153.07| 515.29|

|SEQ1M Q1 T1 | 447.35| 142.98|

|RND4K Q32T16| 99.07| 32.19|

|. IOPS | 24186.26| 7859.91|

|. latency us| 21148.94| 65076.23|

|RND4K Q1 T1 | 7.47| 1.48|

|. IOPS | 1823.24| 360.98|

|. latency us| 545.98| 2765.23|

root@pve01:/mnt/test#3. Multiple OSDs, single server benchmarks (Test sequence is 2)

Change crushmap set step chooseleaf firstn 0 type host to step chooseleaf firstn 0 type osdOSD tree

Code:

root@pve01:/etc/ceph# ceph osd tree

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF

-1 0.70312 root default

-3 0.23438 host pve01

0 ssd 0.11719 osd.0 up 1.00000 1.00000

1 ssd 0.11719 osd.1 up 1.00000 1.00000

-5 0.23438 host pve02

2 ssd 0.11719 osd.2 down 0 1.00000

3 ssd 0.11719 osd.3 down 0 1.00000

-7 0.23438 host pve03

4 ssd 0.11719 osd.4 down 0 1.00000

5 ssd 0.11719 osd.5 down 0 1.00000result

Code:

root@pve01:/mnt/test# python3 ~/fio-cdm/fio-cdm

tests: 5, size: 1.0GiB, target: /mnt/test 175.8MiB/20.0GiB

|Name | Read(MB/s)| Write(MB/s)|

|------------|------------|------------|

|SEQ1M Q8 T1 | 1376.59| 397.29|

|SEQ1M Q1 T1 | 442.74| 111.41|

|RND4K Q32T16| 114.97| 29.08|

|. IOPS | 28068.12| 7099.90|

|. latency us| 18219.04| 72038.06|

|RND4K Q1 T1 | 6.82| 1.04|

|. IOPS | 1665.27| 254.40|

|. latency us| 598.00| 3926.30|4. Multiple OSDs, multiple server benchmarks (Test sequence is 1)

OSD tree

Code:

root@pve01:/etc/ceph# ceph osd tree

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF

-1 0.70312 root default

-3 0.23438 host pve01

0 ssd 0.11719 osd.0 up 1.00000 1.00000

1 ssd 0.11719 osd.1 up 1.00000 1.00000

-5 0.23438 host pve02

2 ssd 0.11719 osd.2 up 1.00000 1.00000

3 ssd 0.11719 osd.3 up 1.00000 1.00000

-7 0.23438 host pve03

4 ssd 0.11719 osd.4 up 1.00000 1.00000

5 ssd 0.11719 osd.5 up 1.00000 1.00000result

Code:

tests: 5, size: 1.0GiB, target: /mnt/test 175.8MiB/20.0GiB

|Name | Read(MB/s)| Write(MB/s)|

|------------|------------|------------|

|SEQ1M Q8 T1 | 1527.37| 296.25|

|SEQ1M Q1 T1 | 408.86| 106.43|

|RND4K Q32T16| 189.20| 43.00|

|. IOPS | 46191.94| 10499.01|

|. latency us| 11068.93| 48709.85|

|RND4K Q1 T1 | 4.99| 0.95|

|. IOPS | 1219.16| 232.37|

|. latency us| 817.51| 4299.14|

Conclusions

- It can be seen that the gap between the write performance of ceph (106.43MB/s) and the write performance of physical disk (1262.63MB/s) is huge, and even the RND4K Q1 T1 directly becomes a mechanical hard disk

- One or more OSDs and one or more machines have little impact on ceph (it may be that my number of clusters is not enough)

- The ceph cluster built with three nodes will cause the disk read performance to drop by half and the write performance to drop by a quarter or more

APPENDIX

Due to the length limitation of the article, the appendix is written on another floorFinally, the question I want to know is:

- How to fix the write performance problem in ceph? Can ceph achieve the same performance as VMware vSAN.

- The results show that the performance of full flash disk is not as good as that of hdd+ssd. So if I do not use bcache, what should I do to fix the performance problem of ceph full flash disk?

- Is there a better solution for the hdd+ssd architecture?