Hi all,

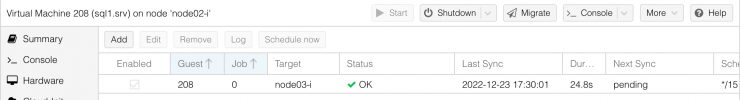

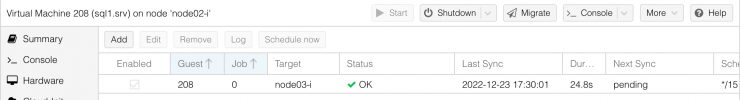

One virtual machine is replicated from `node02-i` to `node03-i`, and the replication is running successfully.

I'm now trying to migrate this VM to `node03-i`, but the migration fails with this error:

Code:

    2022-12-23 17:42:14 starting migration of VM 208 to node 'node03-i' (192.168.80.3)
    2022-12-23 17:42:14 found local, replicated disk 'local-zfs:vm-208-disk-0' (in current VM config)
    2022-12-23 17:42:14 found local, replicated disk 'local-zfs:vm-208-disk-5' (in current VM config)
    2022-12-23 17:42:14 found local, replicated disk 'local-zfs:vm-208-disk-6' (in current VM config)
    2022-12-23 17:42:14 found local, replicated disk 'local-zfs:vm-208-disk-7' (in current VM config)
    2022-12-23 17:42:14 found local, replicated disk 'local-zfs:vm-208-disk-8' (in current VM config)
    2022-12-23 17:42:14 found local, replicated disk 'local-zfs:vm-208-disk-9' (in current VM config)
    2022-12-23 17:42:14 virtio5: start tracking writes using block-dirty-bitmap 'repl_virtio5'
    2022-12-23 17:42:14 ERROR: VM 208 qmp command 'block-dirty-bitmap-add' failed - Bitmap already exists: repl_virtio5
    2022-12-23 17:42:14 aborting phase 1 - cleanup resources
    2022-12-23 17:42:14 ERROR: migration aborted (duration 00:00:00): VM 208 qmp command 'block-dirty-bitmap-add' failed - Bitmap already exists: repl_virtio5
    TASK ERROR: migration aborted

Proxmox is updated on both nodes:

Code:

    root@node02-i:~# pveversion -v
    proxmox-ve: 7.3-1 (running kernel: 5.15.60-1-pve)
    pve-manager: 7.3-4 (running version: 7.3-4/d69b70d4)
    pve-kernel-5.15: 7.3-1
    pve-kernel-helper: 7.3-1
    pve-kernel-5.4: 6.4-20
    pve-kernel-5.15.83-1-pve: 5.15.83-1
    pve-kernel-5.15.60-1-pve: 5.15.60-1
    pve-kernel-5.4.203-1-pve: 5.4.203-1
    pve-kernel-5.4.128-1-pve: 5.4.128-2
    pve-kernel-4.15: 5.4-19
    pve-kernel-4.15.18-30-pve: 4.15.18-58
    pve-kernel-4.15.18-12-pve: 4.15.18-36
    ceph: 15.2.17-pve1
    ceph-fuse: 15.2.17-pve1
    corosync: 3.1.7-pve1
    criu: 3.15-1+pve-1
    glusterfs-client: 9.2-1
    ifupdown: 0.8.36+pve2
    ksm-control-daemon: 1.4-1
    libjs-extjs: 7.0.0-1
    libknet1: 1.24-pve2
    libproxmox-acme-perl: 1.4.3
    libproxmox-backup-qemu0: 1.3.1-1
    libpve-access-control: 7.3-1
    libpve-apiclient-perl: 3.2-1
    libpve-common-perl: 7.3-1
    libpve-guest-common-perl: 4.2-3
    libpve-http-server-perl: 4.1-5
    libpve-storage-perl: 7.3-1
    libqb0: 1.0.5-1
    libspice-server1: 0.14.3-2.1
    lvm2: 2.03.11-2.1
    lxc-pve: 5.0.0-3
    lxcfs: 4.0.12-pve1
    novnc-pve: 1.3.0-3
    proxmox-backup-client: 2.3.1-1
    proxmox-backup-file-restore: 2.3.1-1
    proxmox-mini-journalreader: 1.3-1
    proxmox-offline-mirror-helper: 0.5.0-1
    proxmox-widget-toolkit: 3.5.3
    pve-cluster: 7.3-1
    pve-container: 4.4-2
    pve-docs: 7.3-1
    pve-edk2-firmware: 3.20220526-1
    pve-firewall: 4.2-7
    pve-firmware: 3.6-2
    pve-ha-manager: 3.5.1
    pve-i18n: 2.8-1
    pve-qemu-kvm: 7.1.0-4
    pve-xtermjs: 4.16.0-1
    qemu-server: 7.3-2
    smartmontools: 7.2-pve3
    spiceterm: 3.2-2
    swtpm: 0.8.0~bpo11+2
    vncterm: 1.7-1
    zfsutils-linux: 2.1.7-pve

Could you help me please?

Thank you very much!
