Hello everyone.

I recently set up a 3 node cluster with Ceph storage. Since the cluster was already running with local storage, I tried a 'rolling' deployment of Ceph. Meaning I reinstalled Proxmox one node at a time, then re-add that node to the cluster, install Ceph, then gradually moved VM disks over.

This worked great and my cluster is now running all my VMs with Ceph.

However, it seems Ceph has created an

What is this

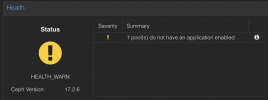

I have attached two screenshots. Hope someone can shed some light on this.

Thanks!

I recently set up a 3-node cluster with Ceph storage. Since the cluster was already running with local storage, I tried a 'rolling' deployment of Ceph. That is, I reinstalled Proxmox one node at a time, re-added each node to the cluster, installed Ceph on it, and then gradually moved the VM disks over.

This worked great and my cluster is now running all my VMs with Ceph.

However, it seems Ceph has created a .mgr pool that I don't know about. It is complaining that there is no application enabled on the pool:

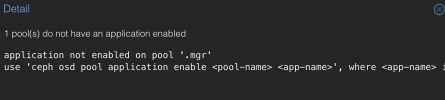

Code:

application not enabled on pool '.mgr'

use 'ceph osd pool application enable <pool-name> <app-name>', where <app-name> is 'cephfs', 'rbd', 'rgw', or freeform for custom applications.

What is this .mgr pool? Do I need to assign an application? Which one? Should I delete it? So many questions.

I have attached two screenshots. Hope someone can shed some light on this.

Thanks!