So I'm in the process of consolidating two ESXi hosts (a Dell R710 running ESXi 6.5 and an R430 running ESXi 7) down to a single Proxmox host on an R740.

I'm moving my least used machines first in order to nail the process down.

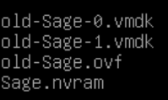

I'm using the ESXi web interface to export the machine, and the export completes without error.

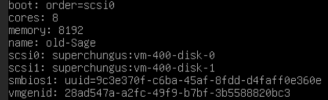

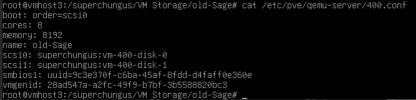

The import command I use is:

qm importovf 400 <machine>.ovf <filesystem name>

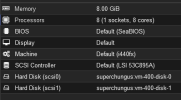

The import worked without error; however, when I start the machine it dies... badly:

Any suggestions as to how I can get this to properly function?

Thanks all!

g.
