Ceph librbd vs krbd

- Thread starter ermanishchawla


librbd and krbd are just two different clients; the Ceph pool does not much care which one you use to access an RBD image.

In Proxmox VE we use librbd for VMs by default and krbd for Containers.

But you can also enforce the use of the kernel RBD driver for VMs if you set "krbd" on in the PVE storage configuration of a pool.

Differences between the two clients are:

librbd adopts newer storage features more quickly, but with the current 5.4-based kernel you get all the good features in the kernel client too.

It's said that KRBD is often a bit faster than librbd, but in my experience librbd isn't slow either, and you'd need quite a fast Ceph cluster to see a difference.
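To check which RBD storages currently have the "krbd" option set, something like the following rough sketch can help. It assumes the usual layout of /etc/pve/storage.cfg (a `type: name` header followed by indented `key value` lines); the storage and pool names in the sample are made up, and which values count as "on" is an assumption of this sketch, not taken from the PVE parser.

```python
# Sample text in the layout of /etc/pve/storage.cfg.
# Storage and pool names are hypothetical.
SAMPLE_CFG = """\
rbd: ceph-vm
    content images
    krbd 1
    pool vmpool

rbd: ceph-ct
    content rootdir
    pool ctpool

dir: local
    path /var/lib/vz
    content iso,backup
"""


def krbd_by_storage(cfg_text):
    """Map each rbd storage ID to True/False depending on its krbd option."""
    result = {}
    current = None  # name of the rbd section being read, if any
    for line in cfg_text.splitlines():
        if not line.strip():
            continue
        if not line[0].isspace():
            # Section header, e.g. "rbd: ceph-vm"
            stype, _, name = line.partition(":")
            current = name.strip() if stype.strip() == "rbd" else None
            if current is not None:
                result[current] = False  # off unless the option appears
        elif current is not None:
            parts = line.split()
            if parts[0] == "krbd":
                # Assumption: a bare "krbd" or a truthy value means enabled.
                result[current] = len(parts) == 1 or parts[1] in ("1", "on", "yes", "true")
    return result


print(krbd_by_storage(SAMPLE_CFG))
```

On a real node you would pass in the contents of the storage configuration file instead of the sample string.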


Also did the switch. Nothing to complain about so far. I guess krbd is the way to go.

Hi All,

We just experienced a bug that caused us to switch to krbd.

Is there a good reason to switch back once the bug is resolved? It seems that krbd might be faster and I don't see any features that I'm giving up.

best,

James

Can someone explain whether I should use librbd or krbd for my VM setup when creating a Ceph pool?

Hi,

We ran a benchmark on a Windows Server VM running MySQL with a huge database, using a very heavy query (join, left join, group by, etc.).

Here the results:

test n.1 , RBD, queryA ==> 8:34

test n.2 , RBD, queryA ==> 4:12

test n.3 , RBD, queryA ==> 4:03

test n.4 , RBD, queryA ==> 4:24

test n.1 , KRBD, queryA ==> 10:23

test n.2 , KRBD, queryA ==> 6:41

test n.3 , KRBD, queryA ==> 6:29

test n.4 , KRBD, queryA ==> 6:32

The VM has every kind of optimization:

Cache: Write back

Discard: yes

IO thread: yes

SSD emulation: yes

Hard Disk: iSCSI
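For anyone who wants to compare the two sets of timings above, here is a small sketch that averages them. It assumes the values are minutes:seconds and that "RBD" means librbd; neither is stated in the post. It also reports a "warm" mean that drops the first run of each series, since the first run is much slower in both cases.

```python
from statistics import mean

# Timings copied from the post; read here as minutes:seconds (an assumption).
# "RBD" in the post is taken to mean librbd (also an assumption).
RUNS = {
    "librbd": ["8:34", "4:12", "4:03", "4:24"],
    "krbd": ["10:23", "6:41", "6:29", "6:32"],
}


def to_seconds(stamp):
    """Convert an 'M:SS' string to a number of seconds."""
    minutes, seconds = stamp.split(":")
    return int(minutes) * 60 + int(seconds)


def summarize(stamps):
    """Return the mean over all runs and over all but the first run."""
    secs = [to_seconds(s) for s in stamps]
    return {"all_runs": mean(secs), "warm_runs": mean(secs[1:])}


summary = {client: summarize(stamps) for client, stamps in RUNS.items()}
for client, stats in summary.items():
    print(f"{client}: mean {stats['all_runs']:.1f}s, warm mean {stats['warm_runs']:.1f}s")

# Ratio of the warm means: values above 1 mean krbd took longer in this test.
ratio = summary["krbd"]["warm_runs"] / summary["librbd"]["warm_runs"]
print(f"krbd / librbd (warm runs): {ratio:.2f}")
```

With only four runs per client this is a rough comparison, not a statistically meaningful one.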

"Here the results:"

Can you provide the actual test syntax? (Not sure if the result is in ms/q or q/s.)

"Hard Disk: iSCSI"

???

Trying out the different Async IO options for the VM disks would also be good, i.e. io_uring vs. native vs. threads.

Can I switch to KRBD when VMs are currently running?

Yes, you can, without any impact. To get the VMs to use KRBD you need to restart them (restart via Proxmox/QEMU, not from within the VM), as the IO process has to be restarted once.


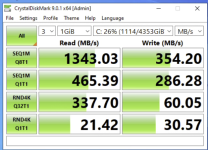

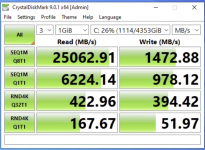

Yes, in my case too, the performance increase was massive, although I believe that Crystaldiskmark with only 1gb chunk running on a VM is not very indicative since it uses the PVE node cache.Switched to KRBD and the difference is massive lol. Here's the before and after, writeback cache was enabled for both runs.

I am inclined to think that the actual performance is not so different between KRBD on or off.

Anyway, I have been using KRBD for months, and so far I have not noticed any problems.