Hi there! I'm brand new to this and trying to figure out how to set up my first homelab. My first goal is a home media server; after that, I want to run some Linux instances. I'm working on getting Proxmox installed and set up, but I think I'm making some mistakes and could use some help. I'm not sure I even need all of this, but I'd like to have it available unless it really isn't needed. My immediate objective is to have Proxmox run Jellyfin, with my NAS as the storage it plays media from, streaming to my TV. I'm not going to use it on other devices for now, and I don't want any access to it from outside my home network. However, I will likely want to use the GPU for other systems eventually.

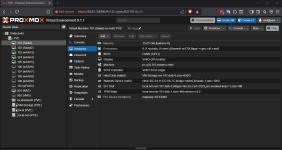

I'm installing Proxmox from a USB stick created with Rufus, on the following system:

- i7-10700k

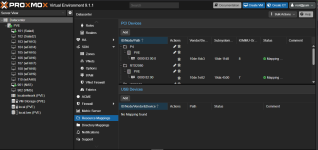

- NVidia GeForce RTX 3070 Ti

- 32GB DDR4 RAM

- 2TB M.2 NVMe SSD (Boot Drive)

- 2TB 3.5" 7200 RPM HDD

- MSI Z490 Motherboard

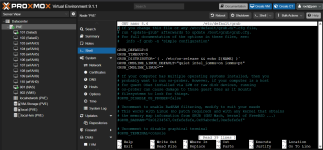

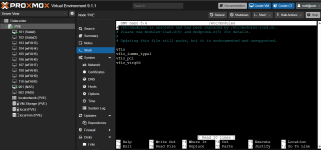

Early in the boot log, I get this message:

```
[0.140478] x2apic: IRQ remapping doesn't support X2APIC mode
```
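The `x2apic` line is about interrupt remapping, which is part of the IOMMU (VT-d on an Intel board like this one). A minimal sketch for pulling the related kernel messages out of the log, assuming a root shell on the Proxmox host; the function name is hypothetical, not an existing tool:

```shell
# Hypothetical helper: print kernel log lines that mention DMAR (Intel VT-d),
# the IOMMU, or interrupt remapping. Reading dmesg may require root.
show_remapping_status() {
  # grep exits non-zero when nothing matches, so report that case explicitly
  if ! dmesg | grep -i -e 'DMAR' -e 'IOMMU' -e 'remapping'; then
    echo "no DMAR/IOMMU/remapping messages found"
  fi
}
```

Paste or source the function, then run `show_remapping_status`. If no `DMAR` lines show up at all, VT-d may be switched off in the firmware settings; treat that as something to check, not a diagnosis.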

Here are the steps I've taken thus far:

`nano /etc/default/grub`

```
GRUB_CMDLINE_LINUX_DEFAULT="quiet intel_iommu=on iommu=pt pcie_acs_override=downstream,multifunction nofb nomodeset video=vesafb:off,efifb:off"
```

Run `update-grub`
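After `update-grub` and a reboot, the running kernel's command line should contain the new options. A sketch that checks for two of them and lists IOMMU groups, assuming the host boots through GRUB (an install booting with systemd-boot reads its command line from `/etc/kernel/cmdline` instead, applied with `proxmox-boot-tool refresh`). All function names here are hypothetical:

```shell
# Hypothetical helper: succeed if the space-separated command line in $1
# contains the exact parameter $2 (for example intel_iommu=on).
has_kernel_param() {
  case " $1 " in
    *" $2 "*) return 0 ;;
    *) return 1 ;;
  esac
}

# Compare two of the options from GRUB_CMDLINE_LINUX_DEFAULT with the
# command line the running kernel actually booted with.
check_boot_params() {
  cmdline=$(cat /proc/cmdline)
  for p in intel_iommu=on iommu=pt; do
    if has_kernel_param "$cmdline" "$p"; then
      echo "present: $p"
    else
      echo "MISSING: $p"
    fi
  done
}

# List every PCI function with its IOMMU group; prints nothing when the
# groups directory is empty or missing (IOMMU not active).
list_iommu_groups() {
  for dev in /sys/kernel/iommu_groups/*/devices/*; do
    [ -e "$dev" ] || continue   # unmatched glob: no groups exist
    group=${dev#/sys/kernel/iommu_groups/}
    group=${group%%/*}
    printf 'IOMMU group %s: ' "$group"
    lspci -nns "${dev##*/}"
  done
}
```

Run `check_boot_params`, then `list_iommu_groups`. For passthrough, every device in the GPU's group has to go to the VM together, so ideally that group holds only the GPU and its audio function.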

`nano /etc/modules`

```
vfio
vfio_iommu_type1
vfio_pci
vfio_virqfd
```

`nano /etc/modprobe.d/iommu_unsafe_interrupts.conf`

```
options vfio_iommu_type1 allow_unsafe_interrupts=1
```

`nano /etc/modprobe.d/kvm.conf`

```
options kvm ignore_msrs=1
```

`nano /etc/modprobe.d/blacklist.conf`

```
blacklist radeon
blacklist nouveau
blacklist nvidia
blacklist nvidiafb
```

`lspci -v` (used to capture the GPU numbers)
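`lspci -v` prints a lot for every device. A sketch that narrows the list to NVIDIA functions (the GPU and its HDMI audio), one line each with the bus address and the `[vendor:device]` pair; it assumes `lspci` from pciutils is available, and the function name is hypothetical:

```shell
# Hypothetical helper: show only NVIDIA PCI functions.
# -nn prints both device names and [vendor:device] IDs; 10de is NVIDIA's vendor ID.
list_nvidia_functions() {
  lspci -nn | grep -i '\[10de:'
}
```

The first column of each line is the bus address to use with `lspci -n -s`.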

`lspci -n -s`
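The `ids=` option in `vfio.conf` takes a comma-separated list of `vendor:device` pairs, which `lspci -n` prints in its third column. A sketch that builds that list from `lspci -n` output on stdin, so the result can be compared with the IDs already in `vfio.conf`; the function name is hypothetical:

```shell
# Hypothetical helper: read `lspci -n` lines such as
#   01:00.0 0300: 10de:2208 (rev a1)
# and print the vendor:device IDs joined by commas.
vfio_ids_from_lspci() {
  awk '{ print $3 }' | paste -sd, -
}
```

Usage, with `01:00` as a placeholder for the address `lspci -v` reported: `lspci -n -s 01:00 | vfio_ids_from_lspci`. Leaving off the function number after the slot matches every function of the card.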

`nano /etc/modprobe.d/vfio.conf`

```
options vfio-pci ids=10de:2208,10de:1aef disable_vga=1
```

`update-initramfs -u`
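After `update-initramfs -u` and a reboot, `lspci -nnk -s <address>` reports which driver claimed each function; for passthrough it should read `vfio-pci` rather than `nouveau` or `nvidia`. A sketch that checks this from `lspci -nnk` text on stdin, plus a reader for the module parameters set in the `modprobe.d` files; both function names are hypothetical:

```shell
# Hypothetical helper: read `lspci -nnk` output on stdin, print the driver in
# use per device, and exit non-zero if any device is unbound or not vfio-pci.
check_vfio_driver() {
  awk '
    BEGIN { bad = 0 }
    /^[0-9a-fA-F]/ {
      if (dev != "" && !seen) { print dev ": no driver bound"; bad = 1 }
      dev = $1; seen = 0
    }
    /Kernel driver in use:/ {
      seen = 1; print dev ": " $NF
      if ($NF != "vfio-pci") bad = 1
    }
    END {
      if (dev == "") { print "no devices in input"; bad = 1 }
      else if (!seen) { print dev ": no driver bound"; bad = 1 }
      exit bad
    }
  '
}

# Hypothetical helper: print a module parameter from sysfs, e.g.
#   show_module_param /sys/module/kvm/parameters/ignore_msrs
# which should show Y if the kvm.conf option took effect.
show_module_param() {
  if [ -r "$1" ]; then
    printf '%s = %s\n' "$1" "$(cat "$1")"
  else
    printf '%s: not readable (module not loaded?)\n' "$1"
    return 1
  fi
}
```

Usage, with a placeholder address: `lspci -nnk -s 01:00 | check_vfio_driver`.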

If I royally screwed something up here, I can reinstall and start fresh, since I have nothing running yet. Sadly, I haven't made it past this part.