Hello,

I’ve been trying for two weeks to set up a working solution for the following configuration using PVE 9.1.1. I’ve read dozens of articles and reinstalled the test environment dozens of times. None of the articles describes a setup with two SANs; they only cover configurations with multiple internal hard drives.

We have two PVE hosts with plenty of RAM and cores, a quorum machine, and two SANs with SSDs.

The PVE hosts are identical, as are the SANs. I’m running the PVE hosts in HA mode, which isn’t a problem.

The difficulty lies in the storage connection, with the following issues.

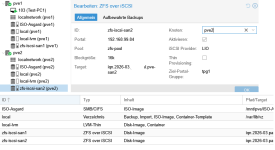

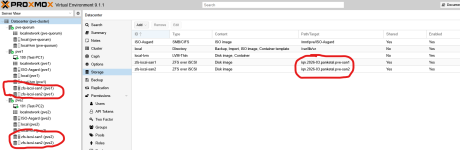

ZFS over iSCSI seems to be extremely prone to errors. Even though both nodes and SANs are configured identically, different errors occur. The main problem is that after an HA migration of a VM, the VM can no longer access the hard drive, even though both SANs are mounted on each node. The ZFS pool name is identical on both SANs. I have tried solutions using NFS/CIFS, iSCSI/LVM, and ZFS over iSCSI. According to the storage type table (https://pve.proxmox.com/pve-docs/chapter-pvesm.html), these are at least the storage types with snapshot support that should work.

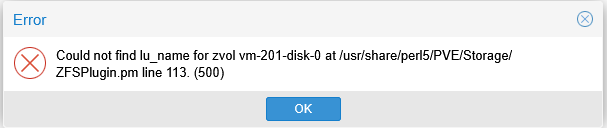

ZFS is the only system that is supposed to support replication between SANs. However, with ZFS over iSCSI, you cannot operate these mounted SANs as a RAID or cluster within Proxmox VE. When I simulate a node failure, the test VM moves, and I can neither move nor delete the VM disk (see image).

Is it better to combine the SANs into a cluster outside of Proxmox and mount only one storage?

What is the most functional setup for two nodes and two SANs?

Thank you very much, Maximus
