Hi @McJameson,

the partitioning of your disk looks like what ZFS does. What do

Going to

the partitioning of your disk looks like what ZFS does. What do

zpool list, zfs list and zpool import say? Your boot log also shows that ZFS is used.Going to

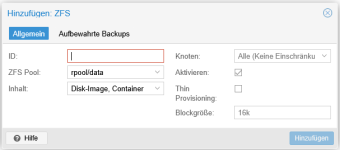

Datacenter > Storage > Add > ZFS and selecting rpool/data might already solve part of your issue.
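In case it helps, here is a minimal sketch for collecting the output of the three commands in one go, so it can be pasted into a reply. The helper name `run_diag` is made up for this example, and it assumes the ZFS command-line tools are installed and that you run it as root on the host. All three calls are read-only; `zpool import` without arguments only lists pools that could be imported and does not import anything.

```shell
#!/bin/sh
# Hypothetical helper (not part of ZFS): print a header, then run the
# given command, or report that the tool is missing.
run_diag() {
    echo "== $* =="
    if command -v "$1" >/dev/null 2>&1; then
        "$@" 2>&1 || echo "(command exited with an error)"
    else
        echo "($1 not found; are the ZFS tools installed?)"
    fi
}

run_diag zpool list     # pools that are currently imported
run_diag zfs list       # datasets and volumes inside those pools
run_diag zpool import   # pools visible on disk but not yet imported
```

If `rpool` shows up under `zpool list` and `rpool/data` under `zfs list`, the pool is already imported and only the storage entry is likely missing.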