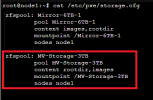

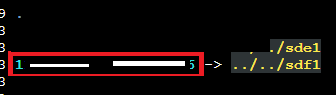

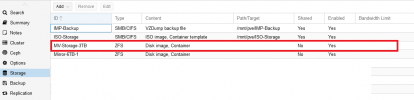

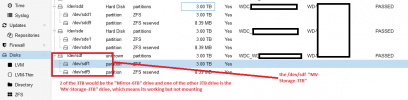

A previously working drive no longer mounts, automatically or otherwise, when Proxmox boots.

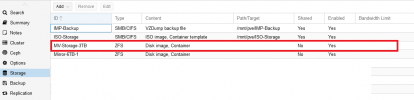

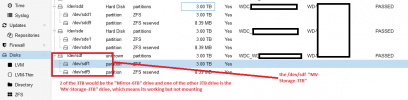

The drive is visible with `lsblk -f` but is not listed in the ZFS drives section. It was working fine yesterday. After rebooting the Proxmox VE server, I noticed that the drive no longer mounts, because the VM keeps failing to start.

How do I go about fixing this? Rebooting has not solved anything.

I have attached screenshots showing the issue with the ZFS "MV-Storage-3TB" drive.

Any help on this issue is much appreciated.

Thank You
