Latest activity

-

a.woll replied to the thread [SOLVED] Nach Update auf v9.0.7 UI zerschossen. Ich bitte um Hilfe. I hope the screenshot helps: I'll try it in an incognito window, but I fear it could be something more deep-seated, because I have just also tried on that page to adjust the DNS config so that it points to the local...

-

a.woll reacted to ChrisTG74's post in the thread [SOLVED] Nach Update auf v9.0.7 UI zerschossen. Ich bitte um Hilfe. with Like.

Are any errors showing up in the browser's network trace? (Firefox: Shift + F5, then the "Network" tab and reload the page; in Chrome you have to open the "Developer Tools" via the menu.) That is, 400- or 500-series errors? When in doubt, maybe it is simply...

-

Bob@22 replied to the thread ASM2812 based dual NVME PCIe card and passthrough. ASM2812 is not a true PCIe switch. It is a lane-multiplexer, not a switch fabric. It presents two NVMe devices to the OS, but internally they share a single PCIe endpoint. IOMMU groups get confused. Passthrough exposes the same upstream device twice...

-

carlitos009 posted the thread PROXMOX 8.3.0 hangs for no apparent reason in Proxmox VE: Installation and configuration. Four days ago my PVE was unresponsive. I noticed because the Immich container in my virtualized TrueNAS was not responding. I was unable to connect to the GUI, and SSH was the same. I figured it was some power issue, but my secondary PVE and Synology were...

-

vesalius replied to the thread [SOLVED] intel x553 SFP+ ixgbe no go on PVE8. Sorry, my Intel SFP+ has continued to work natively from kernel 6.8.12 through the current 7.0. No manual or DKMS ixgbe build has been needed since then either. Have you triple-checked the MikroTik switch port settings to make sure that it's not a network...

-

bluesite replied to the thread Probleme mit apcupsd. Well, I am currently running kernel 7.0.0-3-pve and have three APC UPSes on the server; two of them are there for other computers or devices. All three run here without problems on this kernel. I also have apcupsd 3.14.14, two Back-UPS XS 950U and one Back-UPS...

-

d.oishi replied to the thread Error: Login failed. Please try again. Since I do not know the exact state of your cluster, I cannot advise a safe procedure. However, I believe the following documentation may be helpful. I recommend that you read the cautions and notes in the documentation carefully before...

-

Johannes S replied to the thread Nesting - Proxmox within Proxmox - Complete private cloud. Incus and Proxmox VE are both basically built around QEMU/KVM for VMs and LXC for containers. So I would expect that both have similar capabilities; in fact, nested virtualization and containers work on both with similar caveats (nested...

-

Johannes S reacted to leesteken's post in the thread Nesting - Proxmox within Proxmox - Complete private cloud with Like.

Can you share the blog? I wonder what they were talking about.

-

Johannes S reacted to leesteken's post in the thread Nesting - Proxmox within Proxmox - Complete private cloud with Like.

Where did you read that? AFAIK, there is nothing special about nesting VMs on Proxmox VE: https://pve.proxmox.com/wiki/Nested_Virtualization

-

Firuz replied to the thread Error: Login failed. Please try again. I have already determined that the problem is a remnant of the old cluster. Now I need to figure out how to remove it in the safest way. Since there are currently a lot of VMs on the server, the procedure should be as risk-free as possible.

-

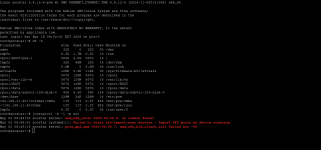

Weltherrscher replied to the thread Opt-in Linux 7.0 Kernel for Proxmox VE 9 available on test and no-subscription. Since the upgrade to kernel 7.0 and PVE 9.1.9, my VMs randomly hard shut down (that is, they just switch off abruptly). E.g., in my mail server VM I see the following errors in dmesg: [ 28.293199] ata9.00: Read log 0x10 page 0x00 failed, Emask 0x1 [...

-

nickwalt replied to the thread Nesting - Proxmox within Proxmox - Complete private cloud. I read it on a blog and couldn't see how this was possible, so I had to ask. Thanks. It looks like running LXC in a nested hypervisor will be performant enough for my requirements.

-

Hotdog reacted to Stubennerd's post in the thread Opt-in Linux 7.0 Kernel for Proxmox VE 9 available on test and no-subscription with Like.

Can you please put the outputs into code blocks? It's, at least for me, really exhausting to read.

-

UdoB reacted to Mudgee Host's post in the thread Proxmox onto of Debian install - instructions to engineers with Like.

OK, I think I might be pushing harder for a Proxmox ISO install.

-

d.oishi reacted to uzumo's post in the thread Supported way for a NAS across multiple nodes that supports thin disks, snapshots, live migrations, move disks, and HA? with Like.

Because it is a cluster file system like VMFS, multiple nodes can access the same disk. Since there is no such file system, multiple nodes cannot access the same disk. If that is a requirement, you can simply continue using VMware. *I have...

-

d.oishi reacted to DerekG's post in the thread Supported way for a NAS across multiple nodes that supports thin disks, snapshots, live migrations, move disks, and HA? with Like.

The greatest mistake one can make is to try and forklift VMware into a Proxmox environment. You will forever be chasing your tail trying to get Proxmox to 'work' the same as VMware. Start the process with a clean Proxmox install and then look...

-

beisser reacted to Stubennerd's post in the thread Opt-in Linux 7.0 Kernel for Proxmox VE 9 available on test and no-subscription with Like.

Can you please put the outputs into code blocks? It's, at least for me, really exhausting to read.

-

Johannes S reacted to Kingneutron's post in the thread Proxmox (as a company) - what the HELL are you doing? Kernel update to 7 broke networking IN A VM with Like.

FYI I upgraded my PBS VMs and a secondary PVE server today with no issues. Full backups beforehand. The problematic PBS VM was upgraded in-place from PBS v3 to v4 and apparently did not have the proxmox-network-interface-pinning .deb package...

-

Johannes S reacted to omavoss's post in the thread [SOLVED] PBS hat Root-Passwort vergessen with Like.

Okay, I found my mistake. There was an LXC on another physical Proxmox machine with the same IP address as my PBS, although that LXC was listening on a different port. I have corrected that, and now the PBS GUI is running and...