Latest activity

-

ProxmoxSecurityAdvisory replied to the thread Proxmox Virtual Environment - Security Advisories. Subject: PSA-2026-00012-1: Corosync: DoS via malformed packets in unencrypted clusters. Advisory date: 2026-04-15. Packages: corosync. Details: Two flaws were found in Corosync, the clustering stack backing Proxmox VE's clustering feature. An...

-

RichieX replied to the thread [SOLVED] Veeam backup not possible "Hot Add". The error does in fact occur only in combination with Application Aware. According to Veeam Support, user@domain.local is used instead of .\administrator or domain\administrator. Veeam provided us with a hotfix. Unfortunately not for...

-

spintike posted the thread [SOLVED] Cluster based vnc / console error - Version 2 in Proxmox VE: Installation and configuration. Hello community! First of all, I have to thank fmgoodman again for writing such a detailed explanation of his error, described in his post. I have found that I have a problem very similar to the one in his cluster. Having five hosts in a cluster...

-

e-Quest reacted to MarkTheSpark's post in the thread [SOLVED] Installing PVE on an iSCSI boot LUN with multipathing with Like.

Hello All, I've spent far too much time getting PVE installed in our Cisco UCS blade environment. So in the hope that it helps others, I will share my installation instructions. Thanks to those who provided little nuggets of information to help...

-

e-Quest replied to the thread [SOLVED] Installing PVE on an iSCSI boot LUN with multipathing. How stable is this configuration one year later? Any challenges with Proxmox maintenance and updates? We are running the same environment and facing the same problem with the lack of natively supported iSCSI boot.

-

Klug replied to the thread [SOLVED] Sync to two PBS, different space used. It turned out that Prune/GC also ran during the night, and the space used went from 25 to 13 TB (dedup: 4.43).

-

Kevin Krause replied to the thread ToughArmor MB902SPR-B R1 is not detected. I think I can rule out the cables and the SSDs; I have already tried all of that in all sorts of combinations. I also tried the SSDs individually, and there seem to be no problems there. As for the firmware, I wouldn't know right now - if it...

-

falzo replied to the thread [SOLVED] CT backs up fine but all VM's fail. Just to confirm, as I also played around with mergerFS: cache.files=off will break qmp; you need at least cache.files=partial. Thanks @naffhouse for reporting back, this actually helped a lot.

-

falzo reacted to naffhouse's post in the thread [SOLVED] CT backs up fine but all VM's fail with Like.

For anyone wondering: under my mergerFS mount options, I had to use the option cache.files=partial in /etc/fstab for the mergerFS mount. Now it works successfully.

-

Kodey replied to the thread [SOLVED] Unknown VEN_1AF4&DEV_1057 device. This worked for me.

-

Hi, it seems that is it. For some reason it wasn't automatically detected, and I had to update the driver with the "have disk" option to get it installed. I "suppose" that is it because if I try to load a different driver, the system warns me...

-

anowak replied to the thread Ceph with 2 Cluster Networks. Unfortunately I can't get it to work with two networks, as it won't accept it.

-

Piertonio posted the thread [HELP] Windows Server 2016 VM (migrated from VMware) - CRITICAL_PROCESS_DIED after restore from PBS and Veeam in Proxmox VE: Installation and configuration. Background / VM history: The affected VM has a complex migration history: Originally a physical Windows Server 2016 server (HP ML350 Gen10, Xeon Silver) virtualized using VMware Converter onto a Dell PowerEdge with E5-2660 V4 CPUs...

-

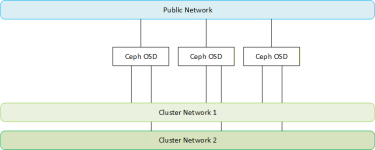

abamalu replied to the thread Ceph with 2 Cluster Networks. With two cluster networks (like cluster_network = 10.10.10.0/24, 10.10.20.0/24, but ensure that the two networks are separated), you avoid the single-stream limit of LACP hashing. You should also have an advantage for replication because there...
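
One way to sanity-check the "ensure that the two networks are separated" advice at the addressing level is to test the two CIDR ranges for overlap. This is a minimal Python sketch using the standard `ipaddress` module; the helper name `networks_are_separate` is hypothetical, and the subnets are the example values from the reply. It only checks address ranges, not physical or VLAN separation.

```python
import ipaddress


def networks_are_separate(cidr_a: str, cidr_b: str) -> bool:
    """Return True when the two CIDR ranges share no addresses."""
    net_a = ipaddress.ip_network(cidr_a)
    net_b = ipaddress.ip_network(cidr_b)
    return not net_a.overlaps(net_b)


if __name__ == "__main__":
    # Example subnets taken from the forum reply.
    print(networks_are_separate("10.10.10.0/24", "10.10.20.0/24"))
```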

-

woma replied to the thread Periodic power consumption spikes on Proxmox VE 9.1.6 (Dell OptiPlex 7050) – normal behavior? Periodic spikes are about 45 sec apart (~ 27 events per 10 minutes). Graphs only have a resolution of 60 sec if you zoom in. Because of undersampling, you cannot find something that repeats with a 45 sec period. Of course from time to time a power...
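
The undersampling point can be illustrated with a small Python sketch: it counts how many events with a fixed period fall into each fixed-width graph bin. The function name `events_per_bin` and the assumption that the first spike lands exactly at t = 0 are illustrative only; real spikes and graph sampling will not line up this neatly.

```python
def events_per_bin(period_s: int, bin_s: int, n_bins: int) -> list[int]:
    """Count periodic events (first one at t = 0) per fixed-width time bin."""
    counts = [0] * n_bins
    t = 0
    while t < bin_s * n_bins:
        counts[t // bin_s] += 1
        t += period_s
    return counts


if __name__ == "__main__":
    # 45-second spikes viewed through 60-second bins.
    print(events_per_bin(45, 60, 6))
```

With these inputs the bins hold 2, 1, 1, 2, 1, 1 events, so a 60-second graph shows a pattern that repeats every 180 seconds rather than the underlying 45-second period.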

-

anowak replied to the thread Ceph with 2 Cluster Networks. Sorry, that was a typo. I was just giving an example and stuffed up the addressing... The public network is 192.168.20.0/24. Just going through the doco again - is that best practice, or is a bond a better idea?

-

abamalu replied to the thread Ceph with 2 Cluster Networks. Ahh, I get it. But you mention 10.10.10.0/24, 10.10.20.0/24 as your cluster network. Could it be that you have a typo?

-

pflirsich posted the thread [SOLVED] systemd-timedated starting every minute filling up the journal in Proxmox VE: Installation and configuration. On one of our three nodes I observe the following: Apr 14 10:12:46 proxmox3 dbus-daemon[3026]: [system] Activating via systemd: service name='org.freedesktop.timedate1' unit='dbus-org.freedesktop.timedate1.service' requested by ':1.457' (uid=33...

-

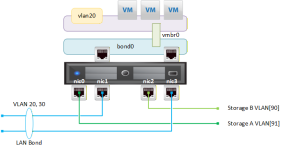

anowak replied to the thread Ceph with 2 Cluster Networks. Hi abamalu, yes, I get that you need a public network, which is the front end, but what I want is to configure two cluster networks. My systems have four NICs: two in a bond for management/front-end traffic and two on separate networks for back-end...

-

abamalu replied to the thread Ceph with 2 Cluster Networks. Hello @anowak, to split the traffic, you have to set cluster_network and public_network:

[global]
...
cluster_network = 10.10.10.0/24
public_network = 10.10.20.0/24
...

https://pve.proxmox.com/pve-docs/pveceph.1.html#pve_ceph_install_wizard
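
To confirm which networks a configuration like the one in this reply actually declares, the `[global]` section can be read back programmatically. The following is a rough Python sketch using the standard `configparser` module. It assumes the file is plain INI with underscore-style keys exactly as quoted in the reply (a real ceph.conf may use other spellings or syntax this parser does not handle), and the helper name `read_ceph_networks` is hypothetical.

```python
import configparser

# Sample text mirroring the two settings quoted in the reply;
# the elided "..." lines are left out because they are not valid INI.
SAMPLE_CONF = """
[global]
cluster_network = 10.10.10.0/24
public_network = 10.10.20.0/24
"""


def read_ceph_networks(conf_text: str) -> dict[str, list[str]]:
    """Return the comma-separated network lists from the [global] section."""
    parser = configparser.ConfigParser()
    parser.read_string(conf_text)
    section = parser["global"]
    result = {}
    for key in ("cluster_network", "public_network"):
        raw = section.get(key, fallback="")
        result[key] = [part.strip() for part in raw.split(",") if part.strip()]
    return result


if __name__ == "__main__":
    print(read_ceph_networks(SAMPLE_CONF))
```

Returning a list per key means a comma-separated value with more than one subnet is split into its individual entries.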