Latest activity

Stoiko Ivanov reacted to t.a.s's post in the thread [SOLVED] Ingress-connection issues with some remote mailservers with Like.

Hi @Stoiko Ivanov, thank you for your reply. You nailed it. Of course I was too dense to enable TLS and now that it's on, the mails go through in both directions... I'm facepalming real hard right now. I have a handful of other servers in my logs...

t.a.s reacted to Onslow's post in the thread [SOLVED] Ingress-connection issues with some remote mailservers with Like.

Hi, @t.a.s https://www.postfix.org/DEBUG_README.html#debug_peer Verbose logging for specific SMTP connections In /etc/postfix/main.cf, list the remote site name or address in the debug_peer_list parameter. For example, in order to make the...

t.a.s reacted to Stoiko Ivanov's post in the thread [SOLVED] Ingress-connection issues with some remote mailservers with Like.

did you restore a backup from your old gateway - or set it up freshly? one guess based on the debug-output: - your PMG does not seem to have TLS enabled - maybe the sending servers are configured to not send mails over the internet without TLS...

t.a.s replied to the thread [SOLVED] Ingress-connection issues with some remote mailservers. Hi @Stoiko Ivanov, thank you for your reply. You nailed it. Of course I was too dense to enable TLS and now that it's on, the mails go through in both directions... I'm facepalming real hard right now. I have a handful of other servers in my logs...

SteveITS replied to the thread SPF fail, but SPF is legit?. There's a record tester at https://www.kitterman.com/spf/validate.html. There are rules, notably the 10-DNS-lookup limit, that catch people over time, especially if using includes.
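To illustrate the 10-DNS-lookup limit mentioned above, here is a rough Python sketch that counts the lookup-triggering terms in a single SPF record string. It assumes the record has already been fetched and only looks at the top level: every `include` or `redirect` target adds its own lookups on top, so a real check has to resolve those recursively (which the tester linked above does). The function name `count_lookup_terms` and the example domains are made up for illustration.

```python
# Terms that cause a DNS lookup during SPF evaluation (RFC 7208, section 4.6.4):
# the mechanisms include, a, mx, ptr, exists and the redirect modifier.
LOOKUP_MECHANISMS = {"include", "a", "mx", "ptr", "exists"}
MAX_LOOKUPS = 10  # limit defined by the SPF specification


def count_lookup_terms(record: str) -> int:
    """Count lookup-triggering terms in one SPF record (top level only)."""
    count = 0
    for term in record.split()[1:]:  # skip the leading "v=spf1" tag
        term = term.lstrip("+-~?").lower()  # drop the qualifier, if any
        if term.startswith("redirect="):
            count += 1
            continue
        # "a:example.com/24" and "a/24" both reduce to the mechanism name "a"
        name = term.split(":", 1)[0].split("/", 1)[0]
        if name in LOOKUP_MECHANISMS:
            count += 1
    return count


if __name__ == "__main__":
    # Hypothetical record, not taken from the thread
    record = "v=spf1 a mx include:_spf.example.com ip4:192.0.2.0/24 -all"
    used = count_lookup_terms(record)
    print(f"{used} of {MAX_LOOKUPS} lookups used at the top level")
```

A record that looks fine at the top level can still exceed the limit once its includes are expanded, which is how the limit tends to catch people over time.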

SteveITS replied to the thread [SOLVED] Sync to two PBS, different space used. Pruning or syncing? There is a "transfer last ___" in the Advanced settings of a sync job, to send only the last "n" backups of each VM/CT. Otherwise the sync will transfer all backups and then the GC and then pruning will take effect.

jaminmc reacted to UdoB's post in the thread What are these default root crontabs and what do they do? with Like.

Note that there may be additional systemd-timers, which are not visible in the classic crontab context. Run systemctl list-timers -a instead. You also did not mention user-specific crontabs, editable by everybody by crontab -e - including one...

Klug posted the thread [SOLVED] Sync to two PBS, different space used in Proxmox Backup: Installation and configuration. Hello all. We have a PVE cluster with about 35 TB used (Ceph), going well. This is backed up to a datastore on a PBS (12 HD in RAIDZ2 + special devices) that says it uses 35.64 TB (65 groups, 3906 snapshots, dedup is 34.69). Smoothly too. This...

andyB2018 replied to the thread Periodic power consumption spikes on Proxmox VE 9.1.6 (Dell OptiPlex 7050) – normal behavior?. The peaks you see in the graphs at 1 AM and 5 AM are the VM backup and then the backup inside HA to Google Drive. As far as I remember, I moved HA to another PVE and shut it down on the actual host. The consumption was the same (minus a few 0.5W for HA...

Hi, last Friday in the early morning a node rebooted on its own, but I don't know what triggered that. I checked the logs and saw the following lines: Memory failure: 0xca06f60: Sending SIGBUS to CPU 1/KVM:4190709 due to hardware memory corruption...

jaminmc replied to the thread Opt-in Linux 7.0 Kernel for Proxmox VE 9 available on test and no-subscription. Although this is the official Linux Kernel 7.0.0 release, it is still classified as beta since Ubuntu 26.04 has not yet been released. Proxmox leverages the Ubuntu kernel, enhanced with custom compile flags, built-in ZFS support, and patches...

Johannes S reacted to monkfish's post in the thread Opt-in Linux 7.0 Kernel for Proxmox VE 9 available on test and no-subscription with Like.

Here you go - in case you've not already found it this is courtesy of @uzumo in this thread...

Johannes S reacted to fiona's post in the thread Applying pve-qemu-kvm 10.2.1-1 may cause extremely high “I/O Delay” and extremely high “I/O pressure stalls”. (Patches in the test repository with Like.

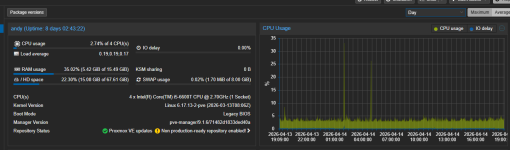

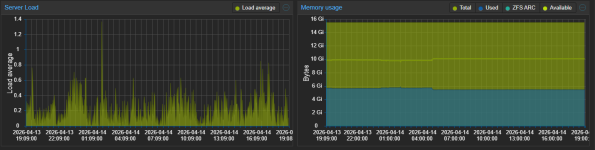

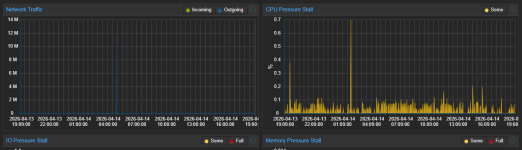

From the analysis until now, the IO pressure seems to be a cosmetic issue or rather accounting issue in the kernel. QEMU switched to using io_uring for event loops with QEMU 10.2. The issue appears in combination with IO threads, where a blocking...

Johannes S reacted to beisser's post in the thread Applying pve-qemu-kvm 10.2.1-1 may cause extremely high “I/O Delay” and extremely high “I/O pressure stalls”. (Patches in the test repository with Like.

It's like using Debian sid and then complaining about issues with it. According to his logic, Debian sid should be QC-tested since the repository is available to thousands. That's not how it works. test = bleeding-edge stuff with a decent chance...

Johannes S reacted to uzumo's post in the thread Applying pve-qemu-kvm 10.2.1-1 may cause extremely high “I/O Delay” and extremely high “I/O pressure stalls”. (Patches in the test repository with Like.

Those expectations arise precisely because you’ve actually used it and experienced its shortcomings firsthand. I understand your concerns, but ultimately, the use of the test repository is at your own risk.

Johannes S reacted to beisser's post in the thread Applying pve-qemu-kvm 10.2.1-1 may cause extremely high “I/O Delay” and extremely high “I/O pressure stalls”. (Patches in the test repository with Like.

I repeat, don't use the test repo for production. The developers state it's only for testing purposes and not for production use, as evidenced by my screenshot from their own wiki. You ignoring this is purely on you and on no one else. You could have run...

Johannes S reacted to beisser's post in the thread Applying pve-qemu-kvm 10.2.1-1 may cause extremely high “I/O Delay” and extremely high “I/O pressure stalls”. (Patches in the test repository with Like.

@djsami That's why you don't use the test repository in production. As the name implies, it is a repository for testing things. It's bleeding-edge stuff. If you need things to just work, use the enterprise repository, where only well tested releases...

Johannes S replied to the thread ToughArmor MB902SPR-B R1 wird nicht erkannt. I would test it with both variants (Ubuntu and Debian live CD), since if necessary you can also install Proxmox VE by first installing Debian and then Proxmox VE...

Johannes S reacted to celemine1gig's post in the thread ToughArmor MB902SPR-B R1 wird nicht erkannt with Like.

But the kernel sources are based on Ubuntu's (and not Debian's). In that respect, the test was not such a bad idea.

Uturn replied to the thread Opt-in Linux 7.0 Kernel for Proxmox VE 9 available on test and no-subscription. Yes thanks, I was aware of the naming issue, but was waiting for the final solution from @t.lamprecht. So pinning at least did the job for now. I expect a name change soon to get around that problem.