Latest activity

-

Jacek Noga reacted to dlasher's post in the thread Proxmox 9.x / Strix Halo / GPU Passthrough with Like.

OPTIONAL: If you don't want to blind copy the firmware over the top, or you might actually want to check if you're ALREADY on the latest firmware, use this script instead. #!/bin/bash # pve-firmware-sync.sh # 1. Pull latest firmware blobs...

-

nick-a replied to the thread Managing domains automatically.

The way we've done it for multiple cPanel servers, a large number of domains, etc., is to just make 2 API calls for relay domains and transports and then work out what changes need to be made, MX records to update and so on. Making use of the...

-

ciax replied to the thread LLMs on Proxmox with AMD AI CPUs (Strix): iGPU works, NPU currently does not.

A bit further along: the module amdxdna.ko could be compiled for the newer kernel 6.17.13-1-pve, but unfortunately it does not load and fails with the following error message: modprobe: ERROR: could not insert 'amdxdna': Key was rejected by service There is...

-

Taz-Matt replied to the thread Random IO Error - Windows Server 2025.

Since that moment (early December), no issues. If that can be of any use to someone... ext4 everywhere. Not benefiting from ZFS but I don't need it in my setup. Performance is excellent, absolutely no problems.

-

_gabriel replied to the thread Nested virtualization: Issues enabling Hyper-V in win server VM.

-hypervisor implies a performance penalty; use it as a last resort.

-

_gabriel replied to the thread Guest agent not running on Windows 11.

After you discover how it works under the hood, you will follow the recommended way: a local PBS, then a WAN PBS that remote-syncs from it. Give it a try; I bet there will be no more "QEMU guest agent stopped" with a local PBS.

-

bbgeek17 replied to the thread Shared ISCSI Storage VG's?.

There may be a slight advantage to having multiple LUNs and multiple targets from a queue depth perspective. However, that generally applies to very high performance storage with fast clients. Without knowing anything about your performance...

-

My solution was to define a new CPU model based on x86-64-v2-AES that also exposes AVX. New models can be defined cluster-wide here: /etc/pve/virtual-guest/cpu-models.conf Add this definition to the /etc/pve/virtual-guest/cpu-models.conf file...

-

Lugitsch IT replied to the thread [SOLVED] The current guest configuration does not support taking new snapshots.

That's right. I deleted the TPM.raw file and snapshots work now. Thanks.

-

ajezierski replied to the thread Shared ISCSI Storage VG's?.

Hi @bbgeek17, and thank you for responding. Your comment on why I created multiple LUNs is a good question. They are all on the same SAN and RAID/hybrid storage (EMC Unity 300). When I had it configured for a VMware environment, I had a...

-

Lugitsch IT reacted to Impact's post in the thread [SOLVED] The current guest configuration does not support taking new snapshots. with Like.

I'd say it's because of the .raw TPM disk. Don't use Directory if you can help it.

-

Lugitsch IT reacted to bbgeek17's post in the thread [SOLVED] The current guest configuration does not support taking new snapshots. with Like.

Hi @Lugitsch IT , welcome to the forum. Please open a new thread with the following information: - pveversion from each host - cat /etc/pve/storage.cfg in TEXT format using CODE tags from each node - qm config [vmid] in TEXT format using CODE...

-

Lugitsch IT reacted to bbgeek17's post in the thread [SOLVED] The current guest configuration does not support taking new snapshots. with Like.

@Impact is correct, one VM has local-lvm backing TPM disk, the other directory/raw. Raw file format does not support snapshot capability. Blockbridge : Ultra low latency all-NVME shared storage for Proxmox - https://www.blockbridge.com/proxmox

-

seechiller replied to the thread Proxmox won't complete the reboot.

I had the same problem too. At first, I thought it was due to the HBA, but that wasn't the case. iDRAC messages: A fatal error was detected on a component at bus 4 device 0 function 0. A fatal error was detected on a component at bus 4 device 0...

-

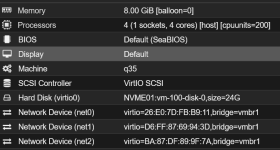

Beerman replied to the thread Pfsense issues after Linux 6.17.13-1-pve upgrade?.

My hardware: AMD EPYC 4545P 16-Core Processor, MC13-LE1 mainboard (Gigabyte), 10Gb/s LAN ports via Intel X710-AT2. Pfsense VM:

-

ciax replied to the thread LLMs on Proxmox with AMD AI CPUs (Strix): iGPU works, NPU currently does not.

Unfortunately, after a "dist-upgrade" on the host and installation (it was the same before, without the installation) of https://rocm.docs.amd.com/projects/radeon-ryzen/en/latest/docs/install/installryz/native_linux/install-ryzen.html (rocm 7.2), it remains in the kernel...

-

IsThisThingOn replied to the thread Guest agent not running on Windows 11.

I mean, it is the QEMU guest agent service having the issue, so I would argue it is not a Windows issue, but a QEMU on Windows issue. Just like the high DB load issue with VirtIO is not a Windows issue but a QEMU on Windows issue. Sure, that is...

-

DuelCore replied to the thread Severe system freeze with NFS on Proxmox 9 running kernel 6.14.8-2-pve when mounting NFS shares.

Dell T640 running Proxmox with Plex and TrueNAS VMs. Since updating to 9.1.6, Plex reading from TrueNAS over NFS has substantially improved. I do have a fairly complicated network setup using bonded network connections to different...

-

bbgeek17 replied to the thread Shared ISCSI Storage VG's?.

Hi @ajezierski, welcome to the forum. You need to create LVM using the Multipath DM device, not the underlying direct physical devices. So to answer your question: one VG per unique LUN. In your case 3 Volume groups (or you can combine your...

-

filipealvarez replied to the thread Install Ubuntu 24.04 fail during OS installation.

The problem has been resolved, we can install Ubuntu 24.04 online again!