Latest activity

-

fmaurer posted the thread [SOLVED] Proxmox SDN disables ospfd despite usage in /etc/frr/frr.conf.local in Proxmox VE: Networking and Firewall. Hello, we are using a relatively simple OSPF config similar to this: https://packetpushers.net/blog/proxmox-ceph-full-mesh-hci-cluster-w-dynamic-routing/ We use ospfd and ospf6d. /etc/frr/frr.conf.local looks like this: frr version 10.4.1 frr...

-

Pigi_102 replied to the thread [SOLVED] Issue with license ( from remote ) ?. I've verified that, after some time, everything now looks fine. It was probably slow to gather all the information from the servers. I'll mark this solved.

-

ze42 replied to the thread evpn? network segmentation?. The hypervisor already knows the subnets, and is configured per VRF/zone to have a gateway IP and route the traffic. I just want to add a static route to each VRF. I would much rather just add the static route somewhere, than have some tenant VM have...

-

Stoiko Ivanov replied to the thread Unable to parse message - part did not end with expected boundary. See https://forum.proxmox.com/threads/detected-undelivered-mail-to.180292/post-840426 for a bit more detail - else this change is due to: https://forum.proxmox.com/threads/proxmox-mail-gateway-security-advisories.149333/post-838667 and you can...

-

Stoiko Ivanov replied to the thread [SOLVED] detected undelivered mail to. The change is related to a potential security issue addressed in: https://forum.proxmox.com/threads/proxmox-mail-gateway-security-advisories.149333/post-838667 You can selectively allow broken mails by adding rules that match on the added...

-

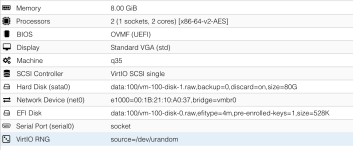

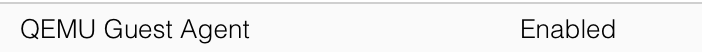

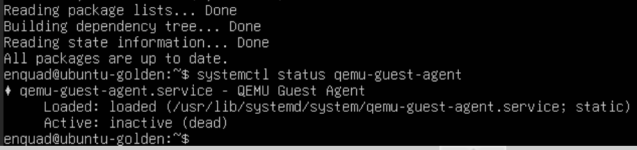

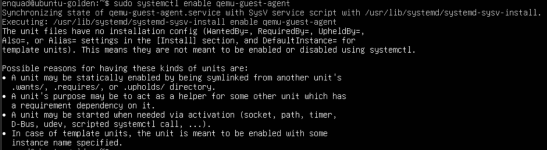

Enquad posted the thread [SOLVED] Qemu agent not connecting in Proxmox VE: Installation and configuration. Hello! Total noob here. Didn't find a solution on the forum (maybe user error). I have installed Ubuntu Server on Proxmox and for the life of me I can't sort out the qemu agent. Keeps failing. All packages are updated, agent installed on vm, enabled on...

-

LucasKer replied to the thread cloud-init template für bereitstellung in whmcs. For Ubuntu I used this one: https://cloud-images.ubuntu.com/noble/current/noble-server-cloudimg-amd64.img (is that the right one?) "root@ubuntu-cloudinit:~# systemctl restart sshd Failed to restart sshd.service: Unit sshd.service not found."...

-

Bu66as replied to the thread Proxmox für 500-1000 VMs. @Stefan123, if you use a dedicated Ceph cluster as storage, no Ceph OSDs run on the compute nodes. The local disks in the nodes then only need to hold the Proxmox OS and possibly ISOs/templates; SAS SSDs are entirely sufficient for that...

-

LucasKer reacted to Bu66as's post in the thread cloud-init template für bereitstellung in whmcs with Like.

@LucasKer, as @Johannes S already said, there is no central collection. Here are the direct links for your distros: Debian: https://cloud.debian.org/images/cloud/ (the latest/ subfolder in each case) Ubuntu: https://cloud-images.ubuntu.com/ (e.g...

-

hawkeye replied to the thread [SOLVED] detected undelivered mail to. Same problem here. For the past 2 days our mail gateway has been reporting a great many errors like ... message has ambiguous content - adding header ... said: 450 4.1.8 <double-bounce@mail4.localdomain>: Sender address rejected: Domain not found (in reply to...

-

fbassas replied to the thread Proxmox VE 9.1 Installation on Dell Poweredge R630. Hi everybody, and thanks for this useful topic. The workaround worked well for me, but it causes another kind of issue. We have a cluster of 3 nodes, and this happens on only one. We applied the fix, node works fine with...

-

ozgurerdogan replied to the thread random "api error (status = 400: client error (SendRequest))" pop-ups!. We have the same thing. I thought it was because PDM is behind NAT.

-

vmwombat replied to the thread [SOLVED] VM Name und Statistic Daten zeitweise nicht verfügbar. Thanks @Bua66as

-

bl1mp replied to the thread Proxmox für 500-1000 VMs. It depends. :) Specifically, on the network setup you end up running. The network speeds (bonding/LACP) for Ceph should match the I/O speeds of the disks (depending on the type and number of disks). If...

-

piotrpierzchala replied to the thread Space reclamation on thin provisioning after removing file from debian 13 with qcow2 disk. That's a nice summary, I agree, and reclaiming space works fine on NFS 4.2 with qcow2 EVEN without any qemu-img convert. It works until you move the disk to a different datastore. After moving the disk, qm monitor shows "driver": "zeroinit" (of moved disk)...

-

dakralex replied to the thread How to modify cgroup trees of LXCs and VMs?. Hi! There is no simple way to move VMs and CTs under a single root cgroup, as for VMs the code already depends on the VM's cgroups being under the /qemu.slice cgroup, and the /lxc cgroup is given by LXC itself, which has many intricacies that make...

-

Bu66as replied to the thread Proxmox für 500-1000 VMs. @Stefan123, if you use a dedicated Ceph cluster, no storage I/O for the VMs runs on the compute nodes; the local disks there serve only as boot/OS drives for Proxmox itself. SAS SSDs are easily enough for that, even SATA SSDs...

-

AntonJ replied to the thread conntrack not working when HA is enabled. Thank you, that was quick! When/if it becomes available for testing, I'll be able to try it in our test cluster.

-

ggoller replied to the thread Moving away from Full Mesh Network - Routed Setup. You should make sure to give static IPs to the interfaces attached to the switch, in the same subnet as the Ceph network (check the Ceph config file for this). Then just remove the frr config and restart frr to apply the change.

-

Stefan123 replied to the thread Proxmox für 500-1000 VMs. I actually do have one quick question. We are currently taking a rough look at hardware prices. If we had a central NVMe Ceph storage, is the speed of the disks in the nodes relevant? That is, could one also use SAS SSDs here instead of NVMe without...