Latest activity

-

x509 replied to the thread Is a 3-node Full Mesh Setup For Ceph and Corosync Good or Bad. @Nexces Again, thank you for the information. Your info has been very helpful.

-

x509 reacted to shanreich's post in the thread Is a 3-node Full Mesh Setup For Ceph and Corosync Good or Bad with Like.

Since I haven't seen it mentioned yet, there is also our Ceph Benchmark paper from late 2023 [1]

[1] https://www.proxmox.com/images/download/pve/docs/Proxmox-VE-Ceph-Benchmark-202312-rev0.pdf

-

Johannes S replied to the thread [SOLVED] Issue with license ( from remote ) ?. Then imho this warrants writing to office@proxmox.com to ask for help. I mean, you paid for support, so I see no reason why you should have to figure this out on your own ;) As far as I know, the person(s) behind that address handle everything subscription...

-

Johannes S replied to the thread High VM-EXIT and Host CPU usage on idle with Windows Server 2025. On modern systems there are also other CPU types you can try; you can determine the best possible option with https://github.com/credativ/ProxCLMC

-

Johannes S replied to the thread Problem mit Firebird. The following tool can be used to determine the best possible value for the processor type: https://github.com/credativ/ProxCLMC

-

x509 reacted to Nexces's post in the thread Is a 3-node Full Mesh Setup For Ceph and Corosync Good or Bad with Like.

@x509 corosync is on a 1G "private" link; there is no redundancy for the 25G links. A single-node failure is accepted, as the datacenter hosting those servers has 24h service and spare parts, so a faulty node will be back up in a matter of minutes or hours. There is a total of 70 VMs...

-

gfngfn256 replied to the thread Space reclamation on thin provisioning after removing file from debian 13 with qcow2 disk. See this documentation & specifically the note at the end of that section.

-

Just wanted to add a quick comment for anyone that might find this post via Google or similar: This is no longer needed, as the whole functionality (including comments/notes) is now built into vma-to-pbs directly. It can just be pointed at a...

-

TheGadMan replied to the thread e1000e eno1: Detected Hardware Unit Hang:. If I pin my kernel to 6.8.12-8, can I upgrade all the other packages that need upgrading? I've been holding off.

-

kmentzelos replied to the thread LVM on shared storage. After reading through the various discussions about the O_DIRECT bug/feature, I understand that this primarily affects VMs configured with cache mode set to none (which is the default in Proxmox). I’m planning to run the C code to reproduce the...

-

Hi, for everyone who has boot issues on kernel 6.17.4-2 if Intel VMD is enabled: One option would be to try kernel 6.17.9-1 (currently on pve-test [1]), which contains a potential fix [2] for the issue. If you test 6.17.9-1, it would be great if...

-

tombstone reacted to juraedv's post in the thread [SOLVED] CIFS VFS errors about old shares (bad network name) with Like.

Thanks for your quick reply. I checked and there's no hint: /etc/fstab is practically empty. systemctl status '*.mount' gives no hints either (it shows a lot, including the new, working CIFS shares, but not the old ones). I scrolled back a bit in the journal...

-

tombstone reacted to juraedv's post in the thread [SOLVED] CIFS VFS errors about old shares (bad network name) with Like.

My bad, I checked again: the messages don't appear after the last reboot. So I guess some function in the system was still trying (for weeks now!) to communicate with the lost CIFS/NFS connections. But after a reboot this...

-

alexskysilk replied to the thread Best RAID for ZFS in Small Cluster?. If by redundancy you mean disk fault tolerance, the higher the value after "raidz" the higher the fault tolerance. In practice, raidz2+ (never use single parity raidz unless prepared to lose the pool at any time) performance= striped mirrors...

-

werwolf_ replied to the thread [ANN] bzfs 1.18.0 near real-time ZFS replication tool is out. * FYI, bzfs is written in Python (not a "shell script"), compression is configurable via CLI options, and it is about as robust as zrepl, and far more robust and reliable than syncoid. * I think zrepl (and syncoid) have a different focus and...

-

jeffgott replied to the thread Question on disks after resilvering. OK, we already did zpool replace -f <pool> <old-device> <new-device>. But now we should remove the device, wipe it, reinsert it, and do the following: sgdisk <healthy bootable device> -R <new device>, sgdisk -G <new device>, zpool replace -f...

-

bbx1_ posted the thread Moving away from Full Mesh Network - Routed Setup in Proxmox VE: Networking and Firewall. Hey everyone, I have a 3-node PVE cluster that I've been using for testing and learning the platform in a business setting. Initially I had my PVE nodes connected to a Mellanox SX1024 40G switch. A few months ago, I spent some time and...

-

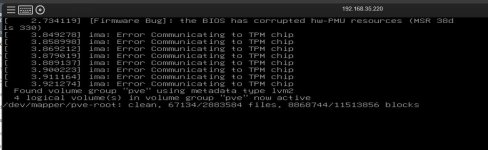

bbgeek17 replied to the thread [SOLVED] Proxmox error?!?!. Get a backup first, then see if there are any available BIOS/firmware updates. Even if there are not, what you posted should not bother you too much. With the large amount of 3rd and 4th tier hardware out there, backed by generic BMC software -...

-

CptnBlues63 replied to the thread [SOLVED] Proxmox error?!?!. It's up and my server is running! I'm going to run a backup on it now so I don't lose all the changes I made since the previous backup (about a year old... argh!). I know, I had another identical server running in production and this was a copy...

-

bbgeek17 replied to the thread [SOLVED] Proxmox error?!?!. In the future, if you do decide to mount a device that might potentially disappear, you should use one of the options discussed here: https://unix.stackexchange.com/questions/53456/what-is-the-difference-between-nobootwait-and-nofail-in-fstab...
