Latest activity

-

I've just had to restore some VMs on PVE after a power outage caused some corruption on my local-lvm array. When I restored the PBS VM from a recent backup, I noticed a lot of breakages (since fixed), with apparmor not allowing dhclient, python...

-

tep1997 replied to the thread RAM Usage Discrepancy. Great information, explains a lot. Thanks all!

-

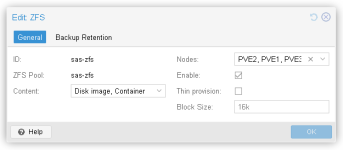

awirthy replied to the thread SSD ZFS Pool keeps increasing in usage space. I'll look at enabling this. Any idea why the SSDs' storage is being used up?

-

Johannes S reacted to celemine1gig's post in the thread Offtopic: Jellyfin erzeugt beim Abspielen hohe Load auf ProxmoxVE with Like.

One more small thing, since you're already running old server hardware: be extremely careful with older server/enterprise-grade SAS and/or SATA SSDs. These often contain larger (electrolytic) buffer capacitors. Against...

-

celemine1gig replied to the thread Offtopic: Jellyfin erzeugt beim Abspielen hohe Load auf ProxmoxVE. One more small thing, since you're already running old server hardware: be extremely careful with older server/enterprise-grade SAS and/or SATA SSDs. These often contain larger (electrolytic) buffer capacitors. Against...

-

awirthy replied to the thread SSD ZFS Pool keeps increasing in usage space. That is not what the UI says. This is not the issue I need solving. I need to know why my SSD storage is being used up.

-

daniel240 posted the thread Migration failed: Storage 'local-lvm' (LVM-Thin) not available on target node in Proxmox VE: Installation and configuration. Subject: Migration failed: Storage 'local-lvm' (LVM-Thin) not available on target node. Message: Hi everyone, I am trying to migrate an LXC container from my main node (pve) to a second node (minipve). Source Node (pve): Has local-lvm (LVM-Thin...

-

alpha754293 replied to the thread Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster? Then how do people have like > 300 OSDs??? Surely they're not having 300+ nodes too. Gotcha. Therefore, with four nodes, the most that I would be able to do would be a (3,1) EC then, correct? (I've only been running a very tiny (2,1) EC with...

-

maze-m replied to the thread Offtopic: Jellyfin erzeugt beim Abspielen hohe Load auf ProxmoxVE. Hi there! Yes, I know the hardware is quite old, and that's how my coworker handed it over to me (his exact words: "When you no longer need the server, please dispose of it at the recycling yard!").... At the moment it's just, I think...

-

SInisterPisces replied to the thread [SOLVED] [PVE 9.1.0/PVE-Manager 9.1.5] Customized ZFS Min/Max Size--Unexpected Behavior in RAM Summary. Did I Do Something Wrong? That makes sense. For some reason, I was expecting it to set aside at least the minimum ARC amount and reserve it, but it makes sense that it doesn't do that on boot. I've since seen that system use the full allotment of ARC after running...

-

awirthy replied to the thread SSD ZFS Pool keeps increasing in usage space. From what I've read, the discard option for SAS disks is only for thin-provisioned storage. Given that, is it still recommended to change this?

-

SInisterPisces reacted to UdoB's post in the thread [SOLVED] [PVE 9.1.0/PVE-Manager 9.1.5] Customized ZFS Min/Max Size--Unexpected Behavior in RAM Summary. Did I Do Something Wrong? with Like.

I would expect this behavior. And yes, the ARC only actually gets used when ZFS recognizes relevant read patterns by watching the MRU/MFU counters. (--> "warm up". Newer systems may reload the ARC on boot from disk though...)

-

celemine1gig reacted to fstrankowski's post in the thread Offtopic: Jellyfin erzeugt beim Abspielen hohe Load auf ProxmoxVE with Like.

Are you transcoding the movies during playback? If so, try turning off all transcoding options.

-

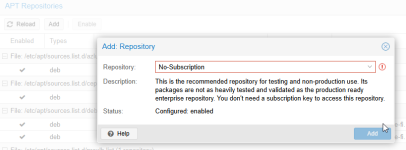

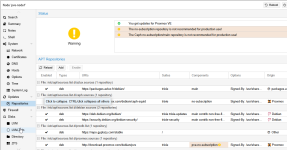

mattbatt replied to the thread [SOLVED] Cannot update fresh pve from pve-no-subscription repo. Oh, I'll have to look at that when I get back. I was just seeing errors that it couldn't "apt-get update" and I followed the error trail here.

-

Johannes S replied to the thread [SOLVED] Cannot update fresh pve from pve-no-subscription repo. Not really. If one uses the WebUI or follows the documentation, the no-sub repository gets added with http instead of https, so no manual intervention is needed: https://pve.proxmox.com/pve-docs/chapter-sysadmin.html#sysadmin_no_subscription_repo...

-

Johannes S reacted to UdoB's post in the thread Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster? with Like.

In my understanding usually the failure domain is "host". I need to be able to shutdown/reboot one node for maintenance. And I want everything to stay alive when (not: if) one node has any kind of problem. You will lose three or four OSDs if any...

-

Johannes S reacted to alexskysilk's post in the thread Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster? with Like.

from understanding failure domains. damn @UdoB beat me to the punch. I won't "professor" you on this. You can either read and understand, or deploy your preconceived notions and learn on your flesh and blood. I would also note that if your...

-

Johannes S reacted to celemine1gig's post in the thread Offtopic: Jellyfin erzeugt beim Abspielen hohe Load auf ProxmoxVE with Like.

Even SAS SSDs (given the mention of "10K", these will be HDDs here) draw a fair amount of power. But that is just one more part of the problem. From today's perspective, the system in question is simply wasteful in terms of energy efficiency...

-

Onslow reacted to powersupport's post in the thread Raid1 boot array, drive replacement procedure with Like.

Replacing a Failed Disk in Proxmox (BTRFS RAID1 – Simple Explanation) If one disk fails in a Proxmox BTRFS RAID1 setup, your server will still boot from the other disk. That’s normal and expected. Sometimes it boots in “read-only mode.” This is...

-

SInisterPisces replied to the thread [PVE 9.1] Smartest Way to Run Ethtool Commands on PVE Node Boot? … As long as I've been using Proxmox, and as often as I've had to tinker with the /etc/network/interfaces file to configure bonds and VLANs and everything else, you'd think I'd have realized it can actually be used to configure all of this sort...