Latest activity

-

silverstone replied to the thread Nothing works anymore "can't lock file '/run/lock/lxc/pve-config-xxx.lock".

I just had this happen to me. It wasn't very straightforward, but this seems to work: (1) Kill the lxc-start Process that started the Container; (2) Manually remove the Lock file; (3) Use lxc-stop with --kill and --nolock Arguments to (try) to stop the...

-

micky5197 posted the thread GPU Passthrough funktioniert nicht mit RX 9070 XT in Proxmox VE (Deutsch/German).

Good morning, I have been trying for almost 2 weeks to create a Windows VM with GPU passthrough in Proxmox, but it simply won't work. Mind you, I have never done this before, but when I tried it on another system...

-

Ozymandias42 posted the thread Suggestion: Option to consolidate Housekeeping-Tasks to Resource Subset like Xen does with Dom0 in Proxmox VE: Installation and configuration.

One of the last advantages of Xen over KVM in terms of virtualisation efficiency is that housekeeping tasks are only done on the CPUs assigned to Dom0, all others are perfectly idle. This has certain advantages in terms of Power-Efficiency...

-

gtozzi replied to the thread S3: Restore failing: "failed to extract file: failed to copy file contents: unexpected status code 504 Gateway Timeout".

I've had a look at the patches and I believe this is the solution. It should not apply to just 500 and 503 but to any 5xx. Can I test it somehow?

-

glasshammer replied to the thread [SOLVED] Can't Boot Installer, No Video Out - Threadripper 3960x + TRX40D8-2N2T.

Welp. This was the dumbest possible solution and entirely unhelpful to anyone who also might have these symptoms. I apparently put an SPI TPM module into my LPC TPM header because my supplier sent the wrong one; they have identical header pins...

-

gtozzi replied to the thread S3: Restore failing: "failed to extract file: failed to copy file contents: unexpected status code 504 Gateway Timeout".

I'm having the same issue on Hetzner. It also happens during backup and verify, so it is not strictly related to restore. It also happens with other software, so it is not strictly related to Proxmox VE. What other software does is wait a brief...

-

karoku89 replied to the thread Netzwerkspeicher unter Proxmox einbinden.

So, translated for me as a semi-layman: 1. Set up ZFS on Proxmox from the disks that I connect via USB (presumably 2, although one would be enough for testing). 2. Container with a Samba share and the Debian 13 (Trixie?) template. 3. Research...

-

nihilanthlnxc replied to the thread automated install via PXE boot.

Greetings. Could you provide the URL of that project on your GitHub? I'm stuck on this. I've tried several variations and nothing works. The installer isn't downloading the answer.toml file. That variation you suggested sounds interesting, and...

-

UdoB replied to the thread Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster?

The usual boot process uses the BIOS firmware to read the very first blocks of the operating system. This is before even the "initrd"/"initramfs" is available = "pre-boot". While boot devices may be local hardware and network devices with some...

UdoB replied to the thread Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster?.The usual boot process uses the BIOS firmware to read the very first blocks of the operating system. This is before even the "initrd"/"initramfs" is available = "pre-boot". While boot devices may be local hardware and network devices with some... -

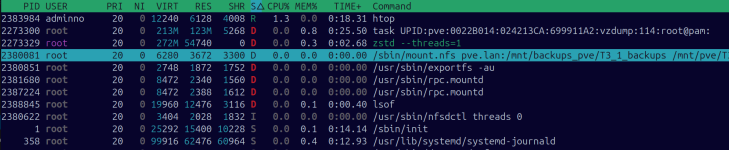

Julian33 replied to the thread LXCs with NFS mounts failing to start after reboot or restore.

Unfortunately this is not fixed. My infra is a home lab with proxmox-ve: 9.1.0 (running kernel: 6.17.9-1-pve), pve-manager: 9.1.5 (running version: 9.1.5/80cf92a64bef6889), 2 nodes. Node has an NFS exported storage and HA VM, among other things. I...

-

UdoB replied to the thread [SOLVED] [PVE 9.1.0/PVE-Manager 9.1.5] Customized ZFS Min/Max Size--Unexpected Behavior in RAM Summary. Did I Do Something Wrong?

I would expect this behavior. And yes, the ARC only actually gets used when ZFS recognizes relevant read patterns by watching the MRU/MFU counters. (--> "warm up". Newer systems may reload the ARC on boot from disk though...)

-

UdoB reacted to bitranox's post in the thread UPS NUTS Client to shutdown other node in cluster (not HA). with Like.

Since your nodes are in a Proxmox cluster, SSH keys are already exchanged between them. That makes this pretty painless. SSH shutdown from node 1's NUT script: Node 1 already has a working NUT client, so you just add a script that SSHs into node...

-

gurubert reacted to VictorSTS's post in the thread Ceph squid OSD crash related to RocksDB ceph_assert(cut_off == p->length) with Like.

Thanks for the heads up. Pretty sure most were created with Ceph Reef except a few that got recreated recently with Squid 19.2.3. I'm aware of that bug, but given that I don't use EC pools (Ceph bug report mentions it seems to only happen on OSD...

-

gurubert reacted to bitranox's post in the thread Ceph squid OSD crash related to RocksDB ceph_assert(cut_off == p->length) with Like.

As others already pointed out, this hits OSDs that are fairly full (~75%+) with heavy disk fragmentation. v19.2.3 already ships a race condition fix (https://ceph.io/en/news/blog/2025/v19-2-3-squid-released/) that prevents new corruption, but it...

-

alpha754293 posted the thread Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster? in Proxmox VE: Installation and configuration.

Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster such that Proxmox boots off of a Ceph cluster? Or is this not possible?

-

sacarias replied to the thread Reporting enhancements in the bug tracker.

@t.lamprecht PVE 9.1 still doesn't have the proposed changes...

-

fstrankowski replied to the thread [SOLVED] Another io-error with yellow triangle.

Maybe useful for people coming back to this thread one day: Take a look at the kernel.org thread which describes this bug including examples from Proxmox and QEMU. There is also an excellent writeup here.

-

TritonB7 reacted to nvanaert's post in the thread BUG: Installing Microsoft Windows Server 2025 fails (related to NUMA / CPU- & Memory Hotplug) with Like.

Update: I've got past the installer. It's likely a bug, related to NUMA or CPU / Memory hotplug as those are the features that I've turned off. Update 2: Once the installation is done I can turn on NUMA and CPU / Memory Hotplug and I do not seem...

-

Kingneutron reacted to l.leahu-vladucu's post in the thread Connect a USB drive plugged into PC to a VM? with Like.

Hello craig.stephens! Yes, you can do this using USB Passthrough. Click on the VM and go to Hardware -> Add -> USB Device.

-

zenoprax replied to the thread HA does not trigger when node loses LAN but remains in quorum.

> it is very hard to determine a good set of robust rules enabling this to be automatic ... I feel like they are missing the point of your proposal which was essentially "add the ability to add some additional conditions for failure". Deploying...