Latest activity

discusseded replied to the thread Proxmox VLAN + Unifi - VMs don't get IP when VLAN tagged. In my case I had two problems: I had assigned the server port to a unique zone that didn't have the correct routing/firewall settings configured. The port to the gateway was assigned an Ethernet Port Profile that didn't allow tagged VLANs. Once...

-

fstrankowski replied to the thread Wie kann ich im Cluster Ausfallsicherheit herstellen?.Nicht mit ZFS-Replikation.

fstrankowski replied to the thread Wie kann ich im Cluster Ausfallsicherheit herstellen?.Nicht mit ZFS-Replikation. -

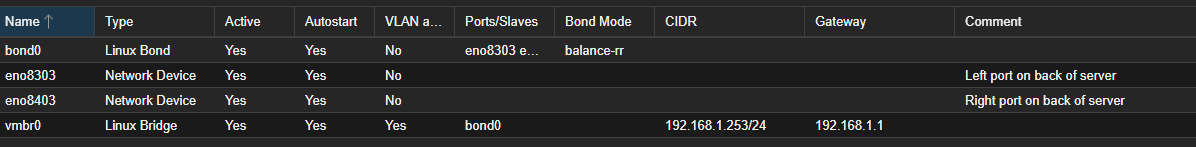

Onslow replied to the thread Networking slow to come up on VMs, intermittent routing issues on some. I admit I'm not very experienced with Proxmox's networking, so at the moment I'm just comparing your config with the similar one at https://pve.proxmox.com/pve-docs/chapter-sysadmin.html#sysadmin_network_bond - section "Example: Use a bond as the...

Skamanda replied to the thread Networking slow to come up on VMs, intermittent routing issues on some. # network interface settings; autogenerated # Please do NOT modify this file directly, unless you know what # you're doing. # # If you want to manage parts of the network configuration manually, # please utilize the 'source' or 'source-directory'...

Onslow replied to the thread Networking slow to come up on VMs, intermittent routing issues on some. The output of cat /etc/network/interfaces would give more details :) Of course not as a screenshot, but as text in CODE tags (using the </> button above).


tp1de replied to the thread Wie kann ich im Cluster Ausfallsicherheit herstellen? (How can I set up failover in a cluster?). All I care about is that 2 to 3 VMs keep running if a node fails, based on the last replication and ideally automatically. Is there a way to set that up?

fstrankowski replied to the thread Wie kann ich im Cluster Ausfallsicherheit herstellen? (How can I set up failover in a cluster?). If you want real HA, there is no way around shared storage. With ZFS replication you always have the delta between syncs, and in the worst case you have to move the VM/LXC config by hand.

LameDuck reacted to Impact's post in the thread Promox, OMV and USB disk enclosure - how to set-up with Like.

The issue with giving the whole disk(s) to the VM like that is that snapshots or backups don't include that storage then.

Skamanda replied to the thread Networking slow to come up on VMs, intermittent routing issues on some. Sorry, coffee hadn't kicked in yet. Bonded, not bridged.

Onslow replied to the thread Networking slow to come up on VMs, intermittent routing issues on some. Welcome, @Skamanda. Sounds like that could be the reason. Are these bridges in the same network, by chance? What are the networks' settings? Especially for these bridges.

UdoB reacted to Johannes S's post in the thread Live migration from LVM to LVM thin without dataloss? with Like.

No, migrating between nodes and back should work. For the re-setup, migrating the guests off the node, removing it from the cluster, and reinstalling is probably the best course of action...

UdoB reacted to guruevi's post in the thread [SOLVED] diskspace vs. io performance while deciding of the pool architecture of a pve server with Like.

There will be few workloads that will be able to stress out your NVMe lanes. Unless you have that kind of workload, I would suggest 2x6 RAIDZ2 if you only have 1 server - it is safer to have fewer disks in a VDEV, and it is still plenty fast. Don’t...

LameDuck replied to the thread Promox, OMV and USB disk enclosure - how to set-up. I've finally chosen OMV as a VM and hardware RAID1 within the Terramaster enclosure.

mmmmv replied to the thread [TUTORIAL] How to create Windows cloudinit templates on proxmox 7.3 (PATCH INCLUDED). Did you ever get this to work?

silverstone replied to the thread LXC Unprivileged Container Isolation. Bump

mwrothbe replied to the thread Network fails at boot. Nope. A 2-port onboard Intel X710 and a 4-port Intel X710 NIC. I can try disabling RDMA and see what happens, but I don't have the other symptoms you mention. Everything had been working fine for about a year with this HW config, up to a couple...

Johannes S replied to the thread Issue with license ( from remote ) ?. This is as expected. At least 80% of the remotes need to have a basic subscription for you not to get this message, see this post by a staff member: https://forum.proxmox.com/threads/proxmox-datacenter-manager-1-0-stable.177321/post-821945 My guess is...

Johannes S reacted to SteveITS's post in the thread [SOLVED] Prune simulator OK but real prune KO :-( with Like.

Without double-checking our logs, the fact that the Feb 11 log starts with the 2/3 backup implies to me that the others were removed before that task ran? Is there any sync job? Or retention set on the backup job? Normally retention is additive...14...