Latest activity

-

Agoraphobie replied to the thread Disabling "You do not have a valid subscription for this server ...................". Hello, I came across this post after attending a very expensive Proxmox training course through my employer. There, too, it was mentioned quite matter-of-factly that this message can be turned off. Of improper or frowned-upon...

-

shanreich replied to the thread Confused regarding guest isolation on cluster. Port isolation uses the isolated flag for bridge ports (for more information see [1]). Because of that, it can only work locally. If you want to do this across hosts, or have more fine-grained control over traffic between guests on the same VNet...
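
The locality follows from how the flag works: per the Linux bridge(8) man page, a port with isolated on can communicate with non-isolated ports only. The minimal Python sketch below models just that rule, not the kernel's implementation. The Port class, the host names pve1/pve2, the tap port names, and the assumption that each host's physical uplink port is not isolated are all hypothetical. It shows that two isolated guests on the same bridge are blocked, while isolated guests on different hosts can still reach each other through the non-isolated uplinks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Port:
    """Hypothetical bridge port: its name, the host whose bridge it sits on, and the isolated flag."""
    name: str
    host: str
    isolated: bool


def bridge_allows(src_isolated: bool, dst_isolated: bool) -> bool:
    """Rule on a single local bridge: isolated ports may only talk to non-isolated ports."""
    return not (src_isolated and dst_isolated)


def path_allowed(src: Port, dst: Port) -> bool:
    """Can a frame get from src to dst under the isolated-port rule alone?"""
    if src.host == dst.host:
        # Same bridge: the flag is enforced directly between the two ports.
        return bridge_allows(src.isolated, dst.isolated)
    # Different hosts: the frame leaves via src's uplink and enters via dst's uplink.
    # Assumption: physical uplink ports are not isolated.
    uplink_isolated = False
    return (bridge_allows(src.isolated, uplink_isolated)
            and bridge_allows(uplink_isolated, dst.isolated))


if __name__ == "__main__":
    a = Port("tap100i0", "pve1", isolated=True)
    b = Port("tap101i0", "pve1", isolated=True)
    c = Port("tap102i0", "pve2", isolated=True)
    print("same host, both isolated:", path_allowed(a, b))       # False
    print("different hosts, both isolated:", path_allowed(a, c))  # True
```

This is why cross-host or finer-grained control needs a mechanism that is not tied to one local bridge.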

shanreich replied to the thread Ansible/Terraform breaks on VLAN Interfaces. This often occurs when there are MTU issues somewhere along the path. Did you double-check your MTU config across the whole path? You can always check with the ping command:

    # 9000 MTU
    ping -M do -s 8972 {target host}
    # 1500 MTU
    ping -M do -s...
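
The payload size in such a check is the MTU minus the protocol headers: with IPv4 (20-byte header, no options) and ICMP echo (8-byte header), a 9000-byte MTU leaves 9000 - 28 = 8972 bytes for ping's -s payload, and -M do forbids fragmentation so an oversized packet fails instead of being split. A small Python sketch of that arithmetic, assuming IPv4 only (IPv6 has a 40-byte header, so its numbers differ); the helper names and the target address are hypothetical:

```python
IPV4_HEADER_BYTES = 20  # IPv4 header without options
ICMP_HEADER_BYTES = 8   # ICMP echo request header


def icmp_payload_for_mtu(mtu: int) -> int:
    """Largest ping -s payload that fits in one unfragmented IPv4 packet of mtu bytes."""
    payload = mtu - IPV4_HEADER_BYTES - ICMP_HEADER_BYTES
    if payload < 0:
        raise ValueError(f"MTU {mtu} is too small for the IPv4 and ICMP headers")
    return payload


def ping_command(mtu: int, target: str) -> list[str]:
    """Build the argv for a don't-fragment ping (-M do) sized to exactly fill mtu."""
    return ["ping", "-M", "do", "-s", str(icmp_payload_for_mtu(mtu)), target]


if __name__ == "__main__":
    for mtu in (9000, 1500):
        print(mtu, icmp_payload_for_mtu(mtu))  # 9000 -> 8972, 1500 -> 1472
```

If the 1500-sized ping gets replies but the jumbo-sized one does not, some device along the path is likely not passing 9000-byte packets.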

kriev98 replied to the thread PBS Sync job timeout. I can't use the removable option since the source PBS is in a datacenter; we only rent the server. I have run iperf for 1 h between both PBS servers, at full gigabit without any interruptions. The configuration is a pull from the remote PBS. The...

-

james74 replied to the thread [SOLVED] MINISFORUM MS-A2 (AMD Ryzen 9 9955HX) - solution, use a ZFS option and not EXT4 when setting up storage. Not trying to be rude, but can you explain that? These options were not available during install. This is what I found: "Logical Volumes (LVs) are not a file system; they are virtual partitions created by Logical Volume Management (LVM) that can...

-

dcsapak replied to the thread Proxmox VM Truenas Scale shows status stopped but is running. The host's journal/log would be interesting, from the time you turned on the guest until shortly after it shows the wrong status.
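
To narrow the journal to that window, journalctl accepts --since and --until timestamps. A minimal Python sketch that builds such a command; the function name, the two timestamps, and the five-minute margin are hypothetical placeholders to replace with the actual times:

```python
import shlex
from datetime import datetime, timedelta

# journalctl accepts timestamps in "YYYY-MM-DD HH:MM:SS" form for --since/--until.
JOURNAL_TIME_FORMAT = "%Y-%m-%d %H:%M:%S"


def journal_window_cmd(guest_started: datetime, wrong_status_seen: datetime,
                       margin: timedelta = timedelta(minutes=5)) -> list[str]:
    """Build a journalctl argv from guest start until margin after the wrong status appeared."""
    if wrong_status_seen < guest_started:
        raise ValueError("wrong_status_seen must not be earlier than guest_started")
    return [
        "journalctl",
        "--since", guest_started.strftime(JOURNAL_TIME_FORMAT),
        "--until", (wrong_status_seen + margin).strftime(JOURNAL_TIME_FORMAT),
    ]


if __name__ == "__main__":
    # Hypothetical example times; replace with the real ones.
    cmd = journal_window_cmd(datetime(2025, 2, 13, 12, 0), datetime(2025, 2, 13, 12, 30))
    print(shlex.join(cmd))
```

Running the printed command on the host and redirecting its output to a file gives something that could be attached to the thread.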

piotrpierzchala replied to the thread Space reclamation on thin provisioning after removing file from debian 13 with qcow2 disk. There is one more thing I have to write down... while "trim" and space reclamation will eventually work at some point (we just cannot predict when, or what actually triggers it), so far I haven't been able to reclaim that...

-

james74 replied to the thread [SOLVED] MINISFORUM MS-A2 (AMD Ryzen 9 9955HX) - solution, use a ZFS option and not EXT4 when setting up storage. I use GParted Live to wipe the drives before each attempt, so there is no need to remove them. I have also tried 9.1x and 8.4x, and it hangs at the exact same spot. I'll check the "secure boot" option.

-

Dep1911 replied to the thread Proxmox Mail Gateway - User Blocklist. Is this a shared database or a separate one for each node in a cluster configuration? After clearing a lot of unnecessary records, I noticed that some records remained on the second cluster node. pmgsh get /nodes/localhost/status | grep insync...

-

daniel17n posted the thread [SOLVED] Can't change resource pool assigned to a VM via CLI. in Proxmox VE: Installation and configuration. Hi, I have a 3-node cluster with Ceph. I created some resource pools to act as "folders" and restrict what a generic user can see. I'm trying to build a script that moves VMs from their resource pools to another one called "toBeDeleted" if...

-

Heracleos reacted to VictorSTS's post in the thread Ceph 20.2 Tentacle Release Available as test preview and Ceph 18.2 Reef soon to be fully EOL with Like.

Just follow the docs: https://pve.proxmox.com/wiki/Ceph_Squid_to_Tentacle

Unpeeled5565 posted the thread Ansible/Terraform breaks on VLAN Interfaces in Proxmox VE: Networking and Firewall. I am looking into a move from VMware to Proxmox and am stumped by what I believe to be a bug that completely breaks my automation tools when VLANs are configured for the management interface. When the Proxmox host has management IPs on VLAN...

-

piotrpierzchala replied to the thread Space reclamation on thin provisioning after removing file from debian 13 with qcow2 disk. These are the results after trimming, before moving the VM's disk:

    root@:/mnt/pve/truenas01-41-test2-01/images/102# ls -l vm-102-disk-1.qcow2
    -rw-r----- 1 root nogroup 16108814336 Feb 13 12:54 vm-102-disk-1.qcow2...
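
ls -l shows only the apparent size of the image file. For a sparse or thin image, space released by a trim may show up as fewer allocated blocks (holes punched in the file) while the apparent size stays the same, so comparing both numbers can make reclamation visible. A minimal Python sketch, assuming a Linux host where os.stat reports st_blocks in 512-byte units; the function name is hypothetical, and whether a network-backed mount such as the one under /mnt/pve reports allocation accurately is an assumption to verify:

```python
import os
import sys


def apparent_and_allocated(path: str) -> tuple[int, int]:
    """Return (apparent size, allocated size) in bytes.

    st_size is the apparent size that ls -l prints. On Linux, st_blocks counts
    512-byte units actually allocated on disk, which is what du reports.
    """
    st = os.stat(path)
    return st.st_size, st.st_blocks * 512


if __name__ == "__main__":
    for path in sys.argv[1:]:
        apparent, allocated = apparent_and_allocated(path)
        print(f"{path}: apparent={apparent} allocated={allocated}")
```

The same comparison is available from GNU du as du -h versus du -h --apparent-size.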

-

VictorSTS replied to the thread Ceph 20.2 Tentacle Release Available as test preview and Ceph 18.2 Reef soon to be fully EOL. Just follow the docs: https://pve.proxmox.com/wiki/Ceph_Squid_to_Tentacle

Heracleos replied to the thread Ceph 20.2 Tentacle Release Available as test preview and Ceph 18.2 Reef soon to be fully EOL. Okay, understood. In my case, since it's an “old” installation, I have to manually modify the repositories in /etc/apt. For those who have already upgraded, have you experienced any particular problems? Is it fairly reliable? Thanks.

-

Heracleos reacted to jsterr's post in the thread Ceph 20.2 Tentacle Release Available as test preview and Ceph 18.2 Reef soon to be fully EOL with Like.

It's visible when you install a new Proxmox node with Ceph; there you can already select it.

raiz3n replied to the thread Proxmox web UI accessible but shell returns Error 500: close (rename) atomic file..... Do you have the same errors in your log? cuowu: daai suers wenjan shbai cuowu: shzhi wejian wuhong xngzhi sibai OK, I will double-check. Thanks.

-

pvejj replied to the thread [SOLVED] new SSD Storage not available. @uzumo: OK, the noob has got it already. Thanks, I changed the (No prefix) to Solved.

-

Udone

-

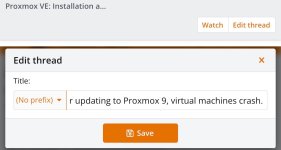

uzumo replied to the thread [SOLVED] new SSD Storage not available. The way to mark a thread as solved is shown in the image. Please change the prefix via the menu instead of writing it into the title.