Latest activity

-

carles89 reacted to Blueloop's post in the thread Recommendations for virtualized Router/Firewall on Proxmox with Like.

I'll give you some options: OPNSense, pfSense, VyOS, Proxmox! A Debian box is quite capable of being a very decent router and you already have one: Proxmox. However, that is one for the likes of me to run up. What you probably need is something...

-

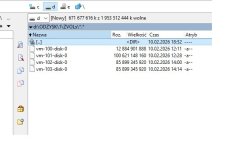

gogito replied to the thread ZFS 2.4.0 Special Device + Data VDEV Report Very Wrong Remaining Space. Ah, forgot to mention, it's kinda like my backup pool. My main pool is mirror special device + raidz1 4xhdd

-

beastyAlk replied to the thread SDN aliases not found by firewall. Here. Thanks for the help

-

McJameson replied to the thread [SOLVED] No such logical volume pve/data. I thought I installed my 2nd server the same way I did my 1st one, but I might have chosen ZFS instead of LVM, even though the concept of Thin Provisioning sounded interesting to me. This output of the two commands: root@pve-universe-server:~#...

-

FingerlessGloves posted the thread Prevent IPv6 local link on SDN VNet in Proxmox VE: Networking and Firewall. Hi, it would be nice if there was an option to prevent IPv6 Link-Local happening on the bridges that get created. In my setup I've created a VNET for each VLAN I need, but I noticed each bridge has an IPv6 Link-Local, which means the host is...

-

MMartinez replied to the thread Ceph multi-public-network setup: CephFS on separate network. Thanks. It seems that the problem is described in this "tip". I was trying to keep the Ceph private network isolated so it is not routed. It looks like both public networks need to be routed and visible to each other. In that case, I will choose...

-

the-truest-repairman replied to the thread MS-A2 Minisforum (AMD Ryzen™ 9 9955HX + Radeon™ 610M) – The “I Finally Stopped Crying” Zero-Artifact iGPU Passthrough Thread. New to virtualization here haha, so managing the nested nature of this project has been hard. Reason for doing it with VM instead of LXC: needing access to stable proprietary AMF drivers for AMD VCN hardware encoding on another service (not...

-

I use Proxmox. I have four virtual machines on SATA SSD drives (the machine's motherboard doesn't support RAID). They were running in a ZFS pool. Using a ZFS data recovery program, I recovered the machine file, but without the extension. Is it...

-

Hammer72 replied to the thread Gen1 VM migrate from Hyper-V 2019 to ProxmoxVE9. Hi all, just wanted to update my findings on what worked for me. Below are the steps taken to recover a RHEL9 virtual machine running on Hyper-V 2019 to Proxmox VE9: 1. Copied .vhdx file to /var/lib/vz/images 2. Convert image to qcow2 <qemu-img...

-

Tahsin replied to the thread Intel B50pro passthrough.. There aren't any specific ones for the Intel cards I use. I don't have access to my computer remotely. I can get all the information on how mine is set up later today. When you had the code 43 error in Windows, what did the Windows Event Viewer say...

-

alexskysilk replied to the thread Ceph multi-public-network setup: CephFS on separate network. Ceph allows multiple public networks. Just make sure your monitors exist on whatever public network(s) you define. See https://docs.ceph.com/en/reef/rados/configuration/network-config-ref/

-

Somebodier reacted to ZHS's post in the thread Proxmox boot stuck on: "mpt3sas_cm0: overriding MVDATA EEDPTagMode setting" with Like.

Sorry for the late reply, but I fixed the problem by reinstalling the whole system from scratch. Hopefully you have proper backups so the reinstallation can run smoothly.

-

weehooey-bh reacted to fweber's post in the thread 40 node prod cluster restarts when joining a new node or removing. with Like.

Sounds good, thanks for reporting back! I'd generally recommend a dedicated primary network for corosync, to prevent other traffic from driving up corosync latencies, see [1]. corosync can handle multiple redundant networks itself [2], so...

-

hat7 replied to the thread Try to install Proxmox 9.1 on a old linux server, and get lots of disk IO error.. Just some additional info. It seems that the problem is related to the kernel version only. I have very similar issues, but: the machine is new (2 months old); I had no problems after installing PVE 8 (Kernel 6.8.12-17-pve); after 2 weeks I upgraded to 9.1...

-

ComfySofa replied to the thread Intel B50pro passthrough.. OK, I'll remove it. What are the specific settings for an Intel card? All the instructions I've read are aimed at either Intel IGP, NVIDIA or AMD.

-

Tahsin replied to the thread Intel B50pro passthrough.. Let's not blacklist 'xe'.

-

bbgeek17 reacted to FingerlessGloves's post in the thread LVM and snapshot-as-volume-chain with Like.

Thanks for those useful links, I'll read those tomorrow, when I'm back in work :)

-

FingerlessGloves replied to the thread LVM and snapshot-as-volume-chain. Thanks for those useful links, I'll read those tomorrow, when I'm back in work :)

-

bbgeek17 reacted to aaron's post in the thread Cluster two node active with shared storage in FC connection with Like.

Well, live migration should usually work always. With a non-shared storage it will also transfer the disks of the guests, and that can take a long time. So if you followed the multipath guide and still have some issues, the question would be...

-

aaron replied to the thread Cluster two node active with shared storage in FC connection. Well, live migration should usually work always. With a non-shared storage it will also transfer the disks of the guests, and that can take a long time. So if you followed the multipath guide and still have some issues, the question would be...