Latest activity

-

ComfySofa replied to the thread Intel B50pro passthrough. Yep, I know it would work... just wondered why the driver thought it was off when it was enabled in the BIOS. I'm in the office today, so I'll do that when I get home tonight.

-

wrre replied to the thread Avoid Reuse of VMID. I don't think this is a good or stable approach. And I know it's open source and we could contribute the technical side of the code. But this seems to be a pretty important architectural decision on the core of Proxmox and Backup Server and much...

-

UdoB replied to the thread After upgrading from 9.0 and 9.1 VM Truenas won't start. Out of curiosity: is TrueNAS able to read SMART data of those disks?

-

Xcite replied to the thread After upgrading from 9.0 and 9.1 VM Truenas won't start. A small follow-up for anyone who might also run into this issue... here is how I fixed it for now: Instead of passing through the entire PCIe SATA device to the TrueNAS VM, I followed the guide Passthrough HDDs to VM to pass my HDDs individually...

-

Heracleos reacted to sbarmen's post in the thread Ceph 20.2 Tentacle Release Available as test preview and Ceph 18.2 Reef soon to be fully EOL with Like.

Just upgraded myself. Went just fine, no issues. 3 OSDs. I got some interesting data after upgrading: Ceph Squid → Tentacle Upgrade Benchmark Summary. Cluster: 3-node Proxmox (Intel NUC14, NVMe). Pool: ceph-vms (replicated, size 2 / min_size 2)...

-

(I've dropped loads of terms that can be searched on here.) Proxmox SDN does not include a router. It looks to be designed for layer 2 and tunneled layer 2. To be honest, I haven't bothered with it. However, I have been fiddling with networks...

-

Rheinold replied to the thread [SOLVED] Proxmox und VM auf einem Laufwerk? As I understood it, I can use sdb for both the Proxmox system and for VMs, or am I misunderstanding that?

-

UdoB reacted to bbgeek17's post in the thread Simple concept for manual, short term use failover without significant downtime? with Like.

This is exactly the impression I was getting from the discussion as well. The OP wants a “simple” small-business solution that nonetheless delivers a fully featured, reliable DR setup with many characteristics typically associated with enterprise...

-

obelix replied to the thread kernel: net_ratelimit: 11 callbacks suppressed, kernel: TCP: too many orphaned sockets.

-

UdoB reacted to alexskysilk's post in the thread Simple concept for manual, short term use failover without significant downtime? with Like.

I think you may be obsessing about the "performance" and "cost" aspects entirely too much. 1. What is the makeup of your payload? (number of VMs, function, ACTUAL performance in IOPS) 2. A backup device MUST be a separate device than the...

-

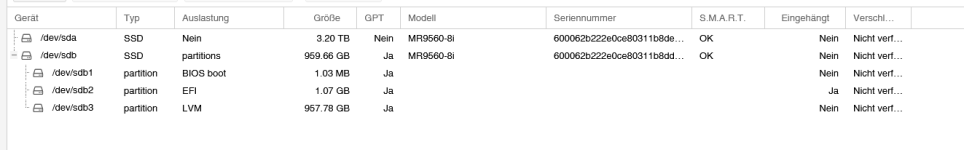

boisbleu replied to the thread [SOLVED] Proxmox und VM auf einem Laufwerk? We are talking about the large RAID, right? You can mount /dev/sda either via fstab plus an entry in storage.conf, or via Directory (Disks, Directory). https://pve.proxmox.com/pve-docs/chapter-pvesm.html#storage_directory

-

raiz3n replied to the thread Proxmox web UI accessible but shell returns Error 500: close (rename) atomic file..... Seriously? No solution? I also tried those commands, seen on another thread: chmod 777 on the file and then: systemctl stop pve-cluster; rm -f /var/log/pve/tasks/active*; rm -f /var/log/pve/tasks/index*; systemctl start pve-cluster...

-

gfngfn256 replied to the thread VM names disappear. I don't see why you are using Veeam backup for Proxmox VMs. Use the in-house vzdump or PBS for VM backups.

-

UdoB replied to the thread Questions about ZFS replication and HA. You enable HA for a VM, not for a Node. The node where an HA-VM is running will fence (hard reboot) itself when Quorum is lost. Other nodes without a HA resource will not do that. Yes, that's the idea. The direction of the replication switches...

-

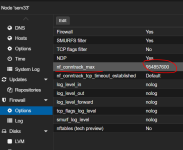

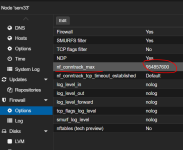

Rheinold replied to the thread [SOLVED] Proxmox und VM auf einem Laufwerk? I have now done it that way. This is the result. But how do I get at the capacity now? I am only ever offered the second RAID.

-

gfngfn256 replied to the thread input/output error. Got the picture. As stated above, on Proxmox host reboot, the mdadm RAID is probably being picked up by the host and then terminated. To avoid this, you could try to edit (host-side): nano /etc/mdadm/mdadm.conf #to specifically ignore these...

-

fiveangle replied to the thread [SOLVED] Wireguard and DNS within LXC Container. BTW, all that business is outdated, I believe, since, what? 8.something? PVE kernel has wg built in, so no need to install stuff in the host (sounds like you've got that going, but if you did install wireguard-dkms on the PVE host, maybe that's...

-

ghandalf replied to the thread Proxmox Datacenter Manager 1.0 (stable). For me, this would be even worse. My dev servers are 2-socket servers with 6-core CPUs, whereas the prod systems are single-socket with 32-core CPUs. Either way, all of them have subscriptions, dev community and prod standard, this should count...

-

Rheinold replied to the thread [SOLVED] Proxmox und VM auf einem Laufwerk? Yes, that is the case. But I was under the impression that it was no better the other way around. I will install it again, though, and look closely at what I may have done wrong. I was under the impression that when allocating the complete...

-

boisbleu replied to the thread [SOLVED] Proxmox und VM auf einem Laufwerk? It looks as if you only allocated 50GB of the first RAID. During installation, you should either let the installer decide the disk layout itself or assign it manually. But if you set 50GB as hdsize, then...