Latest activity

-

silverstone replied to the thread Mountpoints for LXC containers broken after update. Well, maybe so, but a breaking change such as this one (which is NOT the first by a long shot), which prevents containers from starting, should be treated more carefully and not just forced upon users. It clearly doesn't work. System is up to date...

-

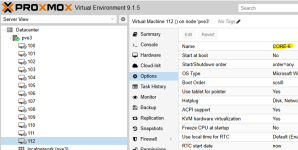

ceghey replied to the thread [SOLVED] Anti-Cheat KVM Settings. I tried everything suggested above; nothing helped. Only adding this line worked for me. Proxmox 9.1.4, GPU 3090 pass-through to Win11 VM, for Star Citizen EAC.

-

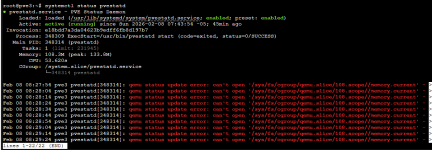

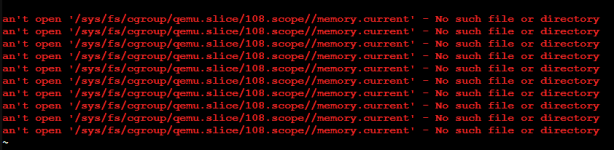

jeffgott replied to the thread VM names disappear. I ran "systemctl status pvestat". Here is the output:

-

silverstone replied to the thread Mountpoints for LXC containers broken after update. I confirm I was also just affected by this. I opened another issue a few minutes ago about it: https://forum.proxmox.com/threads/lxc-fails-to-start-when-using-read-only-mountpoint.180440/

-

silverstone posted the thread LXC Fails to start when using read-only Mountpoint in Proxmox VE: Installation and configuration. I have a mountpoint /tools_nfs that is mounted read-only on most of my systems, and I want to be able to pass it to each container. It worked fine until now. I suspect one of the latest system updates broke it :( . Attached is the Debug...

-

haijer replied to the thread Issues with proxmox VNET and dnsmasq (dhcp ranges, option dhcp-host). I am seeing the same issue: the DHCP range is not configured in the dnsmasq config. Running V9.1.4.

-

I have had the names of the VMs disappear several times now in Server View, but the names show up in Options for each VM. Several VMs are still running. I can change the view but none will show the names. This is a standalone server - not...

-

Johannes S reacted to powersupport's post in the thread Mountpoints for LXC containers broken after update with Like.

After the update, Proxmox now handles container mount points more strictly. This mainly affects unprivileged containers. Mounted folders now show up as root:root inside the container, so services like MySQL can’t access their files. If a mount...

-

Johannes S replied to the thread Proxmox LXD?. You are confusing LXCs (Linux containers) with LXD (software for managing VMs and LXCs). Both LXD (and its fork Incus) and Proxmox VE manage VMs and LXCs. LXCs are more lightweight than VMs but only work for Linux applications since they run directly...

-

scarfaceuk replied to the thread Mountpoints for LXC containers broken after update. Setting mountidmap=1 didn't work for my NFS share but removing the read-only limitation did.

-

The pve-container project and its tooling (e.g., pct = Proxmox Container Toolkit) are already doing all that and more. We're using LXC similarly to how LXD does: as a low-level toolkit which we control with higher-level management, things like a syscall...

-

These really aren't containers; rather, LXD spins up a regular virtual machine, which Proxmox VE can also already do fine. (On a technical level, containerization of Windows on e.g. Linux isn't even possible, as containers share the host...

-

Johannes S reacted to SteveITS's post in the thread How to Reduce SSD Wearout in Proxmox? with Like.

OP mentioned TRIM… in ZFS it’s only enabled for NVMe disks by default. See here and the later posts that link to the reasons. https://forum.proxmox.com/threads/server-disk-i-o-delay-100-during-cloning-and-backup.173051/post-835149 ZFS has a...

-

It helps, but it is not the main culprit with PVE. PVE writes constantly to its sqlite3 database, which is the storage behind /etc/pve, and also stores metrics via rrdtool. Most of the time, those are very small writes that get write amplified a...

-

Z80user replied to the thread storage status: use SI units for usage for consistency. Thanks... I didn't know where to create the report: https://bugzilla.proxmox.com/show_bug.cgi?id=7292

-

outer_network posted the thread [SOLVED] How one mark as spam/ham mail from external mailserver+webmail in Mail Gateway: Installation and configuration. Hello, thank you for the wonderful software; it fits very well in the place where Zimbra would be (with extra steps and only as a cog in a bigger machine). I move messages based on user mailbox interaction with the /Spam folder. If the user marks mail as spam the...

-

UdoB reacted to Johannes S's post in the thread [SOLVED] auf gleichem PBS (v3) langsamen Storage zum langzeit Archivieren verwenden with Like.

What? Pruning is actually quite fast. Garbage collection or verify jobs, however, will always take a long time on HDDs, because PBS splits the data into countless small files (chunks) and every (!) chunk is read in for that...

-

SteveITS replied to the thread How to Reduce SSD Wearout in Proxmox?. OP mentioned TRIM… in ZFS it’s only enabled for NVMe disks by default. See here and the later posts that link to the reasons. https://forum.proxmox.com/threads/server-disk-i-o-delay-100-during-cloning-and-backup.173051/post-835149 ZFS has a...

-

lordprotector replied to the thread [Virtual Machine Config] Windows 11 Pro Memory Integrity: Does it require nested virtualization?. I didn't have this problem btw. Works fine with my Arc Pro B50 VF.

-

lordprotector replied to the thread [Virtual Machine Config] Windows 11 Pro Memory Integrity: Does it require nested virtualization?. Some notes on this: You don't need the "host" CPU type. Memory Isolation uses Hyper-V to run critical processes inside a virtualized environment. Basically, right now it requires nested virtualization. As you may know, nested virtualization can be...