Latest activity

-

Fearless-Grape5584 replied to the thread Multi-tenant. Short answer: yes, Proxmox VE can be used for multi-tenant style setups – but it doesn’t come as an “opinionated multi-tenant product” out of the box. You normally combine a few native features: - Pools and roles (RBAC) for separating who can...

-

uzumo replied to the thread CPU Arguments cuts passthroughed NVMe performance by 4 times. I don't feel like going into detail about eac. We have not experienced significant performance impacts outside of hypervisor-protected code integrity (HVCI)...

-

dompro replied to the thread Can't boot VM "Bitmap '' doesn't satisfy the constraints". qemu-img check and qemu-img info both end in the same error. With lvcreate and dd we are able to create a copy of the disk. On the copy we tried to remove the bitmap with, but it also ends in the same error: root@hi0s0046:/dev# qemu-img bitmap...

-

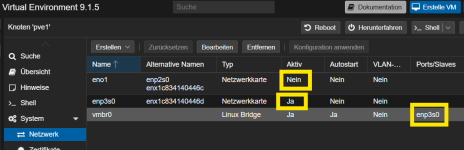

Ernst T. replied to the thread Mein Proxmox friert jede Nacht ein. Könnt ihr helfen? The interface is probably just not enabled. You can change that in the GUI, though.

-

uzumo replied to the thread GMKTEC K12 | Ryzen 7 H 255 | Problem with IGPU. BIOS and similar items may not be redistributed unless explicitly provided by the manufacturer.

-

Fearless-Grape5584 replied to the thread How do you enforce per-pool (tenant) resource quotas for CPU / RAM / disk in Proxmox? Hi Lucas, thanks, that’s a very helpful perspective. I agree that PDM + multiple clusters can be a solid approach when you want to separate tenants at the cluster boundary. In my case, I’m exploring a different direction: squeezing...

-

Red Squirrel replied to the thread Is there a mechanism that could cause a node to shutdown? It's a very slow process since SSH keeps locking up, but here's the output of those commands: root@pve02:~# pvecm status Cluster information ------------------- Name: PVEProduction Config Version: 11 Transport: knet Secure...

-

RiderofDragons68 replied to the thread vulnhub machines. Here is some additional information: I am creating the VMs on my Apple Mac Pro (2013-2017) with 64GB and a 12-core processor. I also have 2 additional nodes and they are both Dell R620s. I have one with a 2.7 GHz 8-core, 16-thread CPU and the other is 12...

-

Kingneutron replied to the thread Recommended mini PC server to host ProxMox. BTW, there is no capital M in Proxmox. My Beelink EQR6 Ryzen 9 is similar, but I bought it for under $500 well before RAM prices skyrocketed - and upgraded it from 24GB to 64GB RAM. Under moderate load (~6.86 in htop, 1/2 the cores active) it...

-

I have been trying to convert a few vulnhub machines to Proxmox. So far I can get the VM to move; that is no problem. The issue is that the network card is not detected. I am getting a new adapter that is being detected, but it will not detect a...

-

fstrankowski replied to the thread Is there a mechanism that could cause a node to shutdown? Again, we need information about your cluster: pvecm status; pvecm nodes; cat /etc/corosync/corosync.conf; corosync-cfgtool -s (on all nodes); journalctl -xeu pve-cluster.service

-

Kingneutron reacted to alma21's post in the thread free Starwind x Proxmox SAN storage plugin with Like.

Hi, I have installed and tested the free / open source Starwind Proxmox SAN storage plugin on a small testcluster (PVE 8) with shared block storage and it works really well .... thin provisioning, snapshots, thin disk auto extension, live...

-

I would want to emphasize a few points: This is especially relevant for Proxmox, since by default you will only with the small default 16k volblocksize. Instead of having a huge RAW VM disk, that is a pita to backup and has a 16k volblocksize...

-

Oh ok, that's fine. I could allocate ~6gb. Thanks, I think that's a key message: test and find out. PS for anyone else reading: I found this pretty helpful to explain the inner workings...

-

Red Squirrel replied to the thread Is there a mechanism that could cause a node to shutdown? The cluster itself has quorum but the 4th node did not. It's a 4-node cluster. I set one of the nodes to have 2 votes (this one never went down), as setting up a qdevice looks quite involved from what I read, and I plan to add a 5th node eventually...
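
The vote arithmetic in that setup can be sketched as follows. This is only an illustration, assuming the usual strict-majority rule (a partition is quorate when it holds more than half of all votes); the node names are hypothetical, and the vote counts follow the post (four nodes, one of them with 2 votes).

```python
def quorum_threshold(total_votes: int) -> int:
    """Smallest number of votes that is a strict majority of total_votes."""
    return total_votes // 2 + 1


def has_quorum(partition_votes: int, total_votes: int) -> bool:
    """True if a partition holding partition_votes is quorate."""
    return partition_votes >= quorum_threshold(total_votes)


# Hypothetical node names; one node carries 2 votes as described in the post.
votes = {"node1": 2, "node2": 1, "node3": 1, "node4": 1}
total = sum(votes.values())

# Suppose node4 loses contact with the other three nodes.
isolated = votes["node4"]
rest = total - isolated

print("total votes:", total)
print("votes needed:", quorum_threshold(total))
print("remaining three nodes quorate:", has_quorum(rest, total))
print("isolated node quorate:", has_quorum(isolated, total))
```

Under this assumption the three remaining nodes keep quorum while the single isolated node does not, which matches the situation described above.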

-

Darkangeel_hd replied to the thread Nested PVE (on PVE host) Kernel panic Host injected async #PF in kernel mode. Well... that was fast... It appears to make no difference

-

fstrankowski replied to the thread Is there a mechanism that could cause a node to shutdown? He said it doesn't. But first of all we need some basic information about that cluster overall :D

-

SteveITS replied to the thread Is there a mechanism that could cause a node to shutdown? Does it have a quorum? If not, the nodes will reboot.

-

fstrankowski replied to the thread Is there a mechanism that could cause a node to shutdown? I'm curious, do you know the basic requirements for a cluster to operate normally? What does your cluster look like? Please provide basic information about your whole topology. And please don't reply-reply-reply to your own topic, you can just edit...

-

fstrankowski replied to the thread Proxmox Backup Client aus einer VM. Try it with the IP of your PBS instead of the hostname.