Latest activity

-

mfederanko replied to the thread Mountpoints for LXC containers broken after update. It's recommended to use apt full-upgrade rather than apt upgrade; maybe just doing the full upgrade will resolve your issue. Does this also happen for new containers?

-

IsThisThingOn replied to the thread Guest agent not running on Windows 11. Today I had the issue again with 0.1.271. The strange thing is that it has a clean exit code. C:\Windows\System32>sc qc QEMU-GA [SC] QueryServiceConfig ERFOLG SERVICE_NAME: QEMU-GA TYPE : 10 WIN32_OWN_PROCESS...

-

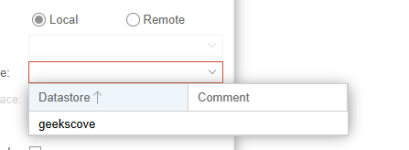

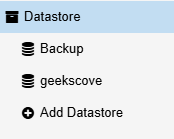

Keith Miller replied to the thread copy a vm backup from one data store to another. The GUI seems as cryptic as the console command. All I want to do is copy vm/106 from Backup to geekscove within the same data store, but when I try a pull in the GUI, the only item that shows is geekscove, not Backup.

-

cklahn replied to the thread JBOD mit ZFS. Thanks ;-). No, there wasn't another one plugged in yet. I'd just plug in a 2 TB disk for testing and try to add it with your command.

-

yvesp reacted to JeanL's post in the thread Convert licensed Mikrotik CHR Hyper-V to Proxmox with Like.

Hi, why not just transfer the license to the VM once it's on PVE? See: https://help.mikrotik.com/docs/spaces/ROS/pages/18350234/Cloud+Hosted+Router+CHR#CloudHostedRouter,CHR-LicenseTransfer Best regards,

-

kacper.adrianowicz replied to the thread Slow ceph operation. @aaron - I placed it on ceph-storage. What configuration should I check or change on the ceph-pool, VM config, etc. to make sure I'm using proper settings? I read about KRBD on the ceph-pool, write cache on the VM, disabling RAM ballooning and all the other...

-

aaron replied to the thread Slow ceph operation. Well, then the question is how fast that NAS can write the backup data. As @spirit mentioned, a slow backup target can have an impact on the running VM. The fleecing option in the backup job options can help if you place that on a fast storage in...

-

medievaltorture reacted to lk777's post in the thread [SOLVED] using wifi instead of ethernet with Like.

I am new to this forum. It would be nice if people shared their resolved configurations. My two cents. wifi setup iface enp4s0 inet manual auto vmbr0 iface vmbr0 inet static address 10.10.5.80/24 gateway 10.10.5.1...

-

ITMader replied to the thread Probleme mit VLANS / OPNSENSE. You've got the VLAN* interfaces there. Unfortunately I don't have OPNsense, so no 1:1 guide here, but this is what I mean: you have the interfaces of type VLAN --- (VLAN*)... You now need to assign these to a new interface, e.g. Name...

-

kacper.adrianowicz replied to the thread Slow ceph operation. @devaux The public and cluster networks currently use the same 10Gb NIC and the same VLAN. MTU on the NIC and switch is the default 1500 right now. Everything is connected to one switch, without MLAG, and yes, the VMs are also connected to the same aggregation switch...

-

Johannes S reacted to robertlukan's post in the thread PBS with a volume >100 TB — how do you handle verify? with Like.

Yes, it would give even better performance, but then I would need two disks and I'm running low on empty disk slots. So for now I will use a single disk as a cache disk.

-

Keith Miller posted the thread copy a vm backup from one data store to another in Proxmox Backup: Installation and configuration. How do I copy a VM backup from one datastore to a different one? I want to copy vm/106 from Backup to geekscove.

-

robertlukan replied to the thread PBS with a volume >100 TB — how do you handle verify? Yes, it would give even better performance, but then I would need two disks and I'm running low on empty disk slots. So for now I will use a single disk as a cache disk.

-

Johannes S replied to the thread PBS with a volume >100 TB — how do you handle verify? Ah, sorry, I didn't get the part about the caching. If this works for you, that's great. I would still expect a special device to give a further speedup.

-

Johannes S reacted to robertlukan's post in the thread PBS with a volume >100 TB — how do you handle verify? with Like.

I have added a cache device, not a special device. I found the explanation here: https://klarasystems.com/articles/openzfs-understanding-zfs-vdev-types/ Feel free to comment on this.

-

christophe reacted to t.lamprecht's post in the thread New Archive CDN for End-of-Life (EOL) Releases with Like.

Today, we announce the availability of a new archive CDN dedicated to the long-term archival of our old and End-of-Life (EOL) releases. Effective immediately, this archive hosts all repositories for releases based on Debian 10 (Buster) and older...

-

robertlukan replied to the thread PBS with a volume >100 TB — how do you handle verify? I have added a cache device, not a special device. I found the explanation here: https://klarasystems.com/articles/openzfs-understanding-zfs-vdev-types/ Feel free to comment on this.

-

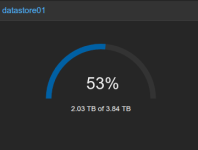

Dexter23 replied to the thread Script for monitor ZFS Usage. Regarding the "pvesm" command: is there an equivalent command on Proxmox Backup Server to retrieve this usage %, as the web UI shows it? UPDATE: I don't actually need a script for PBS as well, because PVE already monitors the space of the PBS storage.
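For the ZFS side of that thread, a usage check could be sketched in Python by parsing `zfs list` output. This is a minimal sketch, not Dexter23's script: it assumes `zfs list -H -p -o name,used,avail` (tab-separated, no header, exact byte counts) and approximates each dataset's capacity as used + avail, which may differ from the figure the PVE or PBS web UI reports. The function names `usage_percent` and `read_zfs_usage` are hypothetical.

```python
import subprocess


def usage_percent(zfs_list_output: str) -> dict:
    """Map each dataset name to used / (used + avail) as a percentage.

    Expects lines in the form produced by `zfs list -H -p -o name,used,avail`:
    name<TAB>used_bytes<TAB>avail_bytes (assumed format, see lead-in).
    """
    result = {}
    for line in zfs_list_output.strip().splitlines():
        name, used, avail = line.split("\t")
        used_b, avail_b = int(used), int(avail)
        total = used_b + avail_b  # approximation of dataset capacity
        result[name] = round(100 * used_b / total, 1) if total else 0.0
    return result


def read_zfs_usage() -> dict:
    """Run `zfs list` on the host (requires ZFS tools) and parse its output."""
    out = subprocess.run(
        ["zfs", "list", "-H", "-p", "-o", "name,used,avail"],
        capture_output=True,
        text=True,
        check=True,
    ).stdout
    return usage_percent(out)


if __name__ == "__main__":
    # Hypothetical sample values so the sketch runs without ZFS installed.
    sample = "rpool\t250\t750\nrpool/data\t100\t900\n"
    for name, pct in usage_percent(sample).items():
        print(f"{name}: {pct}% used")
```

On a real host, `read_zfs_usage()` would replace the sample string; a monitoring script could then compare each percentage against a chosen threshold.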

-

The makers of the helper scripts have surely taken that into account *cough*. Take a look at what they say on the topic of updates. I do wonder, though, what this topic is doing in the PBS forum.

-

kifeo posted the thread Cephfs : cannot mount external ceph cluster with non root path in Proxmox VE: Installation and configuration. I'm trying to mount an external Ceph cluster with CephFS for backups. I can mount CephFS with a path capability of /, but not with /some/path. It gives me: Feb 04 14:19:32 proxmox-bl2 kernel: libceph: client1148949 fsid...