Latest activity

-

p-user replied to the thread vm in HA that normally is off starts automatically at PVE reboot. Here's part of the syslog after the reboot (note: I put the HA state to ignored). It is VM 118 that gets started, although it was set to stop just before the reboot of the PVE node; note that VM 117 is always on and should be started...

-

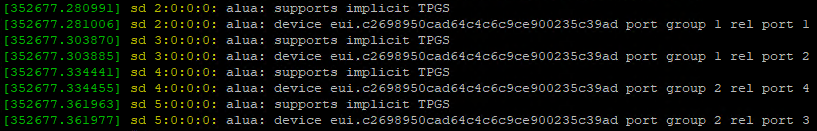

LG-ITI replied to the thread EFI Disk Timeout during Boot. My entire dmesg output is actually just these 8 messages repeating: I don't know if this points to an issue or if this is the expected behaviour for my multipath iSCSI connection. Edit: I've rebooted my secondary Proxmox Node in...

-

apoyen replied to the thread Issues with hot plug processors. Hello, it's because you're using this functionality the wrong way. You have to set the maximum number of vCPUs you want on your VM; this value is the total core count (sockets * cores). You need to restart to set this value. Next you activate the...

-

YaZoal replied to the thread Proxmox Install Failing on R740xd - PERC H730P. Hi, do you get the same error as in this thread: https://forum.proxmox.com/threads/opt-in-linux-6-17-kernel-for-proxmox-ve-9-available-on-test-no-subscription.173920/page-5

-

bbgeek17 replied to the thread [SOLVED] How to create a virtual machine on a ZFS storage device from the command line? Awesome, you can mark the thread solved by editing the first post and selecting the appropriate subject prefix. Cheers. Blockbridge : Ultra low latency all-NVME shared storage for Proxmox - https://www.blockbridge.com/proxmox

-

al.semenenko88 replied to the thread [SOLVED] How to create a virtual machine on a ZFS storage device from the command line? Thanks, it worked!

-

bbgeek17 replied to the thread [SOLVED] How to create a virtual machine on a ZFS storage device from the command line? If you want to create a new volume as part of VM creation, you specified the syntax wrong. From "man qm": Use the special syntax STORAGE_ID:SIZE_IN_GiB to allocate a new volume. It should be: data:vm-2114-disk-0:10 The command only accepts...

-

al.semenenko88 replied to the thread [SOLVED] How to create a virtual machine on a ZFS storage device from the command line? The correct command is: # qm create 2114 --name "mail-test" --memory 2048 --cores 2 --ostype l26 --scsi0 data:10 --net0 model=e1000,bridge=vmbr0,macaddr=00:50:56:92:7f:6a --scsihw virtio-scsi-pci
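
For readers scripting this, the STORAGE_ID:SIZE_IN_GiB syntax quoted from "man qm" can be sketched as a small Python helper that only builds the argument list and does not run anything. The function names `new_volume_spec` and `qm_create_args` are hypothetical, the option values are copied from the command in the post above, and the `macaddr` setting is left out for brevity.

```python
def new_volume_spec(storage_id: str, size_gib: int) -> str:
    """Build the STORAGE_ID:SIZE_IN_GiB string that asks qm to allocate a new volume."""
    if size_gib <= 0:
        raise ValueError("size must be a positive number of GiB")
    return f"{storage_id}:{size_gib}"


def qm_create_args(vmid: int, name: str, storage_id: str, size_gib: int) -> list[str]:
    """Assemble (but do not execute) a qm create argument list.

    Hypothetical helper: the flags and values mirror the command from the thread.
    """
    return [
        "qm", "create", str(vmid),
        "--name", name,
        "--memory", "2048",
        "--cores", "2",
        "--ostype", "l26",
        "--scsi0", new_volume_spec(storage_id, size_gib),
        "--net0", "model=e1000,bridge=vmbr0",
        "--scsihw", "virtio-scsi-pci",
    ]


if __name__ == "__main__":
    # Print the command line so it can be reviewed before running it on a PVE node.
    print(" ".join(qm_create_args(2114, "mail-test", "data", 10)))
```

Passing the list to `subprocess.run` on a PVE node would be the obvious next step, but that part is untested here and left to the reader.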

-

devops01 replied to the thread Proxmox VM Stun bei Snapshot. Support replied that VMs on NFS can freeze. For that reason, the "snapshot-as-volume-chain" feature was implemented in the new version. It is only a preview so far, but the first feedback has been consistently positive.

-

shanreich replied to the thread MTU on vm's suddenly changed when started on proxmox 9 host. This should already be the case - is there any possibility of re-checking whether the script did not output a warning in your case?

-

mfederanko replied to the thread EFI Disk Timeout during Boot. Thanks for bearing with me. Those units all look correct. Can you post your dmesg output? Ideally, attach it as a text file.

-

advancer replied to the thread Can't get AMD pstate to work. It used to be fine. By the way, I'm using an ASUS Pro B550M-C/CSM with a Ryzen 4650G.

-

advancer replied to the thread Can't get AMD pstate to work. It used to be fine. I think ASRock is a sister company of ASUS, but there may of course still be differences. By the way, the installation of 6.8 was easy, but of course it needs a reboot. I temporarily added the repo for Proxmox 8 to a new file...

-

Keith Miller replied to the thread Unable to add back a Datastore. This is all I see. This is the current datastore config. The file I am trying to use is the one called Backup.

-

al.semenenko88 posted the thread [SOLVED] How to create a virtual machine on a ZFS storage device from the command line? in Proxmox VE: Installation and configuration.

    # zfs list
    NAME                     USED   AVAIL  REFER  MOUNTPOINT
    data                     418G   241G   144K   /data
    data/subvol-2109-disk-0  430M   15.6G  430M   /data/subvol-2109-disk-0
    data/vm-2026-disk-0      3.07M  241G   76K    -
    ...

-

c10l replied to the thread Can't get AMD pstate to work. It used to be fine. Not yet. I have Home Assistant and the NAS on this server, so I need to find some time when I can reboot without affecting others. My mobo is ASRock though, not ASUS.

-

goxxy reacted to Tomahawk's post in the thread 845 Failed to run lxc.hook.pre-start for container with

Like.

I found the root cause: I restored the LXC on a different machine on which the attached disk had not been mounted (mp0: /mnt/pve/smb/share,mp=/mnt/share), and that caused the issue. Once I removed it, it works fine.

-

wrre replied to the thread Avoid Reuse of VMID. @fabian We are currently building a Proxmox ManageIQ Provider together with the ManageIQ Team. But once again we face the issue of reusing the VMIDs. As you mentioned above, something outside of PVE should keep track of it. But PVE does not...

-

news replied to the thread [SOLVED] Upgraded from 7.4 to 8.4 - zpool upgrade safe, what do I need to check? I have run Proxmox VE and BS with ZFS for some years and had no problems with internal ZFS upgrades. But not all my desktop systems run the ZFS version needed to access the newer ZFS disks. Your way is to go to Proxmox VE 9.x; then this is only one step.

-

Ronny Aasen posted the thread MTU on vm's suddenly changed when started on proxmox 9 host. in Proxmox VE: Networking and Firewall. Hello. I have solved my issue by setting mtu=1500 on all NICs in all VM *.conf files, using sed inline. This is just to understand whether there is something strange in my environment or whether this is expected. If a VM, started on a Proxmox 8.x...
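
The post does not show the sed expression that was used, so the following is only a rough Python sketch of the same idea: forcing `mtu=1500` on every `netN:` line of a VM config text. The function name `set_mtu` and the sample config line are assumptions for illustration, and the sketch also rewrites `netN:` lines inside snapshot sections, which may or may not be what you want.

```python
import re

# Matches lines such as "net0: virtio=...,bridge=vmbr0" in a VM config.
NET_LINE = re.compile(r"^(net\d+):\s*(.*)$")


def set_mtu(conf_text: str, mtu: int = 1500) -> str:
    """Return conf_text with mtu=<mtu> set on every netN line (hypothetical helper)."""
    out = []
    for line in conf_text.splitlines():
        m = NET_LINE.match(line)
        if m:
            # Drop any existing mtu= option, then append the requested value.
            opts = [o for o in m.group(2).split(",") if o and not o.startswith("mtu=")]
            opts.append(f"mtu={mtu}")
            line = f"{m.group(1)}: {','.join(opts)}"
        out.append(line)
    # Preserve a trailing newline if the input had one.
    return "\n".join(out) + ("\n" if conf_text.endswith("\n") else "")


if __name__ == "__main__":
    sample = "memory: 2048\nnet0: virtio=AA:BB:CC:DD:EE:FF,bridge=vmbr0\n"
    print(set_mtu(sample), end="")
```

Working on a copy of the config text first, and diffing the result before writing anything back, keeps the change easy to review.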