Latest activity

-

Bluemeus reacted to news's post in the thread ZFS-ERROR Live-Migration: cannot create snapshot: out of space with Like.

You have a "disk" nvme-2tb/vm-130-disk-1; this is a ZFS volume which uses "referenced 443G". The ZFS snapshot nvme-2tb/vm-130-disk-1@__replicate_130-0_1766276210__ will then also use "referenced 443G". In total, with all additional zfs...


Bluemeus reacted to news's post in the thread ZFS-ERROR Live-Migration: cannot create snapshot: out of space with Like.

Can you please run this so I can get a clearer picture: zfs list -t snapshot,filesystem -r nvme-2tb/vm-130-disk-1


Bluemeus reacted to news's post in the thread ZFS-ERROR Live-Migration: cannot create snapshot: out of space with Like.

Hello, I would clean up / delete the ZFS snapshots from both disks:
* 'nvme-2tb:vm-130-disk-0'
* 'nvme-2tb:vm-130-disk-1'
zfs list -t snapshot -r nvme-2tb/vm-130-disk-0
zfs list -t snapshot -r nvme-2tb/vm-130-disk-1
zfs destroy -r...


Falk R. replied to the thread Proxmox Cluster + Storage Best Practices. You'll have to check with Pure on that. I don't have access, because everything is behind the paywall. With ZFS over iSCSI you can make a ZFS pool accessible over iSCSI, but for that you need a storage system that works with ZFS. With shared storage on the...


Bluemeus replied to the thread ZFS-ERROR Live-Migration: cannot create snapshot: out of space. This worked for me. But the disk was also not as empty as I expected. The extra space was being used because the filesystems had not been trimmed. So I activated the Discard function on every disk of every VM and trimmed after that. Now the space was reclaimed...

-

TErxleben replied to the thread [SOLVED] Wie kann es sein, dass. Sure. I'm not deleting things mindlessly. But /var/cache/ is by definition extremely volatile, and it should always be possible to delete its contents. Often a folder is created only during installation, and its existence is never again...

-

yourfate posted the thread ZFS bug causing kernel panic when accessing snapshots - fix in OpenZFS v2.4.0, is there a simple upgrade? in Proxmox VE: Installation and configuration. Since Proxmox upgraded to ZFS 2.3.4, I have been having problems accessing snapshots: it causes kernel panics, making the access hang indefinitely. Part of my backup strategy is automated backups for container snapshots, but this is broken by this...

-

Onslow replied to the thread [SOLVED] Accidentally increased size of a disk for a client. How do I decrease?. So they use the same units, fine. Anyway, don't remove the full 200 GiB, just in case. You'll be trying to decrease it further later anyway. Not really. The data is still there. But as I wrote, don't start it. Yes, that's what I wrote. I'm a little...

-

peaksteve reacted to them00n's post in the thread Issue on nodes pvestatd[616102]: storage 'PROXMOX_SSD_1' is not online with Like.

We found an issue on our network, an IP address conflict; it seems that we have solved this for now.


zhdi replied to the thread SSH failing to start at Boot Time of Fedora LXC Container. I have exactly the same issue on an LXC container; my manager is Incus, but it is roughly the same issue. The current workaround: it appears to work if I disable --now sshd.socket. I don't know why, and the systemd debug log didn't give me enough clues to figure it...

-

jaminmc replied to the thread Opt-in Linux 6.17 Kernel for Proxmox VE 9 available on test & no-subscription. Do you know whether you are using Grub or Systemd to boot? You may want to check https://forum.proxmox.com/threads/update-to-version-9-fail-systemd-boot.172356/post-813782. If you are not using ZFS for your root drive, then it should be using Grub...

-

fstrankowski reacted to KemMuammer's post in the thread proxmox after restart does not start anymore and is stuck at hugepage with Like.

So, I have tried that and also swapped in an AMD card I had lying around. Nothing: it kind of went through and then the screen went black, and I could not input anything.


I have tested a bit more. You are totally right. The command should be without the trailing "/":
lxc.cgroup2.devices.allow: c 189:* rwm
lxc.mount.entry: /dev/bus/usb/003/002 dev/bus/usb none bind,optional,create=file
The first...

-

TErxleben replied to the thread [SOLVED] Wie kann es sein, dass. Now I have gathered all my courage, emptied the cache folder completely, and rebooted. All VMs/LXCs are reachable. The web GUI, however, is dead, even though nmap shows 8006 as "open". I always run purge and the like anyway...

-

R.Warps posted the thread [SOLVED] sync job failing "connection reset" in Proxmox Backup: Installation and configuration. Hello, I have 3 Proxmox Backup Servers, one main and two remotes. The remotes share the same hardware and are configured the same. One of the remotes has recently been moved off site to replace an existing remote PBS. Prior to the move sync jobs...

-

struppu replied to the thread [SOLVED] Problem backing up container. The directory is empty - see output below
ls -al /mnt/vzsnap0/
total 8
drwxr-xr-x 2 root root 4096 Dec 30 00:16 .
drwxr-xr-x 3 root root 4096 Dec 29 23:53 ..
config for container 101
pct config 101
arch: amd64
cores: 2
features: nesting=1...

-

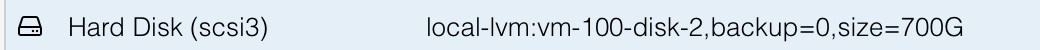

gctwnl replied to the thread [SOLVED] Accidentally increased size of a disk for a client. How do I decrease?. Thanks for helping out, @Onslow. On PVE, lvdisplay says about the device:
--- Logical volume ---
LV Path /dev/pve/vm-100-disk-2
LV Name vm-100-disk-2
VG Name pve
LV UUID...

-

aaron replied to the thread [SOLVED] Wie kann es sein, dass. TIL about gdu. Noticeably faster than ncdu. :) @TErxleben depending on how old the host is and what was installed on it besides PVE at some point, an apt autoremove and apt purge can sometimes do some good too. Old kernels as well...


aaron reacted to robm's post in the thread VM live migration issue - api error (status = 400: api error (status = 596: )) with Like.

Thanks for the tip. We added the IPs of the clusters to the firewall, and it worked! Just had to map the server names to the private network in /etc/hosts to force the migration over the PVN, and use detailed mapping, and everything went as...


tmnr008 replied to the thread SPICE without having to download a VV file?. I don't have a problem opening the .VV file; I am looking for a way to avoid having to click the 'SPICE' button in the Proxmox UI. I was hoping for a way similar to RDP: type in the IP address and connect.