Latest activity

-

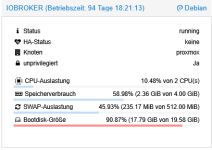

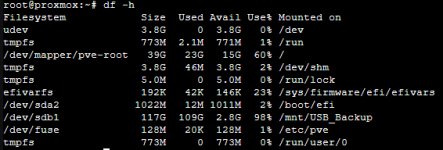

Ernst T. replied to the thread Speicherverteilung (Bootdisk-Größe). The container does not do that by itself. You can, however, increase the size manually in the GUI, even without a restart .... Or try deleting the npm cache and reducing the journal log.

-

1150d posted the thread cluster add node replacing qdevice in Proxmox VE: Installation and configuration. Hi, I think I may have made a mistake: I'm running a cluster with two nodes and a qdevice on an additional Raspberry. To this, I have now added a third node. The joining of the third node was successful. But "pvecm status" gives this output...

-

UdoB replied to the thread New Proxmox box w/ ZFS - R730xd w/ PERC H730 Mini in “HBA Mode” - BIG NO NO?. You may read man zpool-import - there are some options to find a missing pool by searching for devices and also to import a "destroyed" pool (for example). Of course most of those commands are dangerous, but you don't have actual data on those...

-

jsalas424 posted the thread Backup Failure After Debian LXC Upgrade in Proxmox VE: Installation and configuration. I recently upgraded my Debian LXC running DNS and backups have started to fail. Here's a writeup of the investigation. What is the right way to address this? PVE 8.4.13 Issue: Automated backups of LXC container 101 (AdGuard2, Debian 11) started...

-

dompro replied to the thread Move Disk hangs forever in version 8.2.4. Is it possible that LVM does not take VMDKs? Just tried to create a 1GB vmdk on a test VM and it says: failed to update VM 108: unsupported format 'vmdk' at /usr/share/perl5/PVE/Storage/LVMPlugin.pm line 697. (500) Now the question is, if I...

-

fertig replied to the thread New Proxmox box w/ ZFS - R730xd w/ PERC H730 Mini in “HBA Mode” - BIG NO NO?. This is a pool with 8 600GB SAS 15k disks (which are leftover, I'd prefer higher capacity, it's just for backup). On the other node the pool is just "not there" after reboot. I tried a lot of force imports, pointed the zpool import to the...

-

UdoB replied to the thread Move Disk hangs forever in version 8.2.4. I am not convinced! 4 binary TB (correct: 4 TiB) is 4398046511104 bytes. And it is 4294967296 kilobytes (KiB). The last one is possibly used to wrongly show 4.2 TB...
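The arithmetic in this reply can be checked with a short sketch. It only reproduces the two numbers quoted above; the idea that a tool displays the KiB count as if it were a TB figure is the poster's guess, not a confirmed cause, and the variable names are chosen here for illustration.

```python
# Reproduce the figures from the reply: 4 TiB expressed in bytes and in KiB.
TIB = 2**40  # bytes per tebibyte
KIB = 2**10  # bytes per kibibyte

size_bytes = 4 * TIB
size_kib = size_bytes // KIB

# The same size in decimal terabytes (10**12 bytes).
size_tb_decimal = size_bytes / 10**12

# Assumption for illustration: if the KiB count were scaled as though it
# were a byte-derived value, its leading digits would read as roughly 4.29.
misread_value = size_kib / 10**9

print(size_bytes)       # 4398046511104
print(size_kib)         # 4294967296
print(size_tb_decimal)  # about 4.398
print(misread_value)    # about 4.295
```

So 4 TiB is about 4.4 TB in decimal units, while the leading digits of the KiB count happen to read as 4.29, which is close to the "4.2 TB" at which the transfer was reported to stop.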

-

dompro replied to the thread Move Disk hangs forever in version 8.2.4. On this NAS are multiple VMDK disks over 4TB. Moved there with VMware. That makes me confident that the NAS does not have an issue with files over 4TB. And the PURE-Fibre-Thick-LVM volumes also have multiple disks over 4TB. It is a bit curious...

-

xmoxer replied to the thread Severe system freeze with NFS on Proxmox 9 running kernel 6.14.8-2-pve when mounting NFS shares. Hi! I have the same problem and it looks like I found a solution. NFS shares from the TrueNAS SCALE virtual machine are mounted to an unprivileged LXC via the host. In /etc/fstab, I added vers=3 and lookupcache=none to the NFS parameters. One day...

-

UdoB replied to the thread Move Disk hangs forever in version 8.2.4. Perhaps a maximum filesize limit is hit somewhere? Compare: https://en.wikipedia.org/wiki/Comparison_of_file_systems#Limits

-

news replied to the thread Move Disk hangs forever in version 8.2.4. Ask your NAS again and check a big file transfer from your desktop PC to your NAS. Maybe you have a big video file? I use e.g. rsync to my ZFS NAS based on Proxmox VE and store all my raw video files there.

-

dompro replied to the thread Move Disk hangs forever in version 8.2.4. Hey, did you ever find a solution for that? Because I'm just stuck with a pretty similar issue. After exactly 4.2TB the transfer seemingly stops. Going from 400mb/s from the NAS NFS share to 0.

-

uzumo reacted to jaminmc's post in the thread Hookscript with 'post-stop' when the VM was shutdown from the VM itself with Like.

I encountered a similar issue. I desired to execute a hook script that would reinitialize the AMD GPU after a virtual machine (VM) shutdown. The script functioned correctly when the VM was shut down via the web interface. However, if I initiated...

-

noidea replied to the thread Speicherverteilung (Bootdisk-Größe). Thank you very much for your reply. I can't quite get my head around the whole thing. So it is not like on Windows, where I permanently assign, e.g., 20GB to a partition; instead, in this case I have, e.g., the container vm-106-disk-0...

-

Bamsefar posted the thread Use USB for network (without a USB to Ethernet dongle) - is it possible? in Proxmox VE: Networking and Firewall. So I am thinking of creating a Proxmox cluster, three (3) nodes, and using a direct network between the nodes (no switch). Now that requires two network ports dedicated just for this. Plus the normal ports. I don't think I will be able to get small...

-

UdoB replied to the thread New Proxmox box w/ ZFS - R730xd w/ PERC H730 Mini in “HBA Mode” - BIG NO NO?. That's surprising and not expected, of course. How did you create that pool? What does "zpool status" show before reboot and "zpool import" after it? We are talking "write cache", right? It tells ZFS that data has been written to disk while it...

-

miro-zamiro replied to the thread PGs incomplete. I managed to fix it using these steps: https://medium.com/opsops/recovering-ceph-from-reduced-data-availability-3-pgs-inactive-3-pgs-incomplete-b97cbcb4b5a1 DO THAT ONLY IF INACTIVE PGs ARE EMPTY (MEANING OBJECTS = 0, seen using command ceph pg...

-

fertig replied to the thread New Proxmox box w/ ZFS - R730xd w/ PERC H730 Mini in “HBA Mode” - BIG NO NO?. This is exactly my hardware, but I did not disable the cache. The zpool completely disappears after each reboot. Is this the cache? Do I need to disable it?