I have just noticed some strange, repeating patterns in the resource graphs on my host and VMs / CTs. To start with, the following are from the host machine:

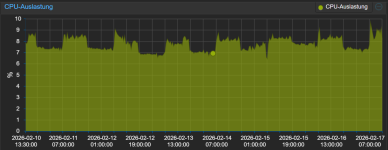

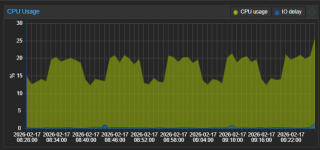

CPU:

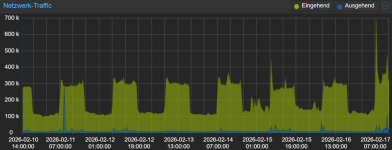

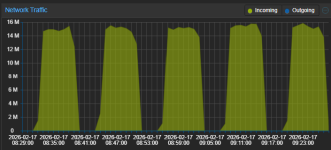

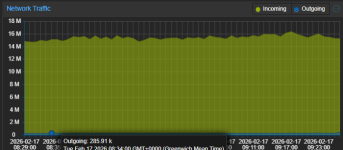

Net:

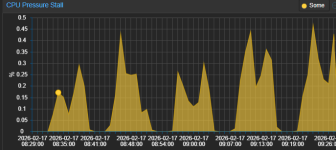

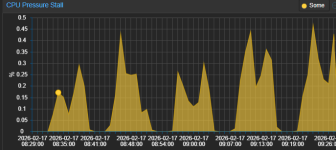

CPU Pressure Stall:

You can start to see a bit of a pattern on the CPU. Nothing too odd so far...

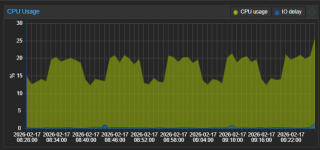

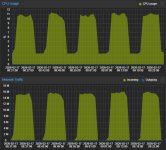

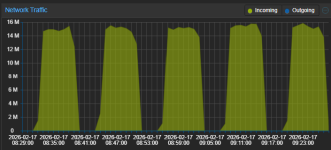

Now this is from a Linux CT. I have two CTs that look exactly like the following, and two that do not.

CPU is quite a constant, low, flat graph.

Net:

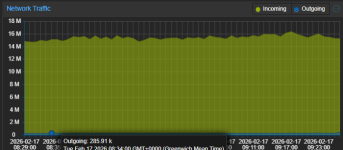

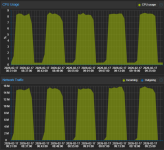

And this is from Linux VM 1:

And this is from Linux VM 2, completely separate from the above machine:

There are two things really confusing me here.

A) What is causing the odd resource spikes on any one VM / CT, taken in isolation? These particular VMs and CTs are quite idle at the moment. I could dig into top etc., but I suspect something broader than what is going on within any one VM / CT...

B) Why do multiple, seemingly unrelated VMs AND CTs show (almost) identical graphs? This is really puzzling me, to the point that I suspect a PVE graphing issue, as I am pretty sure each VM is not seeing spikes of 14M network traffic (based on stats from elsewhere on the network)...

And for reference, here are a couple of Windows VMs which are being quite well-behaved:

I am not experiencing any specific issues (performance, etc), but I would like an explanation for this odd resource stats reporting behaviour!
