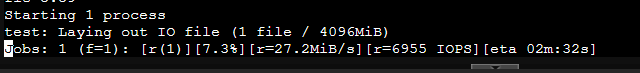

I've been working on setting up a high-performance NAS to host storage for some VMs/LXCs and Windows PCs. The Windows PCs get 200k+ IOPS through the 56G InfiniBand link, but for some reason my Proxmox node is only getting 6000 (according to fio):

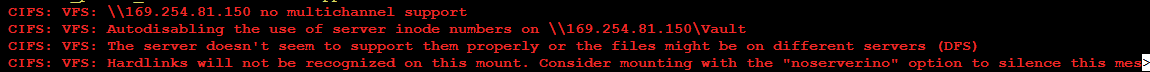

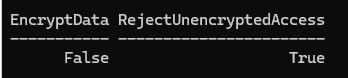
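To put the two IOPS figures side by side, here is a rough sketch that converts them into per-I/O latency and throughput using Little's law (latency = outstanding I/Os / IOPS). The queue depth and block size are assumptions for illustration only, since the actual fio parameters are only visible in the screenshots; swap in the real values.

```python
# Rough conversion of IOPS figures into latency and throughput.
# QUEUE_DEPTH and BLOCK_SIZE are ASSUMED values, not taken from the fio runs above.

QUEUE_DEPTH = 32      # assumption: total outstanding I/Os (iodepth * numjobs)
BLOCK_SIZE = 4096     # assumption: 4 KiB random I/O


def per_io_latency_us(iops: float, queue_depth: int) -> float:
    """Little's law: mean latency = outstanding I/Os / throughput (in microseconds)."""
    return queue_depth / iops * 1_000_000


def throughput_mib_s(iops: float, block_size_bytes: int) -> float:
    """Bandwidth implied by an IOPS figure at a given block size (in MiB/s)."""
    return iops * block_size_bytes / (1024 * 1024)


if __name__ == "__main__":
    for label, iops in (("Proxmox", 6_000), ("Windows", 200_000)):
        latency = per_io_latency_us(iops, QUEUE_DEPTH)
        bandwidth = throughput_mib_s(iops, BLOCK_SIZE)
        print(f"{label}: {latency:.1f} us per I/O, {bandwidth:.2f} MiB/s")
```

If those assumptions are anywhere near right, neither figure comes close to saturating a 56G or 40G link, which would suggest the Proxmox side is limited by per-request latency rather than raw bandwidth.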

I'm using fstab to mount the share via the Windows Server's InfiniBand interface IP (i.e. \\169.254.81.150\sharename). I also tried hooking up a 40GbE link directly between the Proxmox node and the Windows Server, but I haven't been able to get it working correctly: a plain ping to the 40G interface IP on the Windows machine (169.254.82.151) tells me the host isn't reachable, but if I use ping with the -I option and specify the 40G interface, it works just fine.

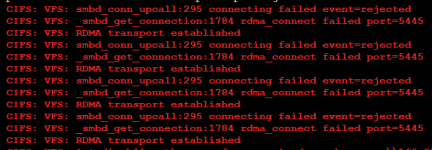

I tried to set up SMB Multichannel, but I have no idea how to make it work on Proxmox. I looked into mounting via hostname and handling that with DNS, but mainly because of the aforementioned issue where Proxmox can't "normally" reach the 40G Ethernet interface (and being somewhat lost/in over my head), I ended up giving up on it.

Diagram of my network topology:

The 40GbE / multichannel setup was mostly an attempt to troubleshoot the connection, since there aren't really any tools for testing IB connection performance between Windows and Linux (iperf doesn't work very well for RDMA, in my experience). However, even with the IB interface disabled on Proxmox and the fstab mount pointed at the 40GbE interface (169.254.82.151), it still doesn't mount or ping. The subnets are different because I read that this is recommended for SMB Multichannel on Linux. I also wanted to use the 40GbE link as a backup bridge in case I can't use IB in a VM.
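One thing worth ruling out is whether the two addresses really end up in different subnets. Both sit inside 169.254.0.0/16, the IPv4 link-local block, so the answer depends entirely on the prefix length configured on each interface. The sketch below is only a check under assumed masks (/16 and /24 are guesses, the real masks are not stated here); it uses Python's standard `ipaddress` module.

```python
import ipaddress

# Addresses from the post; the prefix lengths tried below are ASSUMPTIONS.
IB_IP = "169.254.81.150"    # Windows Server InfiniBand interface
ETH_IP = "169.254.82.151"   # Windows Server 40GbE interface


def network_of(ip: str, prefix_len: int) -> ipaddress.IPv4Network:
    """Return the network an address belongs to for a given prefix length."""
    return ipaddress.ip_interface(f"{ip}/{prefix_len}").network


def same_subnet(ip_a: str, ip_b: str, prefix_len: int) -> bool:
    """True if both addresses fall in the same network at this prefix length."""
    return network_of(ip_a, prefix_len) == network_of(ip_b, prefix_len)


if __name__ == "__main__":
    for prefix in (16, 24):
        verdict = "same subnet" if same_subnet(IB_IP, ETH_IP, prefix) else "different subnets"
        print(f"/{prefix}: {network_of(IB_IP, prefix)} vs {network_of(ETH_IP, prefix)} -> {verdict}")
```

With a /24 mask the two interfaces are on separate networks, but with a /16 mask they share one. If any interface on the Proxmox side is using /16, the kernel could be choosing the wrong outgoing interface for 169.254.82.151, which would be consistent with a plain ping failing while ping -I works. That is a hypothesis to check against the actual interface configuration and routing table, not a confirmed diagnosis.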

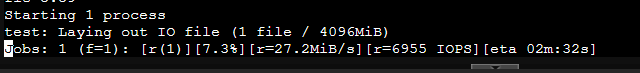

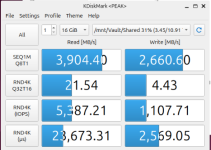

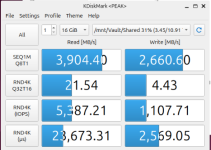

I tested the SR-IOV (ETH only) performance in an Ubuntu VM. It was able to talk to the Windows Server over the 40GbE link out of the box, but it showed similarly bad results:

(Sanity-tested on a Windows 11 client immediately afterwards, and it's still showing 240k+ IOPS.)

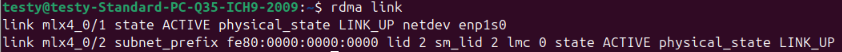

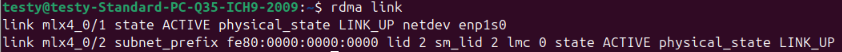

The RDMA link status shows both as Active with Link up, on both the Proxmox host and the VM:

I know this is a lot of text, but tl;dr: how do I figure out where my network IOPS are going between Proxmox and the Windows Server?

Any help is much appreciated in advance <3
