Hi there,

I got a new HP DL380 Gen10 Plus with four 3.84 TB SSDs in a RAID 10 configuration on a MegaRAID MR416i-a Gen10+ controller.

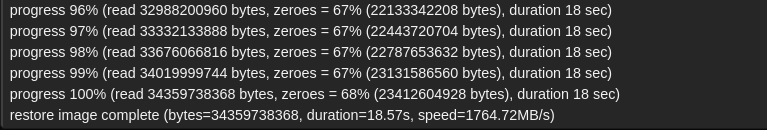

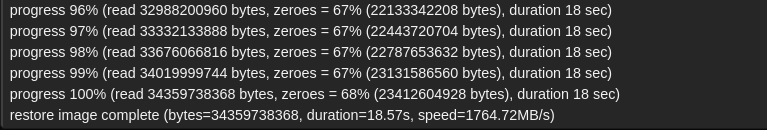

I tried to restore a VM from my Proxmox Backup Server and it got stuck at 100%.

I waited over an hour and it is still stuck there!

Restoring VMs from the same PBS on other PVE nodes works as usual, with no issues at all.

Any ideas? All nodes (PVEs and PBS) are on a 10 Gb network.

There are no errors during boot (no odd dmesg messages about the MegaRAID controller):

[ 1.556571] megaraid_sas 0000:86:00.0: BAR:0x0 BAR's base_addr(phys):0x00000000d5000000 mapped virt_addr:0x00000000e64da170

[ 1.556577] megaraid_sas 0000:86:00.0: FW now in Ready state

[ 1.556579] megaraid_sas 0000:86:00.0: 63 bit DMA mask and 63 bit consistent mask

[ 1.556872] megaraid_sas 0000:86:00.0: firmware supports msix : (128)

[ 1.557268] megaraid_sas 0000:86:00.0: requested/available msix 32/32 poll_queue 0

[ 1.557276] megaraid_sas 0000:86:00.0: current msix/max num queues : (32/24)

[ 1.557278] megaraid_sas 0000:86:00.0: RDPQ mode : (enabled)

[ 1.557280] megaraid_sas 0000:86:00.0: Current firmware supports maximum commands: 5101 LDIO threshold: 0

[ 1.568765] megaraid_sas 0000:86:00.0: Performance mode :Balanced (latency index = 8)

[ 1.568768] megaraid_sas 0000:86:00.0: FW supports sync cache : Yes

[ 1.568771] megaraid_sas 0000:86:00.0: megasas_disable_intr_fusion is called outbound_intr_mask:0x40000009

[ 1.653488] megaraid_sas 0000:86:00.0: FW supports atomic descriptor : Yes

[ 1.716486] megaraid_sas 0000:86:00.0: FW provided supportMaxExtLDs: 1 max_lds: 240

[ 1.716498] megaraid_sas 0000:86:00.0: controller type : MR(4096MB)

[ 1.716503] megaraid_sas 0000:86:00.0: Online Controller Reset(OCR) : Enabled

[ 1.716507] megaraid_sas 0000:86:00.0: Secure JBOD support : Yes

[ 1.716511] megaraid_sas 0000:86:00.0: NVMe passthru support : Yes

[ 1.716515] megaraid_sas 0000:86:00.0: FW provided TM TaskAbort/Reset timeout : 6 secs/60 secs

[ 1.716520] megaraid_sas 0000:86:00.0: JBOD sequence map support : Yes

[ 1.716523] megaraid_sas 0000:86:00.0: PCI Lane Margining support : Yes

[ 1.737848] megaraid_sas 0000:86:00.0: NVME page size : (4096)

[ 1.738207] megaraid_sas 0000:86:00.0: megasas_enable_intr_fusion is called outbound_intr_mask:0x40000000

[ 1.738209] megaraid_sas 0000:86:00.0: INIT adapter done

[ 1.740653] megaraid_sas 0000:86:00.0: Snap dump wait time : 15

[ 1.740658] megaraid_sas 0000:86:00.0: pci id : (0x1000)/(0x10e2)/(0x1590)/(0x032a)

[ 1.740659] megaraid_sas 0000:86:00.0: unevenspan support : no

[ 1.740660] megaraid_sas 0000:86:00.0: firmware crash dump : no

[ 1.740661] megaraid_sas 0000:86:00.0: JBOD sequence map : enabled

[ 1.740748] megaraid_sas 0000:86:00.0: Max firmware commands: 5100 shared with default hw_queues = 24 poll_queues 0

Still, I'm quite sure it's related to this kind of RAID controller in some way.
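For anyone who wants to double-check a boot log the same way, here is a minimal sketch that scans saved dmesg text for lines from one driver that contain a trouble keyword. The function name `suspicious_lines` and the keyword list are my own assumptions, not anything from the driver or from Proxmox, and the list is deliberately short so that benign lines such as the "TaskAbort/Reset timeout" one above are not flagged.

```python
import re

# Assumed, non-exhaustive keyword list; adjust to taste.
# "reset" and "timeout" are left out on purpose because the driver
# prints them in harmless informational lines during init.
TROUBLE = re.compile(r"\b(error|fail(?:ed|ure)?|fault|timed out|hung)\b",
                     re.IGNORECASE)


def suspicious_lines(dmesg_text, driver="megaraid_sas"):
    """Return the lines that mention `driver` and match a trouble keyword."""
    hits = []
    for line in dmesg_text.splitlines():
        if driver in line and TROUBLE.search(line):
            hits.append(line)
    return hits


if __name__ == "__main__":
    import sys

    # Usage (hypothetical file name): dmesg > boot.log; python3 scan.py boot.log
    if len(sys.argv) > 1:
        with open(sys.argv[1], encoding="utf-8", errors="replace") as fh:
            for hit in suspicious_lines(fh.read()):
                print(hit)
```

An empty result only means none of these keywords showed up for that driver; it does not prove the controller is healthy.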

- PVE 9.1.4 running kernel: 6.17.4-2-pve

- PBS 4.1.1 running kernel: 6.17.4-2-pve

- enterprise repo enabled on both hosts

- DL380GEN10+ : updated with latest SPP from December 2025

UPDATE: SOLVED. This crap LSI controller does not work well with two arrays in place:

- 4 SAS SSDs in RAID 10 (12 Gb/s)

- 2 SATA SSDs in RAID 1 (6 Gb/s)

I removed the second array and now the restore works fine.

I will remove the controller and install a P408i instead.
