Hey there! Welcome to the forum.

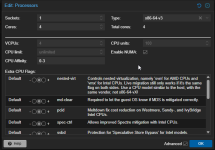

For CPU affinity, you can set the cores in the Processors options under Advanced (here I pinned my 4 vCPUs to cores 0-3).

Normally, though, if you enable NUMA, the VM stays on one NUMA node and takes all of its vCPU cores from that single node.

It will also allocate the RAM only on this single NUMA node, if possible.

Find out which CPUs you need with:

numactl --hardware
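If numactl is not installed on the host, the same core-to-node mapping can usually be read from sysfs. This is just a minimal sketch: list_node_cpus is a helper name I made up, and the optional directory argument only exists so the function can be tried against a copy of the tree.

```shell
#!/bin/sh
# Print the CPU list of every NUMA node the kernel exposes in sysfs.
# The optional first argument overrides the sysfs directory (assumption: handy for testing).
list_node_cpus() {
    base="${1:-/sys/devices/system/node}"
    for dir in "$base"/node[0-9]*; do
        # Skip anything without a readable cpulist (also covers an unmatched glob).
        [ -r "$dir/cpulist" ] || continue
        printf '%s: %s\n' "${dir##*/}" "$(cat "$dir/cpulist")"
    done
}

list_node_cpus
```

Each output line is a node name followed by its CPU range, so you can check that the cores you pinned all sit on the same node.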

Also set the CPU Flag (if supported, not sure if really necessary):

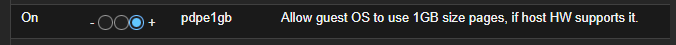

Hugepages are another story.

Those can't be configured through the UI at the moment.

You need to set them per VM with:

qm set <VMID> --hugepages=1024

=> This sets 1 GiB hugepages (the value is the page size in MiB).

qm set <VMID> --keephugepages=0

=> 0 is already the default. As long as the QEMU process is running and the VM is only soft-restarted (without ending the QEMU process), the hugepages stay reserved. If you set this to 1, the hugepages are not freed even after a full VM shutdown, i.e. when the QEMU process ends.

Interleave and bind behave much the same when you bind to a single NUMA node. Bind forcibly locks the memory onto that NUMA node, while interleave works more like round-robin and distributes it across every host node you define. => We only define one, so it does not matter.

I would still prefer "bind".

"preferred" would allow a fallback to the second NUMA node, but then you could end up with some RAM on node 0 and some on node 1. => Bottleneck

qm set <VMID> --numa0=cpus=0-3,hostnodes=0,memory=65536,policy=bind

=> hostnodes defines the physical NUMA node to lock onto. Crucial: make sure this matches the NUMA node where your pinned CPU cores (from the affinity setting) are actually located!

=> memory needs to match the total memory of the VM (65536 MiB here), since you are forcing 100% of the RAM onto this single NUMA node to prevent cross-socket latency.

=> cpus is just the vCPUs inside the VM that you want to map to this NUMA node. Since you only have one guest node, it should cover all vCPUs (e.g. 0-3 for a 4-core VM, 0-7 for an 8-core VM and so on).
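To keep the three values consistent, you could build the numa0 string from the VM's vCPU count, host node and memory. A small sketch, not an official tool: numa0_spec is a made-up helper, VMID 100 is a placeholder, and the command is only printed, not executed.

```shell
#!/bin/sh
# numa0_spec VCPUS HOSTNODE MEM_MIB
# Builds the value for --numa0 so that cpus covers all vCPUs and memory
# equals the full VM memory, as described above. Hypothetical helper.
numa0_spec() {
    vcpus=$1 hostnode=$2 mem_mib=$3
    if [ "$vcpus" -gt 1 ]; then
        cpus="0-$(( vcpus - 1 ))"
    else
        cpus="0"
    fi
    printf 'cpus=%s,hostnodes=%s,memory=%s,policy=bind\n' "$cpus" "$hostnode" "$mem_mib"
}

vmid=100   # placeholder VMID, replace with your own
# Dry run: print the command so you can review it before running it yourself.
echo "qm set $vmid --numa0=$(numa0_spec 4 0 65536)"
```

For the 4-vCPU, 64 GiB example this prints the same command as above, and for an 8-core VM you would only change the first argument to 8.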

All this can be found here:

https://pve.proxmox.com/pve-docs/chapter-qm.html#qm_cpu

Just a few hints:

Keep in mind that the VM can fail to start if that many hugepages cannot be allocated.

You may need to tweak the host to reserve them at boot, so that the VM can safely start at any time.

=> These kernel parameters should do it (where to add them depends on your installation): default_hugepagesz=1G hugepagesz=1G hugepages=64
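The 64 comes from 65536 MiB of VM memory divided by 1024 MiB per page. Here is a rough sketch for doing that calculation and comparing it with the free 1 GiB pages on one node. Both function names are made up; the sysfs path is the standard per-node hugepages location, and the optional third argument only exists for testing.

```shell
#!/bin/sh
# hugepages_needed MEM_MIB PAGE_MIB: pages required to back MEM_MIB, rounded up.
hugepages_needed() {
    echo $(( ($1 + $2 - 1) / $2 ))
}

# free_hugepages_on_node NODE PAGE_KB [BASE]: free pages of that size on one
# NUMA node, or 0 if the kernel does not expose that page size there.
free_hugepages_on_node() {
    f="${3:-/sys/devices/system/node}/node$1/hugepages/hugepages-${2}kB/free_hugepages"
    if [ -r "$f" ]; then cat "$f"; else echo 0; fi
}

need=$(hugepages_needed 65536 1024)        # 64 GiB VM, 1 GiB pages
free=$(free_hugepages_on_node 0 1048576)   # node 0, 1 GiB = 1048576 kB
echo "need $need x 1 GiB pages, node0 currently has $free free"
```

Looking at the per-node counter rather than only the host-wide total makes sense here, because with policy=bind all pages have to come from the one host node you picked.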

If that is not possible or not wanted, you may need to set hugepages to "any". This prefers 1 GiB hugepages but falls back to 2 MiB pages if it fails to allocate them.

Also, how did you mount the tmpfs inside the VM? Mount it with huge=always.
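To check from inside the guest whether the tmpfs actually got the option, you can look at its entry in /proc/mounts. A small sketch: tmpfs_huge_mode is a made-up helper, and /mnt/ramdisk as well as the size in the comment are placeholders for your own mount.

```shell
#!/bin/sh
# Example mount (placeholder size and path):
#   mount -t tmpfs -o size=8G,huge=always tmpfs /mnt/ramdisk

# tmpfs_huge_mode MOUNTPOINT [MOUNTS_FILE]
# Prints the huge= option of a tmpfs mount, or a note if none is set.
tmpfs_huge_mode() {
    awk -v mp="$1" '
        $2 == mp && $3 == "tmpfs" {
            n = split($4, opts, ",")
            for (i = 1; i <= n; i++)
                if (opts[i] ~ /^huge=/) { print opts[i]; found = 1 }
        }
        END { if (!found) print "no huge= option found" }
    ' "${2:-/proc/mounts}"
}

tmpfs_huge_mode /mnt/ramdisk
```

If this reports that no huge= option was found, the tmpfs was most likely mounted with the default settings.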

Cheers