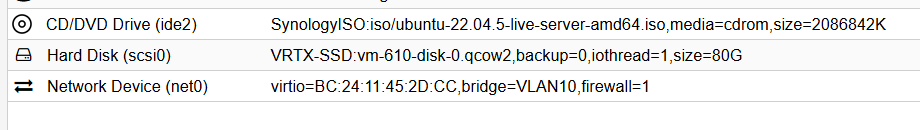

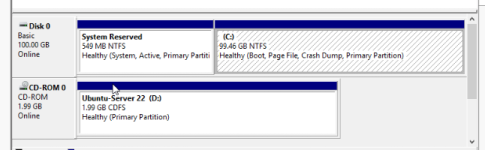

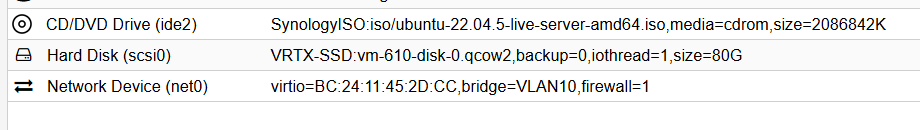

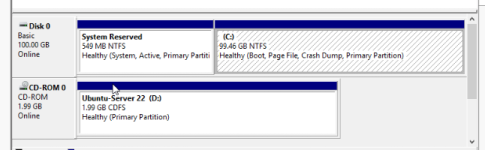

I'm new to Proxmox and seeing something very weird. I am creating a NEW Linux VM. I go through the wizard to set the VM's specs, tell it to mount the Ubuntu ISO, create a new disk, and so forth. When I power on the VM, it boots to Windows. What in the world? I am not telling it to use any existing disk. I am not even sure where this Windows disk came from; maybe from some test Windows VM I created and deleted in the past. But how is PVE booting a new VM from some old, random Windows disk? The new disk I create when setting up the Linux VM is 80 GB; this Windows VM is 100 GB. Also, if I set the disk size to 5 GB, it boots into the Linux install like I would expect. If I set the disk size to 40 GB, Windows boots into a repair mode and can't fully boot. Any idea where in the heck this Windows boot is coming from? I don't have a PXE server, so I'm not trying to boot from any network setup.

Thanks

-Craig
