I've got 3x M.2 slots, so I thought I'd expand the local LVM so I could throw a bunch of VMs on it instead of making each NVMe drive its own storage. I followed the commands to add the other drives to the VG and extended the volume, and thought it was all good until I went to create a VM and got an out-of-space error. I've been running commands from other threads, trying to figure out what I did or didn't do to set this up correctly. I haven't built anything on here, so it wouldn't be the end of the world if I had to remove the node from the cluster, wipe it and start from scratch, but I'd rather fix it, or at least understand what I did while extending it that caused this.

Halp?

Bash:

root@Defiant:/# vgs
  VG  #PV #LV #SN Attr   VSize VFree
  pve   3   2   0 wz--n- 4.59t     0
root@Defiant:/# lvs
  LV   VG  Attr       LSize Pool Origin Data%  Meta%  Move Log Cpy%Sync Convert
  root pve -wi-ao---- 4.58t
  swap pve -wi-ao---- 8.00g
root@Defiant:/# pvs
  PV             VG  Fmt  Attr   PSize PFree
  /dev/nvme0n1   pve lvm2 a--    1.86t     0
  /dev/nvme1n1p3 pve lvm2 a--   <1.82t     0
  /dev/nvme2n1   pve lvm2 a--  931.51g     0

root@Defiant:/# du -sx /*
0	/bin
306140	/boot
12	/Defiant
0	/dev
5672	/etc
4	/home
0	/lib
0	/lib64
16	/lost+found
4	/media
16	/mnt
4	/opt
du: cannot access '/proc/3101/task/3101/fd/4': No such file or directory
du: cannot access '/proc/3101/task/3101/fdinfo/4': No such file or directory
du: cannot access '/proc/3101/fd/3': No such file or directory
du: cannot access '/proc/3101/fdinfo/3': No such file or directory
0	/proc
80	/root
1440	/run
0	/sbin
4	/srv
0	/sys
0	/tmp
5547260	/usr
917568	/var

root@Defiant:/# df -h
Filesystem            Size  Used Avail Use% Mounted on
udev                   47G     0   47G   0% /dev
tmpfs                 9.3G  1.5M  9.3G   1% /run
/dev/mapper/pve-root  4.6T  6.5G  4.4T   1% /
tmpfs                  47G   66M   46G   1% /dev/shm
efivarfs              128K   46K   78K  37% /sys/firmware/efi/efivars
tmpfs                 5.0M     0  5.0M   0% /run/lock
tmpfs                 1.0M     0  1.0M   0% /run/credentials/systemd-journald.service
tmpfs                  47G     0   47G   0% /tmp
/dev/nvme1n1p2       1022M  8.8M 1014M   1% /boot/efi
/dev/sdc1              13T  8.0G   12T   1% /mnt/pve/DATA
/dev/fuse             128M   52K  128M   1% /etc/pve
tmpfs                 1.0M     0  1.0M   0% /run/credentials/getty@tty1.service
tmpfs                 9.3G  4.0K  9.3G   1% /run/user/0

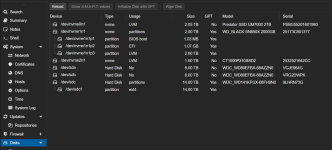

root@Defiant:/# lsblk
NAME          MAJ:MIN RM   SIZE RO TYPE MOUNTPOINTS
sda             8:0    0   7.3T  0 disk
sdb             8:16   0   7.3T  0 disk
sdc             8:32   0  12.7T  0 disk
└─sdc1          8:33   0  12.7T  0 part /mnt/pve/DATA
nvme0n1       259:0    0   1.9T  0 disk
└─pve-root    252:1    0   4.6T  0 lvm  /
nvme1n1       259:1    0   1.8T  0 disk
├─nvme1n1p1   259:2    0  1007K  0 part
├─nvme1n1p2   259:3    0     1G  0 part /boot/efi
└─nvme1n1p3   259:4    0   1.8T  0 part
  ├─pve-swap  252:0    0     8G  0 lvm  [SWAP]
  └─pve-root  252:1    0   4.6T  0 lvm  /
nvme2n1       259:5    0 931.5G  0 disk
└─pve-root    252:1    0   4.6T  0 lvm  /

root@Defiant:/# df
Filesystem             1K-blocks      Used   Available Use% Mounted on
udev                    48248356         0    48248356   0% /dev
tmpfs                    9657840      1560     9656280   1% /run
/dev/mapper/pve-root  4843419192   6779160  4638772624   1% /
tmpfs                   48289184     67488    48221696   1% /dev/shm
efivarfs                     128        46          78  37% /sys/firmware/efi/efivars
tmpfs                       5120         0        5120   0% /run/lock
tmpfs                       1024         0        1024   0% /run/credentials/systemd-journald.service
tmpfs                   48289184         0    48289184   0% /tmp
/dev/nvme1n1p2           1046512      8976     1037536   1% /boot/efi
/dev/sdc1            13563486156   8328068 12871522636   1% /mnt/pve/DATA
/dev/fuse                 131072        52      131020   1% /etc/pve
tmpfs                       1024         0        1024   0% /run/credentials/getty@tty1.service
tmpfs                    9657836         4     9657832   1% /run/user/0
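For reference, `df` reports sizes in 1 KiB blocks. A rough sketch of the conversion for the `/dev/mapper/pve-root` line, using only the numbers pasted above (variable names are illustrative, and shell integer division rounds down to whole GiB):

```bash
#!/usr/bin/env bash
# df reports 1K-blocks (KiB); dividing by 1024*1024 gives whole GiB, rounded down.
kib_per_gib=$((1024 * 1024))

# Values copied from the /dev/mapper/pve-root line of `df` above
root_size_kib=4843419192
root_used_kib=6779160
root_avail_kib=4638772624

echo "pve-root size:  $((root_size_kib / kib_per_gib)) GiB"
echo "pve-root used:  $((root_used_kib / kib_per_gib)) GiB"
echo "pve-root avail: $((root_avail_kib / kib_per_gib)) GiB"
```

That works out to about 4423 GiB (roughly 4.3 TiB) free inside the ext4 filesystem mounted on `/`. That is filesystem space, not unallocated extents in the `pve` VG, which is what `vgs` reports as `VFree` (0 here).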

root@Defiant:/# vgdisplay
  --- Volume group ---
  VG Name               pve
  System ID
  Format                lvm2
  Metadata Areas        3
  Metadata Sequence No  13
  VG Access             read/write
  VG Status             resizable
  MAX LV                0
  Cur LV                2
  Open LV               2
  Max PV                0
  Cur PV                3
  Act PV                3
  VG Size               4.59 TiB
  PE Size               4.00 MiB
  Total PE              1203520
  Alloc PE / Size       1203520 / 4.59 TiB
  Free PE / Size        0 / 0
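`vgdisplay` counts space in physical extents (PE) of 4 MiB. Working through the figures above shows that every extent is allocated and that `root` holds all of them except the ones backing the 8 GiB swap LV. A sketch of that arithmetic (variable names are illustrative; the only inputs are the `vgdisplay` and `lvs` values pasted above):

```bash
#!/usr/bin/env bash
# Figures copied from the vgdisplay and lvs output above
pe_size_mib=4        # PE Size 4.00 MiB
total_pe=1203520     # Total PE
free_pe=0            # Free PE
swap_mib=$((8 * 1024))                # pve/swap is 8.00g

swap_pe=$((swap_mib / pe_size_mib))   # extents held by swap
alloc_pe=$((total_pe - free_pe))      # matches "Alloc PE" above
root_pe=$((alloc_pe - swap_pe))       # only two LVs, so root has the rest

echo "VG pve:   $((total_pe * pe_size_mib / 1024)) GiB, ${free_pe} PE free"
echo "pve/swap: ${swap_pe} PE"
echo "pve/root: ${root_pe} PE = $((root_pe * pe_size_mib / 1024)) GiB"
```

1,201,472 extents × 4 MiB is about 4693 GiB, or 4.58 TiB, which matches the `LSize` of `root` in `lvs`: the root LV now spans all three drives, and no free extents are left in the VG.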

root@Defiant:/# lsblk -f
NAME          FSTYPE      FSVER    LABEL UUID                                   FSAVAIL FSUSE% MOUNTPOINTS
sda
sdb
sdc
└─sdc1        ext4        1.0            87d33dd7-b87e-47fe-8b80-c489d2c69706       12T     0% /mnt/pve/DATA
nvme0n1       LVM2_member LVM2 001       2e10xc-Hjzc-TzO0-UUDj-d2Eg-OUAg-GmmaCx
└─pve-root    ext4        1.0            2bd9affa-c39a-4033-98af-2bfd84a8cf31      4.3T     0% /
nvme1n1
├─nvme1n1p1
├─nvme1n1p2   vfat        FAT32          E300-262D                              1013.2M     1% /boot/efi
└─nvme1n1p3   LVM2_member LVM2 001       DJcr63-oJhZ-Mhnh-aClM-1fpU-7ZXv-oZWo1S
  ├─pve-swap  swap        1              444ee4b2-2c2d-4d02-ad07-e8128ce4d753                  [SWAP]
  └─pve-root  ext4        1.0            2bd9affa-c39a-4033-98af-2bfd84a8cf31      4.3T     0% /
nvme2n1       LVM2_member LVM2 001       ciJ3FL-TgQh-q2Ce-g4rX-EVPX-Io67-wC2xle
└─pve-root    ext4        1.0            2bd9affa-c39a-4033-98af-2bfd84a8cf31      4.3T     0% /

root@Defiant:/# cat /etc/pve/storage.cfg
dir: local
	path /var/lib/vz
	content iso,vztmpl
	shared 0

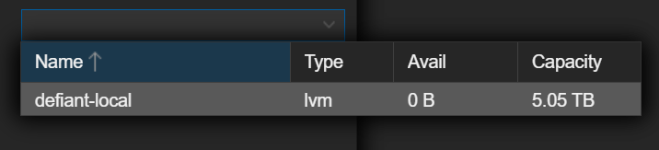

lvm: defiant-local
	vgname pve
	content rootdir,images
	nodes Defiant
	saferemove 0
	shared 0

dir: DATA
	path /mnt/pve/DATA
	content rootdir,backup,snippets,iso,import,vztmpl
	is_mountpoint 1
	nodes Defiant
	shared 0
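The `defiant-local` entry is an `lvm:` storage on VG `pve`, and that storage type creates each VM disk as its own logical volume in the VG, so it needs unallocated extents rather than free space inside `/`. A minimal sketch of that check, using the figures from `vgdisplay` above; `fits_in_vg` is a hypothetical helper (not a Proxmox or LVM command) and 32 GiB is just an example disk size:

```bash
#!/usr/bin/env bash
# Hypothetical helper: would an LV of the requested size fit in the VG?
# Figures come from vgdisplay above; adjust them if the VG changes.
pe_size_mib=4
free_pe=0

fits_in_vg() {
  local want_gib=$1
  # LVM sizes LVs in whole extents, so round the request up to a full PE
  local want_pe=$(( (want_gib * 1024 + pe_size_mib - 1) / pe_size_mib ))
  if [ "$want_pe" -le "$free_pe" ]; then
    echo "ok: ${want_gib} GiB needs ${want_pe} PE, ${free_pe} PE free"
  else
    echo "no space: ${want_gib} GiB needs ${want_pe} PE, ${free_pe} PE free"
    return 1
  fi
}

# Example: a 32 GiB VM disk
if ! fits_in_vg 32; then
  echo "a 32 GiB disk cannot be allocated on VG pve"
fi
```

With `Free PE` at 0, any non-zero disk size fails the same way, regardless of how much room `df` shows on `/`.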
