Hi

I have a 4-node cluster.

Since yesterday, I suddenly can't start the VM.

I tried various solutions, but they all failed.

Here is some debug output.

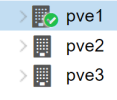

pve1 /etc/pve/corosync.conf

Code:

    logging {
      debug: off
      to_syslog: yes
    }

    nodelist {
      node {
        name: pve1
        nodeid: 2
        quorum_votes: 1
        ring0_addr: 192.168.100.11
      }
      node {
        name: pve2
        nodeid: 3
        quorum_votes: 1
        ring0_addr: 192.168.100.12
      }
      node {
        name: pve3
        nodeid: 1
        quorum_votes: 1
        ring0_addr: 192.168.100.13
      }
      node {
        name: pve4
        nodeid: 4
        quorum_votes: 1
        ring0_addr: 192.168.100.14
      }
    }

    quorum {
      provider: corosync_votequorum
    }

    totem {
      cluster_name: cluster
      config_version: 5
      interface {
        linknumber: 0
      }
      ip_version: ipv4-6
      link_mode: passive
      secauth: on
      version: 2
    }

results of # systemctl status pve-cluster

Code:

    ● pve-cluster.service - The Proxmox VE cluster filesystem
         Loaded: loaded (/lib/systemd/system/pve-cluster.service; enabled; preset: enabled)
         Active: active (running) since Sun 2024-07-14 23:11:11 JST; 51min ago
        Process: 3951 ExecStart=/usr/bin/pmxcfs (code=exited, status=0/SUCCESS)
       Main PID: 3953 (pmxcfs)
          Tasks: 7 (limit: 18952)
         Memory: 35.4M
            CPU: 1.754s
         CGroup: /system.slice/pve-cluster.service
                 └─3953 /usr/bin/pmxcfs

    Jul 15 00:02:28 pve1 pmxcfs[3953]: [dcdb] crit: cpg_initialize failed: 2
    Jul 15 00:02:28 pve1 pmxcfs[3953]: [status] crit: cpg_initialize failed: 2
    Jul 15 00:02:34 pve1 pmxcfs[3953]: [quorum] crit: quorum_initialize failed: 2
    Jul 15 00:02:34 pve1 pmxcfs[3953]: [confdb] crit: cmap_initialize failed: 2
    Jul 15 00:02:34 pve1 pmxcfs[3953]: [dcdb] crit: cpg_initialize failed: 2
    Jul 15 00:02:34 pve1 pmxcfs[3953]: [status] crit: cpg_initialize failed: 2
    Jul 15 00:02:40 pve1 pmxcfs[3953]: [quorum] crit: quorum_initialize failed: 2
    Jul 15 00:02:40 pve1 pmxcfs[3953]: [confdb] crit: cmap_initialize failed: 2
    Jul 15 00:02:40 pve1 pmxcfs[3953]: [dcdb] crit: cpg_initialize failed: 2
    Jul 15 00:02:40 pve1 pmxcfs[3953]: [status] crit: cpg_initialize failed: 2

results of # systemctl status corosync

Code:

    × corosync.service - Corosync Cluster Engine
         Loaded: loaded (/lib/systemd/system/corosync.service; enabled; preset: enabled)
         Active: failed (Result: exit-code) since Sun 2024-07-14 23:11:11 JST; 53min ago
       Duration: 3.477s
           Docs: man:corosync
                 man:corosync.conf
                 man:corosync_overview
        Process: 3959 ExecStart=/usr/sbin/corosync -f $COROSYNC_OPTIONS (code=exited, status=8)
       Main PID: 3959 (code=exited, status=8)
            CPU: 11ms

    Jul 14 23:11:11 pve1 systemd[1]: Starting corosync.service - Corosync Cluster Engine...
    Jul 14 23:11:11 pve1 corosync[3959]: [MAIN ] Corosync Cluster Engine starting up
    Jul 14 23:11:11 pve1 corosync[3959]: [MAIN ] Corosync built-in features: dbus monitoring watchdog systemd xmlconf vq>
    Jul 14 23:11:11 pve1 corosync[3959]: [MAIN ] Could not open /etc/corosync/authkey: No such file or directory
    Jul 14 23:11:11 pve1 corosync[3959]: [MAIN ] Corosync Cluster Engine exiting with status 8 at main.c:1417.
    Jul 14 23:11:11 pve1 systemd[1]: corosync.service: Main process exited, code=exited, status=8/n/a
    Jul 14 23:11:11 pve1 systemd[1]: corosync.service: Failed with result 'exit-code'.
    Jul 14 23:11:11 pve1 systemd[1]: Failed to start corosync.service - Corosync Cluster Engine.

results of # pvecm nodes

Code:

    Cannot initialize CMAP service

results of # journalctl -xeu corosync.service

Code:

    ░░ A start job for unit corosync.service has begun execution.
    ░░
    ░░ The job identifier is 1032.
    Jul 14 23:11:11 pve1 corosync[3959]: [MAIN ] Corosync Cluster Engine starting up
    Jul 14 23:11:11 pve1 corosync[3959]: [MAIN ] Corosync built-in features: dbus monitoring watchdog systemd xmlconf vq>
    Jul 14 23:11:11 pve1 corosync[3959]: [MAIN ] Could not open /etc/corosync/authkey: No such file or directory
    Jul 14 23:11:11 pve1 corosync[3959]: [MAIN ] Corosync Cluster Engine exiting with status 8 at main.c:1417.
    Jul 14 23:11:11 pve1 systemd[1]: corosync.service: Main process exited, code=exited, status=8/n/a
    ░░ Subject: Unit process exited
    ░░ Defined-By: systemd
    ░░ Support: https://www.debian.org/support
    ░░
    ░░ An ExecStart= process belonging to unit corosync.service has exited.
    ░░
    ░░ The process' exit code is 'exited' and its exit status is 8.
    Jul 14 23:11:11 pve1 systemd[1]: corosync.service: Failed with result 'exit-code'.
    ░░ Subject: Unit failed
    ░░ Defined-By: systemd
    ░░ Support: https://www.debian.org/support
    ░░
    ░░ The unit corosync.service has entered the 'failed' state with result 'exit-code'.
    Jul 14 23:11:11 pve1 systemd[1]: Failed to start corosync.service - Corosync Cluster Engine.
    ░░ Subject: A start job for unit corosync.service has failed
    ░░ Defined-By: systemd
    ░░ Support: https://www.debian.org/support
    ░░
    ░░ A start job for unit corosync.service has finished with a failure.
    ░░
    ░░ The job identifier is 1032 and the job result is failed.

pve1 WebUI

pve2, pve3 WebUI

pve4 WebUI
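The corosync log on pve1 stops at `Could not open /etc/corosync/authkey: No such file or directory`, so the first thing worth confirming on each node is whether that key file is actually there. Below is a minimal sketch of such a check. The helper name `check_key` is made up for illustration, and the second path, `/etc/pve/priv/corosync.authkey`, is an assumption about where Proxmox keeps a cluster-wide copy of the key; nothing in the output above confirms it.

```shell
#!/bin/sh
# check_key is a hypothetical helper: it reports whether a file exists
# and is non-empty. It only inspects the file and never modifies it.
check_key() {
    if [ -s "$1" ]; then
        echo "present: $1"
    else
        echo "missing: $1"
    fi
}

# /etc/corosync/authkey is the path named in the corosync log.
# /etc/pve/priv/corosync.authkey is an ASSUMED location of a second copy.
# Run as root, otherwise an unreadable directory also shows up as "missing".
for f in /etc/corosync/authkey /etc/pve/priv/corosync.authkey; do
    check_key "$f"
done
```

Running this on all four nodes should show whether the key is missing only on pve1 or everywhere.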

I'd like to avoid rebuilding the cluster if possible.

How can I fix this?