Hello guys, I have a recently installed Proxmox cluster with 3 servers, 10G NIC networking through an Aruba switch, and 11 SSD OSDs (8 x 1.7T, 3 x 3.4T). Total raw capacity is 24T, with 12T used by 6 VMs. One pool.

The problem is these 9 PGs, which are active+remapped+backfill_toofull.

This is the output of ceph df:

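root@PVE12:~# ceph df

--- RAW STORAGE ---

| CLASS | SIZE | AVAIL | USED | RAW USED | %RAW USED |
| --- | --- | --- | --- | --- | --- |
| ssd | 24 TiB | 12 TiB | 12 TiB | 12 TiB | 50.03 |
| TOTAL | 24 TiB | 12 TiB | 12 TiB | 12 TiB | 50.03 |

--- POOLS ---

| POOL | ID | PGS | STORED | OBJECTS | USED | %USED | MAX AVAIL |
| --- | --- | --- | --- | --- | --- | --- | --- |
| .mgr | 1 | 1 | 177 MiB | 28 | 532 MiB | 0.03 | 516 GiB |
| SSD1 | 2 | 128 | 4.3 TiB | 1.19M | 12 TiB | 88.95 | 516 GiB |
| .rgw.root | 3 | 32 | 1.3 KiB | 4 | 48 KiB | 0 | 516 GiB |
| default.rgw.log | 4 | 32 | 242 B | 2 | 32 KiB | 0 | 516 GiB |
| default.rgw.control | 5 | 32 | 0 B | 8 | 0 B | 0 | 516 GiB |
| default.rgw.meta | 6 | 32 | 0 B | 0 | 0 B | 0 | 516 GiB |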
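For anyone puzzled that the cluster shows 50% raw usage while SSD1 shows almost 89% used, here is a rough sanity check of the numbers in the `ceph df` output above. It is only a sketch: the replica count of 3 is an assumption inferred from the three-OSD acting sets, and the formula is an approximation of how the pool percentage relates to MAX AVAIL, not a statement of Ceph's exact internal calculation.

```python
# Figures copied from the `ceph df` output in this post.
GIB_PER_TIB = 1024

pool_used_tib = 12.0                      # SSD1 USED (raw, counts all replicas)
pool_max_avail_tib = 516 / GIB_PER_TIB    # SSD1 MAX AVAIL (usable, after replication)
replicas = 3                              # ASSUMPTION: pool size 3, inferred from 3-OSD acting sets


def approx_pool_fullness(used_raw, max_avail, size):
    """Rough estimate: raw used / (raw used + raw space the pool can still write)."""
    raw_avail = max_avail * size          # convert usable space back to raw space
    return used_raw / (used_raw + raw_avail)


pct = 100 * approx_pool_fullness(pool_used_tib, pool_max_avail_tib, replicas)
print(f"approximate pool fullness: {pct:.1f}%")
```

With the rounded figures from the post this lands within a point of the reported 88.95. The takeaway is that the pool percentage follows MAX AVAIL rather than the cluster-wide free space, and MAX AVAIL can be far smaller than the raw AVAIL when data is unevenly spread across OSDs.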

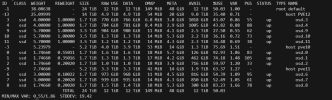

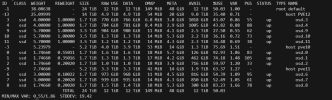

root@PVE12:~# ceph osd df tree

The recovery process has stopped and no more PGs reach the clean state. The problem started with unexpected reboots of the physical servers, caused by a faulty UPS.

I would really appreciate any help.

Thanks,

Heiber


Low space hindering backfill (add storage if this doesn't resolve itself): 9 pgs backfill_toofull

| PG | State | Acting OSDs |
| --- | --- | --- |
| 2.12 | active+remapped+backfill_toofull | [9,1,6] |
| 2.2c | active+remapped+backfill_toofull | [9,7,2] |
| 2.36 | active+remapped+backfill_toofull | [10,1,8] |
| 2.39 | active+remapped+backfill_toofull | [9,6,2] |
| 2.3f | active+remapped+backfill_toofull | [11,1,6] |
| 2.5a | active+remapped+backfill_toofull | [11,6,2] |
| 2.6e | active+remapped+backfill_toofull | [11,1,8] |
| 2.71 | active+remapped+backfill_toofull | [11,8,2] |
| 2.74 | active+remapped+backfill_toofull | [9,2,7] |
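To make the nine stuck PGs easier to read, here is a small sketch that tallies how often each OSD shows up in their acting sets. The PG lines are copied from the health detail above; the helper name `acting_osd_counts` is made up for illustration. Note that the acting set only shows where the data currently lives. The OSDs that are too full to accept backfill are the backfill targets, which this output does not list, so the tally is a starting point for comparing against `ceph osd df tree` and not a diagnosis.

```python
import re
from collections import Counter

# PG lines copied from the health detail in this post.
HEALTH_DETAIL = """
pg 2.12 is active+remapped+backfill_toofull, acting [9,1,6]
pg 2.2c is active+remapped+backfill_toofull, acting [9,7,2]
pg 2.36 is active+remapped+backfill_toofull, acting [10,1,8]
pg 2.39 is active+remapped+backfill_toofull, acting [9,6,2]
pg 2.3f is active+remapped+backfill_toofull, acting [11,1,6]
pg 2.5a is active+remapped+backfill_toofull, acting [11,6,2]
pg 2.6e is active+remapped+backfill_toofull, acting [11,1,8]
pg 2.71 is active+remapped+backfill_toofull, acting [11,8,2]
pg 2.74 is active+remapped+backfill_toofull, acting [9,2,7]
"""

# Matches "pg <id> is <state>, acting [<osd>,<osd>,...]"
PG_LINE = re.compile(r"pg (\S+) is (\S+), acting \[([\d,]+)\]")


def acting_osd_counts(text):
    """Return (list of backfill_toofull PG ids, Counter of OSD ids in their acting sets)."""
    pgs = []
    counts = Counter()
    for pgid, state, acting in PG_LINE.findall(text):
        if "backfill_toofull" in state:
            pgs.append(pgid)
            counts.update(int(osd) for osd in acting.split(","))
    return pgs, counts


pgs, counts = acting_osd_counts(HEALTH_DETAIL)
print(f"{len(pgs)} PGs in backfill_toofull")
for osd, n in sorted(counts.items()):
    print(f"osd.{osd}: in {n} acting set(s)")
```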
