Hi everyone,

This is my second post here, and I’m looking for some advice and guidance on storage and RAID options for my main Proxmox server.

I’m currently running Proxmox as my primary homelab host. It runs my main Pi-hole instance along with a few test nodes. I’ve been really happy with the setup so far. My Pi-hole cluster uses a virtual network that communicates with the correct VLANs in my Ubiquiti environment, keeping IoT and trusted devices properly segmented.

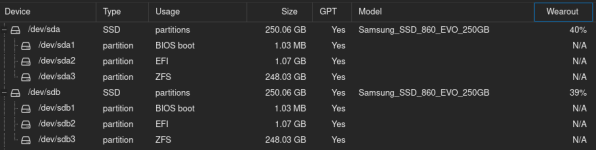

Current Storage Setup

Proxmox OS drive:

- SanDisk SD9TB8W256G1001 (SSD) – 238.5 GB

Secondary drive (backups / ISOs):

- KXG50ZNV1T02 NVMe TOSHIBA 1024GB (M.2) – 953.9 GB

Hardware

- Lenovo ThinkCentre M720q

- I have a 4-pin to 2/3-port SATA power splitter cable available, so I can add additional SATA SSDs if needed.

I also have two spare M.2 drives:

- SK Hynix SC311 (128GB)

- SK Hynix PC401 (256GB)

My Question

Is it okay to mix and match different SSDs (SATA and NVMe, different sizes and models) in a RAID setup, or is that generally not recommended?
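
To make the size mismatch concrete, here is a rough Python sketch of how much space a two-drive mirror would give with the drives listed above. It assumes a plain mirror (RAID 1 or a ZFS mirror) is limited to the size of its smallest member. The function names are made up for illustration, and the two spare drives use their labeled sizes, since I only have reported sizes for the installed ones.

```python
from itertools import combinations

# Sizes in GB as listed in this post (reported sizes for the installed
# drives, labeled sizes for the two spares).
drives_gb = {
    "SanDisk SD9TB8W256G1001": 238.5,
    "Toshiba KXG50ZNV1T02": 953.9,
    "SK Hynix SC311": 128.0,
    "SK Hynix PC401": 256.0,
}


def mirror_usable_gb(*names):
    """Usable capacity of a simple mirror: the size of its smallest member."""
    return min(drives_gb[n] for n in names)


def wasted_gb(*names):
    """Space left unused across all members of the mirror."""
    usable = mirror_usable_gb(*names)
    return sum(drives_gb[n] - usable for n in names)


# Print every possible two-drive pairing with its usable and unused space.
for a, b in combinations(drives_gb, 2):
    print(f"{a} + {b}: usable {mirror_usable_gb(a, b):.1f} GB, "
          f"unused {wasted_gb(a, b):.1f} GB")
```

This only covers capacity. It says nothing about how the speed difference between SATA and NVMe affects a mirror, or whether the M720q can physically hold a given pairing, which is part of what I'm asking about.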

Ideally, I’d prefer not to spend much (or any) money on additional hardware if I can make use of what I already have.

I’d like to improve redundancy and overall reliability, and I’m especially interested in best practices for RAID and storage configuration in a Proxmox homelab environment.

The final stage is to link Proxmox to a NAS for additional redundancy.

Any recommendations, suggestions, or lessons learned would be greatly appreciated.