Hey there, I'm new to Proxmox and LXC, so I've been trying to stand up apps using the helper scripts. Every attempt, regardless of the application, fails with exit code 255 approximately 2 minutes after the container is created.

The Symptoms:

- The script successfully creates the container.

- I manually start the container.

- The script hangs for about 2 minutes.

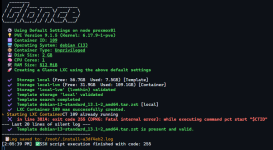

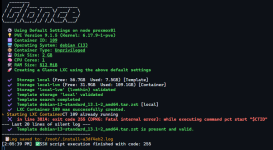

- After the timeout, it fails with: `in line 3814: exit code 255 (DPKG: Fatal internal error): while executing command pct start "$CTID"`

Environment and troubleshooting so far:

- PVE Version: 9.1.5 (pve-manager: 9.1.5)

- Kernel: 6.17.9-1-pve

- Storage: LVM-Thin (pve/data)

- Template: debian-13-standard_13.1-2_amd64.tar.zst

- AppArmor: Currently disabled at the kernel level (`apparmor=0` in GRUB); `aa-status` confirms it is not running.

- Max Release: Modified `/usr/share/perl5/PVE/LXC/Setup/Debian.pm` to accept Debian versions up to 15.

- Tested both Privileged and Unprivileged modes; same result.

- Tried with Nesting and Keyctl both enabled and disabled; same result.

Any input or suggestions would be greatly appreciated.