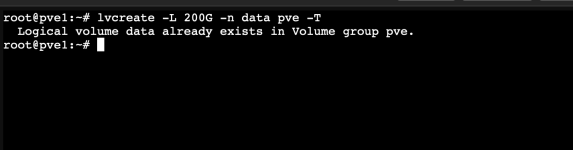

Hey all - wanted to share my experience today if helpful, YMMV - I am not a pro by any means. Ultimately I was able to run Alex's lvconvert command (`lvconvert --repair <thinpool>`) and get back up and running.

Background -

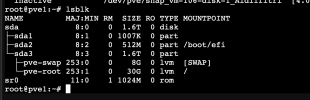

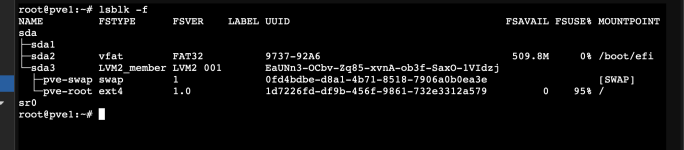

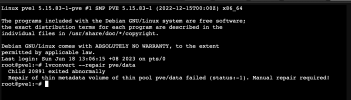

My system powers down and restarts daily with no issues. It has two physical volumes, one SSD and one NVMe. Today I powered on the system, went to boot the VMs, and also received the `Check of pool pve/ssd01 failed (status:1)` message. The thin pool on the SSD reported "not available" in lvdisplay as well. vgdisplay for the volume group on the SSD showed 16 GB free and 448 GB allocated, for a total of 464 GB.

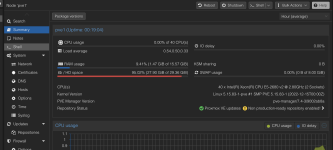

On the drive is a thin pool (ssd01) of 338 GB, alongside 96 GB for root and 8 GB for swap (unsure where the remaining 6 GB of the 448 GB allocated is), with 5 thin-provisioned VM disks in that pool (their sum is 390 GB). Proxmox metrics in the UI show about 45% utilization on the pool. Today, however, the pool showed as "not available" in lvdisplay and "inactive" in lvscan, and since the pool was down, all the dependent LVs were reporting the same.
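For anyone checking the numbers, here is a quick sketch of the arithmetic using only the figures quoted above (all in GB, integer math). One possible explanation for the unaccounted space, which is my assumption and not something confirmed here, is the pool's hidden metadata LV and its spare copy; `lvs -a` lists hidden LVs if you want to check on your own system.

```shell
#!/bin/sh
# Figures taken from the post, in GB.
vg_alloc=448
pool_size=338
root_size=96
swap_size=8
thin_disks_sum=390
pool_used_pct=45

# Space allocated in the VG that the three named LVs do not account for.
unaccounted=$(( vg_alloc - (pool_size + root_size + swap_size) ))

# Provisioned thin volumes as a percentage of the pool size (integer division).
overprovision_pct=$(( thin_disks_sum * 100 / pool_size ))

# Approximate amount of data actually written to the pool.
pool_used_gb=$(( pool_size * pool_used_pct / 100 ))

echo "unaccounted=${unaccounted}G overprovision=${overprovision_pct}% used=~${pool_used_gb}G"
```

So the pool is over-provisioned on paper (the thin disks could grow past the pool size), but at roughly 45% actual usage it was nowhere near full when this happened.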

`dmsetup info -c` was not showing the LVs either. I first tried `lvchange -a y pve/ssd01` and received the same message, then ran `lvconvert --repair pve/ssd01`.

On running it I received the messages below:

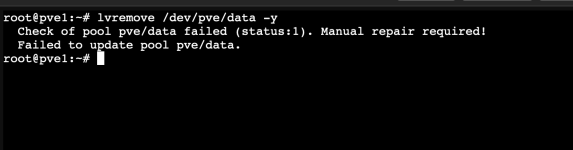

After a couple of successful reboots I removed pve/ssd01_meta0 with `lvremove pve/ssd01_meta0`.
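The sequence I went through can be sketched roughly as the script below. It is a sketch only, not a tested recovery tool: `POOL`, `DRY_RUN`, `run`, `recover_thin_pool`, and `cleanup_old_metadata` are names I made up for illustration, and with `DRY_RUN=1` (the default) it only prints the commands and does not touch LVM.

```shell
#!/bin/sh
# Sketch of the recovery sequence from this post.
# POOL and DRY_RUN are illustrative names, not anything Proxmox or LVM defines.
POOL="${POOL:-pve/ssd01}"
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

recover_thin_pool() {
    run lvscan                      # pool and dependent LVs showed as inactive
    run lvdisplay "$POOL"           # LV status showed as not available
    run dmsetup info -c             # device-mapper did not list the LVs
    run lvchange -a y "$POOL"       # activation attempt; failed with the same check error
    run lvconvert --repair "$POOL"  # repairs pool metadata; the old copy is kept as <pool>_meta0
}

cleanup_old_metadata() {
    # Only once the pool has proven healthy (I waited for a couple of clean reboots).
    run lvremove "${POOL}_meta0"
}

recover_thin_pool
```

Running it with `DRY_RUN=0` as root would execute the commands for real, so read each step and back up what you can first.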

I also removed an extra clone to reduce the over-provisioning risk, and let the other messages slide per this thread.

Not sure how the system got into this state. I ran `pvck /dev/sda3` on the drive in question and got no metadata error messages. I read through syslog for a while looking for anything, but did not find any message indicating a root cause for the `pvestatd[1264]: activating LV 'pve/ssd01' failed` error. SMART values on the drive show no wearout and no failing attributes (PASSED).
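If you want to do the same log search without scrolling by hand, a small filter like the one below might help. It is a sketch under assumptions: `find_activation_failures` is a name I made up, the pattern is built from the one message quoted above, and the log sources in the usage comments (`journalctl -u pvestatd`, `/var/log/syslog`, `/dev/sda` for SMART) are guesses that may differ on your system.

```shell
#!/bin/sh
# Filter log lines on stdin down to pvestatd LV activation failures.
# The pattern is based on the single message quoted in this post.
find_activation_failures() {
    grep -E "pvestatd\[[0-9]+\]: activating LV '[^']+' failed"
}

# Example usage (assumed log sources and device names, adjust to your setup):
#   journalctl -u pvestatd | find_activation_failures
#   find_activation_failures < /var/log/syslog
#
# The other checks mentioned in the post:
#   pvck /dev/sda3        # check the LVM metadata on the physical volume
#   smartctl -H /dev/sda  # overall SMART health; /dev/sda is assumed from sda3
```

The filter only shows when the activation failed, not why, so the lines just before the first match are where I would look for a cause.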

I'll report back if I see anything further here.

Running Proxmox version 8.0.3.

Also just wanted to drop a general thanks to the Proxmox team - appreciate the awesome product as always.