I reproduced the VM migration between nodes.

First I created a VM with Windows 10 on node3, then I destroyed that VM on node3 and created a new VM with Linux on node1.

I migrated the new (Linux) VM from node1 to node3.

In the log I see:

2026-04-29 15:43:16 starting migration of VM 100 to node 'ictdlpx03' (172.16.10.253)

2026-04-29 15:43:16 found local disk 'addon-data:vm-100-disk-1.qcow2' (attached)

2026-04-29 15:43:16 starting VM 100 on remote node 'ictdlpx03'

2026-04-29 15:43:19 volume 'addon-data:vm-100-disk-1.qcow2' is 'addon-data:vm-100-disk-0.qcow2' on the target

2026-04-29 15:43:19 start remote tunnel

2026-04-29 15:43:20 ssh tunnel ver 1

2026-04-29 15:43:20 starting storage migration

2026-04-29 15:43:20 scsi0: start migration to nbd:unix:/run/qemu-server/100_nbd.migrate:exportname=drive-scsi0

mirror-scsi0: transferred 50.0 GiB of 50.0 GiB (100.00%) in 7m 45s, ready

What does this mean? Why did Proxmox create a new LV?

Then I tried to migrate from node3 to node2, but I got an error:

2026-04-29 15:54:39 found local disk 'addon-data:vm-100-disk-0.qcow2' (attached)

2026-04-29 15:54:39 copying local disk images

2026-04-29 15:54:39 ERROR: storage migration for 'addon-data:vm-100-disk-0.qcow2' to storage 'addon-data' failed - cannot migrate from storage type 'lvm' to 'lvm'

2026-04-29 15:54:39 aborting phase 1 - cleanup resources

2026-04-29 15:54:39 ERROR: migration aborted (duration 00:00:01): storage migration for 'addon-data:vm-100-disk-0.qcow2' to storage 'addon-data' failed - cannot migrate from storage type 'lvm' to 'lvm'

TASK ERROR: migration aborted
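To compare the two task logs side by side, a minimal Python sketch like the one below can pull out the volumes reported as "found local disk" and the messages after "ERROR:". The function name `summarize_migration_log` is hypothetical, and the sketch only parses the log text shown above; it does not call any Proxmox API.

```python
import re

# Patterns taken from the wording of the task log lines in this post.
LOCAL_DISK = re.compile(r"found local disk '([^']+)'")
ERROR = re.compile(r"ERROR: (.+)$")


def summarize_migration_log(log: str) -> dict:
    """Collect volumes reported as local disks and all ERROR messages."""
    local_disks = []
    errors = []
    for line in log.splitlines():
        disk = LOCAL_DISK.search(line)
        if disk:
            local_disks.append(disk.group(1))
        err = ERROR.search(line)
        if err:
            errors.append(err.group(1))
    return {"local_disks": local_disks, "errors": errors}


# Log of the failed node3 -> node2 migration, copied from above.
FAILED_LOG = """\
2026-04-29 15:54:39 found local disk 'addon-data:vm-100-disk-0.qcow2' (attached)
2026-04-29 15:54:39 copying local disk images
2026-04-29 15:54:39 ERROR: storage migration for 'addon-data:vm-100-disk-0.qcow2' to storage 'addon-data' failed - cannot migrate from storage type 'lvm' to 'lvm'
2026-04-29 15:54:39 aborting phase 1 - cleanup resources
TASK ERROR: migration aborted
"""

summary = summarize_migration_log(FAILED_LOG)
print(summary["local_disks"])
for message in summary["errors"]:
    print(message)
```

For the failed run this lists addon-data:vm-100-disk-0.qcow2 as a local disk together with the 'lvm' to 'lvm' error, so the volume is being handled as a local disk to copy and not as a disk on shared storage.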

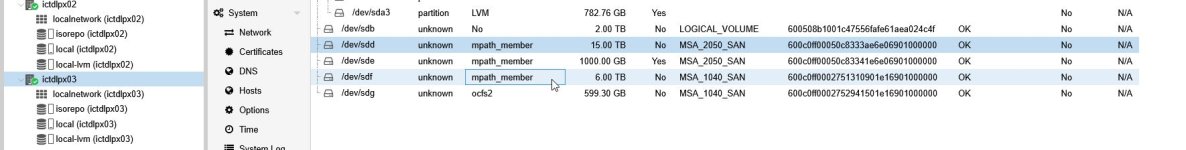

So I think I created the "shared" disk incorrectly.

How do I correctly add an FC LUN as storage for VM disk images with snapshot support?

UPDATE:

lvdisplay on node2:

--- Logical volume ---

LV Path /dev/addon-repo/vm-100-disk-0.qcow2

LV Name vm-100-disk-0.qcow2

VG Name addon-repo

LV UUID tMipyK-zgGe-Mwm6-LFTZ-V7gV-J7sN-rHW6We

LV Write Access read/write

LV Creation host, time ictdlpx03, 2026-04-29 15:43:17 +0200

LV Status NOT available

LV Size 50.01 GiB

Current LE 12803

Segments 1

Allocation inherit

Read ahead sectors auto

lvdisplay on node1:

--- Logical volume ---

LV Path /dev/addon-repo/vm-100-disk-0.qcow2

LV Name vm-100-disk-0.qcow2

VG Name addon-repo

LV UUID tMipyK-zgGe-Mwm6-LFTZ-V7gV-J7sN-rHW6We

LV Write Access read/write

LV Creation host, time ictdlpx03, 2026-04-29 15:43:17 +0200

LV Status NOT available

LV Size 50.01 GiB

Current LE 12803

Segments 1

Allocation inherit

Read ahead sectors auto

lvdisplay on node3:

--- Logical volume ---

LV Path /dev/addon-repo/vm-100-disk-0.qcow2

LV Name vm-100-disk-0.qcow2

VG Name addon-repo

LV UUID tMipyK-zgGe-Mwm6-LFTZ-V7gV-J7sN-rHW6We

LV Write Access read/write

LV Creation host, time ictdlpx03, 2026-04-29 15:43:17 +0200

LV Status available

# open 0

LV Size 50.01 GiB

Current LE 12803

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 4096

Block device 252:10
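The three lvdisplay outputs can also be compared mechanically. The following is a rough Python sketch, assuming the output of each node is pasted in as text; `parse_lvdisplay` and `compare_nodes` are illustrative names and not part of LVM or Proxmox. The sample data is shortened to the fields that matter here.

```python
# Fields of interest, spelled as they appear in the lvdisplay output above.
FIELDS = ("LV Path", "LV Name", "VG Name", "LV UUID", "LV Status", "LV Size")


def parse_lvdisplay(text: str) -> dict:
    """Extract the known fields from one lvdisplay block."""
    info = {}
    for raw in text.splitlines():
        line = raw.strip()
        for field in FIELDS:
            if line.startswith(field + " "):
                info[field] = line[len(field):].strip()
    return info


def compare_nodes(outputs: dict) -> dict:
    """Check whether all nodes see the same LV and where it is active."""
    parsed = {node: parse_lvdisplay(text) for node, text in outputs.items()}
    uuids = {fields.get("LV UUID") for fields in parsed.values()}
    active_on = sorted(
        node for node, fields in parsed.items()
        if fields.get("LV Status") == "available"
    )
    return {
        "same_lv": len(uuids) == 1 and None not in uuids,
        "active_on": active_on,
    }


# Shortened copies of the outputs posted above.
INACTIVE = """\
LV Path /dev/addon-repo/vm-100-disk-0.qcow2
VG Name addon-repo
LV UUID tMipyK-zgGe-Mwm6-LFTZ-V7gV-J7sN-rHW6We
LV Status NOT available
LV Size 50.01 GiB
"""
ACTIVE = INACTIVE.replace("LV Status NOT available", "LV Status available")

report = compare_nodes({"node1": INACTIVE, "node2": INACTIVE, "node3": ACTIVE})
print(report)
```

With the values above, all three nodes report the same LV UUID, and only node3 shows the LV as available.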