We lost one server from the cluster, but its VMs were not restarted on the other nodes.

We removed the broken node from the cluster with "pvecm delnode" and fully redeployed it.

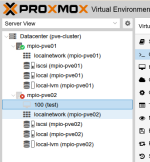

After rejoining it to the cluster, I see 5 VMs assigned to this node, each shown with a gray question mark.

The node itself is also shown in gray status: neither in maintenance mode nor green.

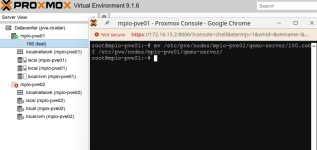

All VM files are stored on shared NFS storage, so we can create new VMs from these files.

How can we clean up these VMs from Proxmox?
