I have a 3-node Proxmox 9.1.6 cluster with shared iSCSI LVM flash storage. I have one VM (VM 100) that I can successfully start, migrate, and move between hosts, so I know the storage and cluster functions are working.

On the hosts I see this in the system log:

Feb 24 16:32:57 pve2 pvedaemon[3290454]: failed to stat '/dev/ssd-lun1-lvm/vm-100-disk-2.qcow2'

Feb 24 16:32:57 pve2 pvedaemon[3290454]: failed to stat '/dev/ssd-lun1-lvm/vm-100-disk-0.qcow2'

Feb 24 16:32:57 pve2 pvedaemon[3290454]: failed to stat '/dev/ssd-lun1-lvm/vm-100-disk-1.qcow2'

When I try to P2V a machine using StarWind, I get the following error on the target host:

Feb 24 16:36:37 pve1 pvedaemon[3294352]: unable to create VM 201 - lvcreate 'ssd-lun1-lvm/vm-201-disk-0' error: Run `lvcreate --help' for more information.

Feb 24 16:36:37 pve1 pvedaemon[3292326]: <root@pam> end task UPID:pve1:00324490:05CE5999:699E4415:qmcreate:201:root@pam: unable to create VM 201 - lvcreate 'ssd-lun1-lvm/vm-201-disk-0' error: Run `lvcreate --help' for more information.

And the task error on the host:

--size may not be zero.

TASK ERROR: unable to create VM 201 - lvcreate 'ssd-lun1-lvm/vm-201-disk-0' error: Run `lvcreate --help' for more information.

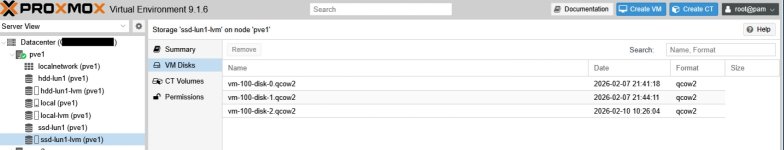

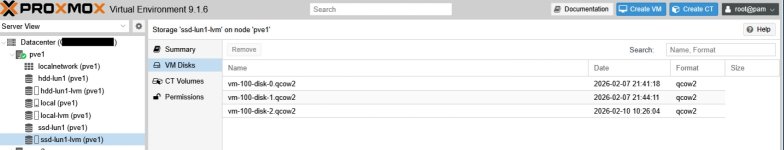

If I try to view the storage details, I don't see any info in the size column:

Keep in mind, I can start/run VM 100 no problem.

I also checked that the iSCSI connections were alive on all the hosts, and they were:

Target: iqn.2000-01.com.synology:xxx-uc3400-san.ssd-lun1.789ca40f3c (non-flash)

Current Portal: xxx.xxx.xxx.50:3260,5

Persistent Portal: xxx.xxx.xxx.50:3260,5

**********

Interface:

**********

Iface Name: default

Iface Transport: tcp

Iface Initiatorname: iqn.1993-08.org.debian:01:e2c850d5cd66

Iface IPaddress: xxx.xxx.xxx.xxx

Iface HWaddress: default

Iface Netdev: default

SID: 2

iSCSI Connection State: LOGGED IN

iSCSI Session State: LOGGED_IN

Internal iscsid Session State: NO CHANGE
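
In case it helps anyone repeat the same check across all three nodes, here is a minimal sketch that parses session output in the format pasted above and reports whether every target is logged in. It assumes the text comes from something like `iscsiadm -m session -P 1`; the function names `session_states` and `all_logged_in` are made up for illustration and are not part of any Proxmox or open-iscsi tooling.

```python
def session_states(text):
    """Parse iscsiadm-style session output into {target_iqn: {"connection": ..., "session": ...}}.

    Assumes the layout shown in the post: a "Target:" line followed by
    "iSCSI Connection State:" and "iSCSI Session State:" lines.
    If the same target appears more than once, the last session wins.
    """
    sessions = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("Target:"):
            # "Target: <iqn> (non-flash)" -> keep only the IQN
            current = line.split()[1]
            sessions[current] = {}
        elif current and line.startswith("iSCSI Connection State:"):
            sessions[current]["connection"] = line.split(":", 1)[1].strip()
        elif current and line.startswith("iSCSI Session State:"):
            sessions[current]["session"] = line.split(":", 1)[1].strip()
    return sessions


def all_logged_in(text):
    """True only if at least one session was found and every one is logged in."""
    sessions = session_states(text)
    return bool(sessions) and all(
        s.get("connection") == "LOGGED IN" and s.get("session") == "LOGGED_IN"
        for s in sessions.values()
    )
```

This only confirms what the output already says (the sessions are up); it does not look at the LVM layer, which is where the errors above are being reported.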

Any ideas?

Thanks!
