Hi!

This concerns a PVE installation with a VM and an attached disk of approximately 16 TB.

When attempting to create a snapshot of this VM, the process aborted after a few seconds.

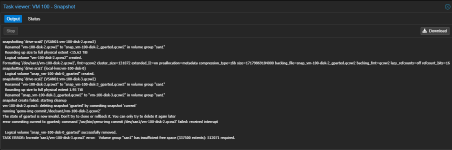

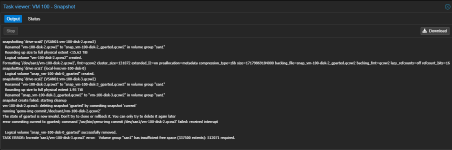

Here is the task log (I named the snapshot "gparted", but GParted itself was not used):

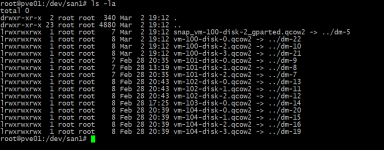

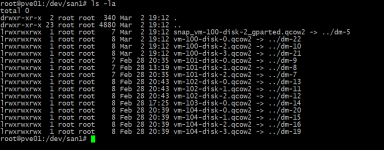

At the file level, I now see the following:

The VM itself doesn't display any snapshots.

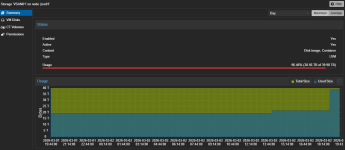

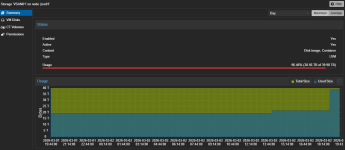

The SAN now looks like this (it was only partially filled before the snapshot attempt):

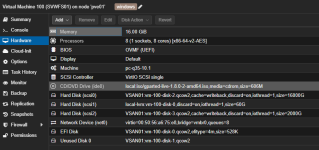

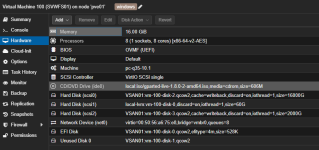

The affected VM has the following hardware:

Now I can't free up any storage space, and there are probably remnants of the failed snapshot left on the SAN.

Do you have any ideas on how I can clean this up?

Thanks in advance and best regards
