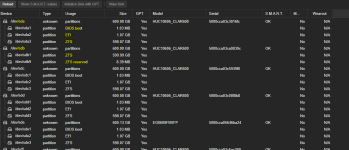

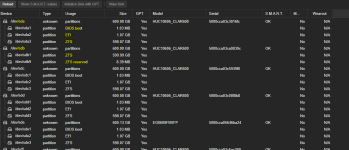

I believe the Proxmox VE installer does not normally allow installing onto SD cards. Is this ZFS pool named `rpool`? Maybe it was installed that way and the boot drive was changed later?

You could remove the drive, wipe it, re-insert it and then follow the "Changing a failed bootable device" procedure from the Proxmox VE documentation.
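The documented procedure boils down to a handful of commands. The sketch below only builds and prints them so they can be reviewed first; nothing is executed. It assumes `/dev/disk/by-id` paths and the default Proxmox VE ZFS layout (partition 2 = ESP, partition 3 = ZFS). The function names and device names are hypothetical, so check the partition numbers on your own disks before running anything by hand.

```python
"""Dry-run planner for the "Changing a failed bootable device" steps.

Assumptions (hypothetical, adjust to your system):
- disks are addressed via /dev/disk/by-id paths,
- partition 2 is the ESP and partition 3 holds the ZFS data,
  as on a default Proxmox VE ZFS install.
Nothing is executed; the commands are only returned for review.
"""


def part(disk: str, number: int) -> str:
    """Return the by-id path of a partition, e.g. <disk>-part3."""
    return f"{disk}-part{number}"


def replacement_plan(pool: str, healthy_disk: str, new_disk: str,
                     old_zfs_part: str) -> list[str]:
    """Build the ordered command list for replacing a bootable pool member."""
    return [
        # Copy the partition table of a healthy boot disk onto the new disk...
        f"sgdisk {healthy_disk} -R {new_disk}",
        # ...and give the copied table new random GUIDs.
        f"sgdisk -G {new_disk}",
        # Resilver the ZFS partition of the new disk into the pool.
        f"zpool replace -f {pool} {old_zfs_part} {part(new_disk, 3)}",
        # Create a fresh ESP and register it with proxmox-boot-tool.
        f"proxmox-boot-tool format {part(new_disk, 2)}",
        f"proxmox-boot-tool init {part(new_disk, 2)}",
    ]


if __name__ == "__main__":
    # Hypothetical device names, for illustration only.
    for cmd in replacement_plan(
        pool="rpool",
        healthy_disk="/dev/disk/by-id/ata-DISK_A",
        new_disk="/dev/disk/by-id/ata-DISK_B",
        old_zfs_part="/dev/disk/by-id/ata-DISK_B-part3",
    ):
        print(cmd)
```

For a wiped and re-inserted drive, the old and new ZFS partition can be the same path, which is why the example passes the same disk for both.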

Is the SD card the only EFI System Partition (ESP) active in `proxmox-boot-tool`? If so, you can also remove all the other ESPs.

You don't really have to fix anything here, except perhaps removing the old ESP's UUID from the `proxmox-boot-tool` configuration.
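To see which configured ESP UUIDs no longer point at an existing partition, a small read-only check like the one below could help. It assumes the configured ESPs are listed one UUID per line in `/etc/kernel/proxmox-boot-uuids` and that present filesystems appear under `/dev/disk/by-uuid`; the helper names are hypothetical. It only reports; `proxmox-boot-tool clean` is the tool's own way to drop entries that no longer exist, and `proxmox-boot-tool status` shows what is currently configured.

```python
"""Report ESP UUIDs in proxmox-boot-tool's list that no longer exist.

Assumption: the configured ESPs are listed one UUID per line in
/etc/kernel/proxmox-boot-uuids, and every present filesystem UUID
shows up as an entry under /dev/disk/by-uuid. This only reads files;
it never modifies the configuration.
"""
import os

CONFIG = "/etc/kernel/proxmox-boot-uuids"
BY_UUID = "/dev/disk/by-uuid"


def parse_uuids(text: str) -> list[str]:
    """Return the non-empty lines of the UUID list, whitespace-stripped."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def stale_uuids(configured: list[str], present: set[str]) -> list[str]:
    """Configured UUIDs with no matching device, in their original order."""
    return [u for u in configured if u not in present]


if __name__ == "__main__":
    if not (os.path.exists(CONFIG) and os.path.isdir(BY_UUID)):
        print(f"{CONFIG} or {BY_UUID} not found; is this a Proxmox VE host?")
    else:
        with open(CONFIG) as fh:
            configured = parse_uuids(fh.read())
        present = set(os.listdir(BY_UUID))
        for uuid in stale_uuids(configured, present):
            print(f"stale ESP entry: {uuid}")
```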

EDIT: Your system is already non-standard, with the SD card and with a RAIDz1 for VMs (which is terrible for running VMs, as its IOPS are very low compared to a stripe of mirrors).
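As a rough illustration of the IOPS point: a common rule of thumb is that each ZFS vdev delivers about the random IOPS of a single member disk, so pool IOPS scale with the number of vdevs, not the number of disks. The sketch below applies that assumption; the function names and the 150 IOPS per HDD figure are hypothetical, and real results depend on recordsize, caching and workload.

```python
"""Rough random-IOPS rule of thumb for ZFS pool layouts.

Assumption: each vdev delivers about the random IOPS of one member disk,
so pool IOPS scale with the number of vdevs rather than disks. Reads on
mirrors can do better, and real numbers depend on recordsize, caching and
workload; treat this only as an order-of-magnitude comparison.
"""


def raidz_pool_iops(disks: int, disks_per_vdev: int, disk_iops: float) -> float:
    """RAIDZ: about one disk's worth of random IOPS per vdev."""
    if disks % disks_per_vdev:
        raise ValueError("disks must divide evenly into vdevs")
    return (disks // disks_per_vdev) * disk_iops


def mirror_pool_iops(disks: int, disk_iops: float, width: int = 2) -> float:
    """Stripe of mirrors: about one disk's worth of random write IOPS per mirror."""
    if disks % width:
        raise ValueError("disks must divide evenly into mirrors")
    return (disks // width) * disk_iops


if __name__ == "__main__":
    # Hypothetical example: four HDDs at ~150 random IOPS each.
    print("RAIDZ1, 1 vdev of 4:", raidz_pool_iops(4, 4, 150))
    print("2 x 2-way mirrors:", mirror_pool_iops(4, 150))
```

With the same four disks, the mirror layout gets two vdevs and therefore roughly twice the random IOPS of a single RAIDZ1 vdev, and the gap grows as more mirror pairs are added.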