Hi there,

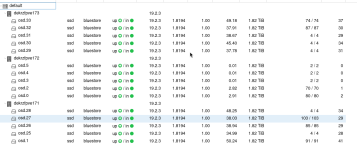

We are running a Proxmox VE (PVE) Ceph cluster. The current configuration is:

- 3 Nodes

- Each: 2 x 10 Gbit/s LACP to 4 switches (used only for Ceph)

- Each: 2 x Intel Xeon E5-2640, 2.6 GHz

- Each: 192 GB RAM

- Each: 5 x Crucial SATA SSDs (CT2000MX500SSD1) on an HBA

Code:

~# ceph osd perf

osd commit_latency(ms) apply_latency(ms)

5 3 3

27 99 99

1 85 85

30 85 85

25 4 4

2 114 114

31 100 100

26 4 4

28 4 4

4 3 3

33 124 124

0 83 83

29 81 81

3 3 3

32 4 4
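To make the split in the table above easier to see, here is a small sketch that groups the OSDs by commit latency. The values are copied from the `ceph osd perf` output; the 50 ms cut-off is only my assumption, picked because it separates the two groups cleanly, and is not a Ceph default.

```python
# Commit latency in ms per OSD id, copied from the `ceph osd perf` output above.
commit_latency_ms = {
    5: 3, 27: 99, 1: 85, 30: 85, 25: 4, 2: 114, 31: 100, 26: 4,
    28: 4, 4: 3, 33: 124, 0: 83, 29: 81, 3: 3, 32: 4,
}

# Assumed cut-off, chosen only to separate the two visible groups.
THRESHOLD_MS = 50

slow = sorted(osd for osd, ms in commit_latency_ms.items() if ms >= THRESHOLD_MS)
fast = sorted(osd for osd, ms in commit_latency_ms.items() if ms < THRESHOLD_MS)

print("slow OSDs:", slow)
print("fast OSDs:", fast)
```

Roughly half of the OSDs sit at 3 to 4 ms and the other half at 81 to 124 ms, with nothing in between.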

Code:

~# ceph config dump

WHO MASK LEVEL OPTION VALUE RO

mon advanced auth_allow_insecure_global_id_reclaim false

mgr advanced mgr/prometheus/rbd_stats_pools * *

mgr advanced mgr/prometheus/server_addr 0.0.0.0 *

mgr advanced mgr/prometheus/server_port 9283

osd.0 basic osd_mclock_max_capacity_iops_ssd 14103.653049

osd.1 basic osd_mclock_max_capacity_iops_ssd 29488.059277

osd.2 basic osd_mclock_max_capacity_iops_ssd 28081.425876

osd.25 basic osd_mclock_max_capacity_iops_ssd 33508.744911

osd.26 basic osd_mclock_max_capacity_iops_ssd 29059.514671

osd.27 basic osd_mclock_max_capacity_iops_ssd 28531.477981

osd.28 basic osd_mclock_max_capacity_iops_ssd 27336.937265

osd.29 basic osd_mclock_max_capacity_iops_ssd 28923.222275

osd.3 basic osd_mclock_max_capacity_iops_ssd 28222.454163

osd.30 basic osd_mclock_max_capacity_iops_ssd 24904.106530

osd.31 basic osd_mclock_max_capacity_iops_ssd 33027.761220

osd.32 basic osd_mclock_max_capacity_iops_ssd 30125.968423

osd.33 basic osd_mclock_max_capacity_iops_ssd 29039.451886

osd.4 basic osd_mclock_max_capacity_iops_ssd 13437.845129

osd.5 basic osd_mclock_max_capacity_iops_ssd 29437.110346

I can't see anything wrong on the network. Maximum utilisation is about 2 Gbit/s.

Maybe something is wrong with the block size?
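As a sanity check on the two `ceph tell osd.1 bench` results that follow, the reported `iops` should simply be `bytes_per_sec` divided by `blocksize`. This is only a sketch of that arithmetic using the values from the output; the helper name `iops_from_throughput` is my own and not part of any Ceph tooling.

```python
def iops_from_throughput(bytes_per_sec: float, blocksize: int) -> float:
    """Return the IOPS implied by a throughput at a given block size."""
    return bytes_per_sec / blocksize


# (bytes_per_sec, blocksize) pairs copied from the two bench outputs.
runs = [
    (240168280.38124242, 4194304),  # default 4 MiB blocks
    (53485833.423020475, 4096),     # 4 KiB blocks
]

for bytes_per_sec, blocksize in runs:
    iops = iops_from_throughput(bytes_per_sec, blocksize)
    print(f"blocksize={blocksize} B  {bytes_per_sec / 1e6:.1f} MB/s  {iops:.1f} IOPS")
```

Both results match the `iops` fields in the output, so the numbers are at least internally consistent for each block size.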

Code:

~ # ceph tell osd.1 bench

{

"bytes_written": 1073741824,

"blocksize": 4194304,

"elapsed_sec": 4.4707894909999997,

"bytes_per_sec": 240168280.38124242,

"iops": 57.260580153761488

}

~ # ceph tell osd.1 bench 12288000 4096

{

"bytes_written": 12288000,

"blocksize": 4096,

"elapsed_sec": 0.22974307799999999,

"bytes_per_sec": 53485833.423020475,

"iops": 13058.064800542108

}

But rados bench seems to work fine:

Code:

~ # rados bench -p vmdata-test 60 write --object_size=16MB

hints = 1

Maintaining 16 concurrent writes of 4194304 bytes to objects of size 16777216 for up to 60 seconds or 0 objects

Object prefix: benchmark_data_dekrzfpve172.m350d4.local_2947169

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

0 0 0 0 0 0 - 0

1 16 64 48 191.966 192 0.159245 0.226872

2 16 130 114 227.964 264 0.129076 0.263465

3 16 188 172 229.296 232 0.190153 0.268754

4 16 244 228 227.964 224 0.149965 0.269745

5 16 313 297 237.563 276 0.153686 0.2619

6 16 408 392 261.292 380 0.147233 0.242496

7 16 452 436 249.103 176 0.161968 0.239273

8 16 521 505 252.459 276 0.131404 0.246513

9 16 609 593 263.514 352 0.158967 0.239661

10 16 675 659 263.559 264 0.15734 0.240216

11 16 740 724 263.232 260 0.186306 0.240057

12 16 816 800 266.626 304 0.190017 0.237839

13 16 908 892 274.42 368 0.145102 0.232077

14 16 993 977 279.101 340 0.137637 0.227651

15 16 1060 1044 278.359 268 0.180164 0.228952

16 16 1142 1126 281.458 328 0.187289 0.225321

17 16 1223 1207 283.958 324 0.142261 0.224037

18 16 1300 1284 285.292 308 0.164915 0.223076

19 16 1384 1368 287.958 336 0.27237 0.221085

2026-02-24T08:08:19.821984+0100 min lat: 0.0867595 max lat: 0.896916 avg lat: 0.219436

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

20 16 1468 1452 290.359 336 0.218903 0.219436

21 16 1552 1536 292.529 336 0.279236 0.217773

22 16 1603 1587 288.504 204 0.134028 0.221397

23 16 1684 1668 290.046 324 0.272487 0.219583

24 16 1764 1748 291.292 320 0.178104 0.217464

25 16 1857 1841 294.517 372 0.193856 0.216869

26 16 1945 1929 296.726 352 0.109291 0.215212

27 16 2036 2020 299.215 364 0.165189 0.213234

28 16 2118 2102 300.242 328 0.251923 0.212245

29 16 2213 2197 302.99 380 0.143125 0.210969

30 16 2244 2228 297.023 124 0.211594 0.210369

31 16 2324 2308 297.763 320 0.141827 0.214227

32 16 2416 2400 299.956 368 0.137237 0.212851

33 16 2492 2476 300.077 304 0.234285 0.212307

34 16 2575 2559 301.015 332 0.123825 0.21169

35 16 2662 2646 302.356 348 0.223174 0.211221

36 16 2744 2728 303.068 328 0.215484 0.20999

37 16 2806 2790 301.579 248 0.136269 0.209555

38 16 2894 2878 302.904 352 0.234224 0.210683

39 16 2970 2954 302.931 304 0.149764 0.209496

2026-02-24T08:08:39.824871+0100 min lat: 0.0712509 max lat: 0.911975 avg lat: 0.209701

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

40 16 3062 3046 304.556 368 0.131103 0.209701

41 16 3141 3125 304.834 316 0.163803 0.209185

42 16 3223 3207 305.384 328 0.14628 0.208901

43 16 3308 3292 306.188 340 0.156289 0.208613

44 16 3396 3380 307.228 352 0.170044 0.207929

45 16 3481 3465 307.955 340 0.168001 0.20722

46 16 3554 3538 307.607 292 0.152485 0.20772

47 16 3628 3612 307.359 296 0.156532 0.208084

48 16 3704 3688 307.288 304 0.145656 0.207882

49 16 3780 3764 307.22 304 0.179062 0.208009

50 16 3862 3846 307.635 328 0.14893 0.207506

51 16 3953 3937 308.739 364 0.228471 0.206915

52 16 4033 4017 308.955 320 0.206297 0.206616

53 16 4102 4086 308.333 276 0.171784 0.206904

54 16 4197 4181 309.659 380 0.131003 0.206404

55 16 4258 4242 308.464 244 0.0744125 0.206071

56 16 4344 4328 309.098 344 0.106985 0.206624

57 16 4436 4420 310.13 368 0.175133 0.206044

58 16 4520 4504 310.576 336 0.167728 0.205802

59 16 4584 4568 309.65 256 0.150262 0.206083

2026-02-24T08:08:59.827798+0100 min lat: 0.058507 max lat: 0.911975 avg lat: 0.206958

sec Cur ops started finished avg MB/s cur MB/s last lat(s) avg lat(s)

60 16 4653 4637 309.089 276 0.225592 0.206958

Total time run: 60.1962

Total writes made: 4653

Write size: 4194304

Object size: 16777216

Bandwidth (MB/sec): 309.189

Stddev Bandwidth: 53.9634

Max bandwidth (MB/sec): 380

Min bandwidth (MB/sec): 124

Average IOPS: 77

Stddev IOPS: 13.4909

Max IOPS: 95

Min IOPS: 31

Average Latency(s): 0.206948

Stddev Latency(s): 0.110647

Max latency(s): 0.911975

Min latency(s): 0.058507

Cleaning up (deleting benchmark objects)

Removed 1164 objects

Clean up completed and total clean up time :6.84856

rados bench -p vmdata-test 60 write --object_size=16MB 2,96s user 6,57s system 13% cpu 1:08,13 total

I have tried everything I know, without success.

Can you please help me? Maybe there is a simple solution.

Thanks and best wishes!