So, over a long period I've had intermittent issues with one of my four nodes being fenced and going unresponsive. I think I've narrowed the problem down to some basic networking issues that I've never really dealt with, and I'm trying to figure out best practices.

I've been told that "storage communication should never be on the same network as corosync!" Generally speaking, that is exactly what I've done on all of my nodes: lots of traffic, all on the same 172.16.120.0/24 network.

I've tried to rectify this issue, but I'm not sure if what I've done will be sufficient.

My cluster nodes all have two NICs: one 1G and one 2.5G. Initially, all traffic went through the 2.5G NICs. To separate out the corosync network, I moved the management interface from the 2.5G NIC to a new bridge on the 1G NIC whose bridge port is a VLAN interface (I followed this post).
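As a rough way to confirm where the management address ended up, here is a minimal Python sketch that reads bridge stanzas from a trimmed excerpt of the `/etc/network/interfaces` file in this post and reports which physical NIC and VLAN each addressed bridge sits on. It assumes the simple layout used here (an `iface` line followed by its indented options) and the `parent.vlan` naming of VLAN sub-interfaces such as `eno1.120`. `parse_stanzas`, `split_vlan` and `addressed_bridges` are hypothetical helpers, not part of ifupdown2 or Proxmox, and the sketch ignores `source` includes.

```python
import re

# Trimmed excerpt of prox4's /etc/network/interfaces, copied from the post.
INTERFACES = """
auto vmbr0
iface vmbr0 inet manual
        bridge-ports enp1s0
        bridge-vlan-aware yes

auto vmbr2
iface vmbr2 inet manual
        bridge-ports eno1
        bridge-vlan-aware yes

auto vmbr2v120
iface vmbr2v120 inet static
        address 172.16.120.36/24
        gateway 172.16.120.1
        bridge-ports eno1.120
"""


def parse_stanzas(text):
    """Map each 'iface' name to its option lines as {option: [values]}."""
    stanzas = {}
    current = None
    for raw in text.splitlines():
        words = raw.split()
        if not words or words[0].startswith("#"):
            continue  # skip blank lines and comments
        if words[0] == "iface":
            current = stanzas.setdefault(words[1], {})
        elif words[0] in ("auto", "allow-hotplug", "source"):
            current = None  # these keywords are not options of an iface stanza
        elif current is not None:
            current[words[0]] = words[1:]
    return stanzas


def split_vlan(port):
    """'eno1.120' -> ('eno1', 120); a plain NIC name -> (name, None)."""
    m = re.fullmatch(r"(.+)\.(\d+)", port)
    return (m.group(1), int(m.group(2))) if m else (port, None)


def addressed_bridges(stanzas):
    """List (bridge, host address, physical NIC, VLAN) for bridges with an IP."""
    rows = []
    for name, opts in stanzas.items():
        if "address" in opts and "bridge-ports" in opts:
            for port in opts["bridge-ports"]:
                nic, vlan = split_vlan(port)
                rows.append((name, opts["address"][0], nic, vlan))
    return rows


if __name__ == "__main__":
    for row in addressed_bridges(parse_stanzas(INTERFACES)):
        print(row)
```

Run against the excerpt, it reports only `vmbr2v120` with `172.16.120.36/24` on `eno1`, VLAN 120, because vmbr0 and vmbr2 carry no host address. Going by the description above, eno1 should be the 1G NIC, but the script cannot verify that mapping.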

Now, I believe all of my corosync traffic will go over the 1G NIC while VM and data traffic stays on the 2.5G NIC.

Will this setup achieve the goal of keeping storage communication off the corosync network? The VMs and the management interface are still on the 172.16.120.0/24 network, but management is now on a completely different NIC.
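The expectation that corosync traffic now uses the 1G NIC rests on how the host picks an outgoing interface: the most specific route containing each peer's `ring0_addr` wins. Below is a minimal Python sketch of that longest-prefix match, assuming prox4's only host routes are the connected 172.16.120.0/24 route and the default route via 172.16.120.1, both on vmbr2v120 (the interfaces file in this post gives vmbr0 no host address). `HOST_ROUTES` and `egress_interface` are hypothetical; on a real node, `ip route get 172.16.120.37` reports the kernel's actual choice. The sketch models only the host's own traffic, not what VMs on vmbr0 send into the same subnet.

```python
import ipaddress

# Hypothetical host routing table for prox4, derived from /etc/network/interfaces:
# vmbr2v120 holds 172.16.120.36/24 (connected route) and the default gateway.
# vmbr0 and vmbr2 have no host address, so they contribute no host routes.
HOST_ROUTES = [
    # (destination prefix, outgoing interface)
    (ipaddress.ip_network("172.16.120.0/24"), "vmbr2v120"),
    (ipaddress.ip_network("0.0.0.0/0"), "vmbr2v120"),  # via gateway 172.16.120.1
]

# ring0_addr of every node, copied from corosync.conf
RING0_ADDRS = {
    "prox4": "172.16.120.36",
    "prox5": "172.16.120.37",
    "prox7": "172.16.120.34",
    "pve1z440": "172.16.120.39",
}


def egress_interface(dest, routes):
    """Return the interface of the most specific route containing dest, or None."""
    addr = ipaddress.ip_address(dest)
    matches = [(net, dev) for net, dev in routes if addr in net]
    if not matches:
        return None
    # Longest-prefix match: the route with the largest prefix length wins.
    _, dev = max(matches, key=lambda m: m[0].prefixlen)
    return dev


if __name__ == "__main__":
    for name, ip in RING0_ADDRS.items():
        if name == "prox4":
            continue  # skip the local node itself
        # vmbr2v120 bridges eno1.120, so these packets leave on eno1
        print(f"{name} ({ip}) -> {egress_interface(ip, HOST_ROUTES)}")
```

With only these two routes every peer resolves to vmbr2v120. Adding a more specific route on another interface (for example a /32 on vmbr0) would override it, which is why checking the real table with `ip route get` matters.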

If it helps, here is my /etc/pve/corosync.conf:

And here is /etc/network/interfaces:

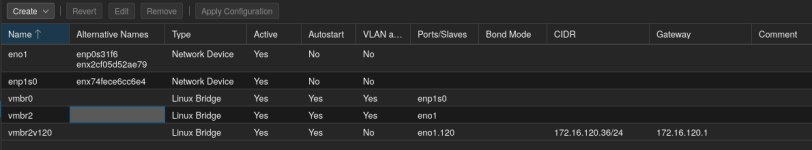

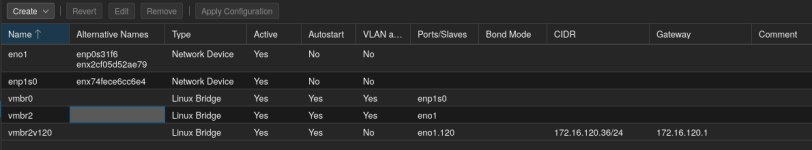

And finally, here is what a node generally looks like:

If it helps, here is my /etc/pve/corosync.conf:

```
logging {
  debug: off
  to_syslog: yes
}

nodelist {
  node {
    name: prox4
    nodeid: 4
    quorum_votes: 1
    ring0_addr: 172.16.120.36
  }
  node {
    name: prox5
    nodeid: 3
    quorum_votes: 1
    ring0_addr: 172.16.120.37
  }
  node {
    name: prox7
    nodeid: 2
    quorum_votes: 1
    ring0_addr: 172.16.120.34
  }
  node {
    name: pve1z440
    nodeid: 1
    quorum_votes: 1
    ring0_addr: 172.16.120.39
  }
}

quorum {
  provider: corosync_votequorum
}

totem {
  cluster_name: congregation
  config_version: 12
  interface {
    linknumber: 0
  }
  ip_version: ipv4-6
  link_mode: passive
  secauth: on
  version: 2
}
```

And here is /etc/network/interfaces:

```
auto lo
iface lo inet loopback

iface enp1s0 inet manual

iface eno1 inet manual

auto vmbr0
iface vmbr0 inet manual
        bridge-ports enp1s0
        bridge-stp off
        bridge-fd 0
        bridge-vlan-aware yes
        bridge-vids 2-4094

auto vmbr2
iface vmbr2 inet manual
        bridge-ports eno1
        bridge-stp off
        bridge-fd 0
        bridge-vlan-aware yes
        bridge-vids 2-4094

auto vmbr2v120
iface vmbr2v120 inet static
        address 172.16.120.36/24
        gateway 172.16.120.1
        bridge-ports eno1.120
        bridge-stp off
        bridge-fd 0

source /etc/network/interfaces.d/*
```